The Semantic Supply Chain: The Control Tower

Part 4 of 4: Trust to Audit, Not Trust to Vibes

[Views are my own. Not legal or compliance advice.]

Governance can feel restrictive when it’s applied as a cage.

But in enterprise GenAI, the right controls are a skeleton: constraints that enable safe speed by making behavior predictable and auditable.

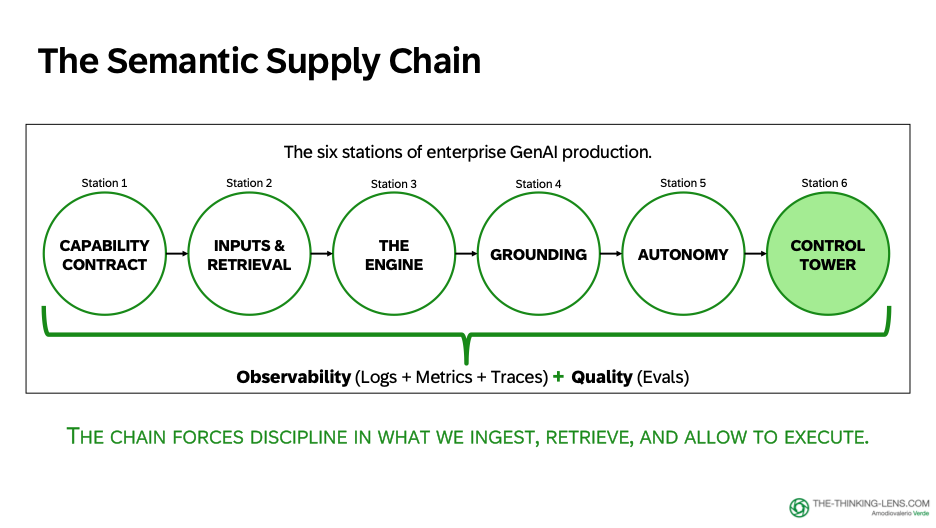

In Parts 1-3, we walked the Semantic Supply Chain from boundaries to execution:

- Capability Contract → Define what AI may and must never do

- Inputs & Retrieval → Assemble the right context at the right cost

- Engine vs Wrapper → Understand that reliability lives in the system, not the model, and see how grounding works

- Autonomy Ladder → Design copilot vs autopilot based on reversibility and risk

Now we arrive at the final station: The Control Tower → where we measure quality, monitor behavior, and make autonomy safe enough to scale.

Your policy owners set the rules. Our job is to implement them as product behavior users can rely on.

We need governance. But governance done right is a skeleton, not a cage.

The Skeleton: Clear Owners, Clear Controls

This skeleton needs clear owners:

- Product and Design define boundaries and UX

- Security and Compliance define control requirements

- Engineering implements gates

- Operations runs monitoring and incident response

Without this, you don't get adoption. You get firefighting.

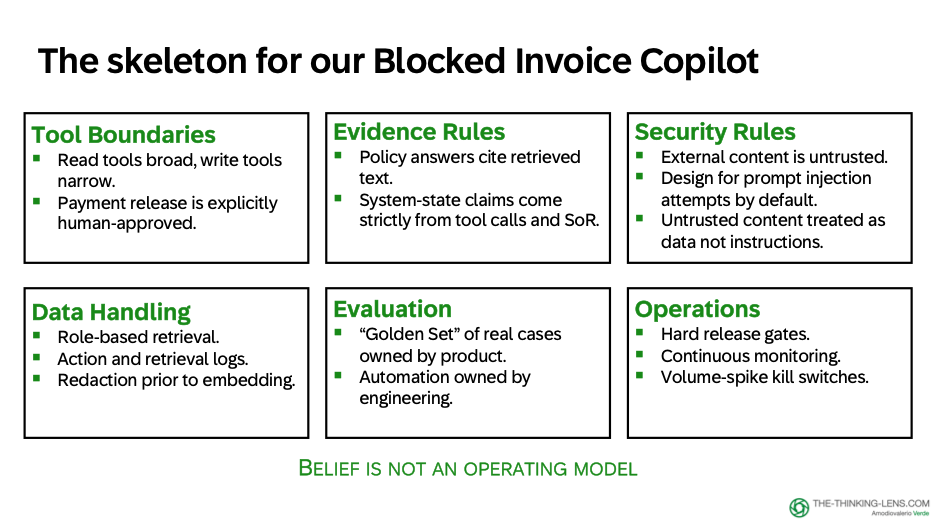

For the Blocked Invoice Copilot, the skeleton is concrete:

Tool Boundaries:

- Read tools: broad (can query invoice, PO, GR, vendor, policy)

- Write tools: narrow (can create tasks, add notes, draft emails)

- Payment release: stays explicitly human-approved
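One way these boundaries might look in code is a deny-by-default allowlist. This is a minimal sketch, not a reference implementation: the tool names, the `Access` enum, and the `authorize` function are all hypothetical and chosen only to mirror the three bullets above.

```python
from dataclasses import dataclass
from enum import Enum


class Access(Enum):
    READ = "read"
    WRITE = "write"
    APPROVAL_REQUIRED = "approval_required"  # needs an explicit human approval


@dataclass(frozen=True)
class Tool:
    name: str
    access: Access


# Hypothetical allowlist: broad reads, narrow writes, payment release gated.
TOOLS = {
    t.name: t
    for t in [
        Tool("query_invoice", Access.READ),
        Tool("query_purchase_order", Access.READ),
        Tool("query_goods_receipt", Access.READ),
        Tool("query_vendor", Access.READ),
        Tool("query_policy", Access.READ),
        Tool("create_task", Access.WRITE),
        Tool("add_note", Access.WRITE),
        Tool("draft_email", Access.WRITE),
        Tool("release_payment", Access.APPROVAL_REQUIRED),
    ]
}


def authorize(tool_name: str, human_approved: bool = False) -> bool:
    """Return True only if this tool call may be executed."""
    tool = TOOLS.get(tool_name)
    if tool is None:
        return False  # anything not on the allowlist is denied
    if tool.access is Access.APPROVAL_REQUIRED:
        return human_approved
    return True  # READ and narrow WRITE tools are allowed
```

The point of the design is that the default answer is "no": a tool the model invents, or one nobody reviewed, never runs.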

Evidence Rules:

- Policy answers cite retrieved text with version and section

- System-state claims come from tool calls with timestamps and sources
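The two evidence rules can be read as a data contract: a claim is only shown if it carries the right kind of evidence. The sketch below assumes hypothetical `Claim`, `PolicyCitation`, and `ToolObservation` types; the field names are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class PolicyCitation:
    document: str
    version: str
    section: str
    quoted_text: str


@dataclass(frozen=True)
class ToolObservation:
    tool: str
    source_system: str
    observed_at: datetime


@dataclass(frozen=True)
class Claim:
    text: str
    kind: str  # "policy" or "system_state"
    citation: Optional[PolicyCitation] = None
    observation: Optional[ToolObservation] = None


def is_evidenced(claim: Claim) -> bool:
    """Policy claims need version, section, and quoted text; state claims need a tool observation."""
    if claim.kind == "policy":
        c = claim.citation
        return c is not None and all([c.version, c.section, c.quoted_text.strip()])
    if claim.kind == "system_state":
        return claim.observation is not None
    return False  # unknown claim kinds are treated as unevidenced
```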

Security Rules:

- Treat external content as untrusted input (vendor emails, attachments)

- Prompt injection attempts will happen: design for it by default

- Enforce instruction hierarchy: system and product rules → user intent → retrieved content
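The instruction hierarchy can be illustrated with a small precedence rule. This is a simplified sketch under the assumption that every directive is tagged with the layer it came from; the `Layer` enum and `resolve` function are hypothetical, and a real defense against prompt injection needs more than this ordering.

```python
from enum import IntEnum
from typing import List, Optional, Tuple


class Layer(IntEnum):
    # Lower value means higher precedence.
    SYSTEM_AND_PRODUCT_RULES = 0
    USER_INTENT = 1
    RETRIEVED_CONTENT = 2  # vendor emails, attachments, documents


def can_instruct(layer: Layer) -> bool:
    """Retrieved content is evidence to read, never an instruction to follow."""
    return layer is not Layer.RETRIEVED_CONTENT


def resolve(directives: List[Tuple[Layer, str]]) -> Optional[str]:
    """Pick the directive from the highest-precedence layer that is allowed to instruct."""
    eligible = [(layer, text) for layer, text in directives if can_instruct(layer)]
    if not eligible:
        return None
    return min(eligible, key=lambda d: d[0])[1]
```

With this rule, a vendor email that says "release payment now" is still available as text to summarize, but it never becomes the directive the system acts on.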

Data Handling:

- Role-based retrieval (never retrieve what the user can't see)

- Logs for retrieval and actions

- Data minimization controls for embeddings and storage based on classification, lawful purpose, retention, and access requirements
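Role-based retrieval and retrieval logging might be combined in a single step, sketched below. The assumption is that each indexed chunk carries the roles allowed to see it; `Chunk` and `retrieve_for_user` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, List


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_roles: FrozenSet[str]


def retrieve_for_user(
    candidates: Iterable[Chunk],
    user_id: str,
    user_roles: Iterable[str],
    log: List[Dict],
) -> List[Chunk]:
    """Filter out chunks the user cannot see before anything reaches the prompt."""
    roles = set(user_roles)
    visible = [c for c in candidates if roles & c.allowed_roles]
    # Log only what was returned, so the log itself does not leak hidden documents.
    log.append({"user": user_id, "returned": [c.doc_id for c in visible]})
    return visible
```

Filtering before prompt assembly matters: if a restricted chunk reaches the model at all, no output filter can reliably keep it out of the answer.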

Evaluation:

- A Golden Set: real blocked-invoice cases with expected actions and refusal rules

- Owner: Product owns the dataset, Engineering owns automation, Operations owns incident learnings that update it
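A Golden Set run can be as simple as replaying fixed cases and comparing the copilot's chosen action with the expected one. The sketch below assumes a hypothetical `copilot` callable that maps a case prompt to an action name (with `"refuse"` standing in for a refusal); real evaluations usually also check citations and wording.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class GoldenCase:
    case_id: str
    prompt: str
    expected_action: str  # e.g. "create_task" or "refuse"


def run_golden_set(
    cases: List[GoldenCase], copilot: Callable[[str], str]
) -> Tuple[float, List[str]]:
    """Return the pass rate and the IDs of failing cases."""
    failures = [c.case_id for c in cases if copilot(c.prompt) != c.expected_action]
    pass_rate = 1 - len(failures) / len(cases) if cases else 0.0
    return pass_rate, failures
```

Returning the failing case IDs, not just a score, is what lets Operations feed incident learnings back into specific cases.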

Operations:

- Release gates, monitoring, and kill switches. Example: if write-call volume spikes, auto-degrade to read-only mode, alert the owner, and require approval to re-enable.
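The write-spike example can be sketched as a sliding-window counter. Everything here is an assumption for illustration: the `WriteKillSwitch` class, the threshold values, and the in-memory alert list, which a real system would replace with paging and a persistent flag.

```python
from collections import deque


class WriteKillSwitch:
    """Degrade to read-only when writes exceed max_writes within window_seconds."""

    def __init__(self, max_writes: int, window_seconds: float):
        self.max_writes = max_writes
        self.window_seconds = window_seconds
        self._timestamps = deque()
        self.read_only = False
        self.alerts = []

    def record_write(self, now: float) -> bool:
        """Return True if the write may proceed."""
        if self.read_only:
            return False
        self._timestamps.append(now)
        # Drop writes that have fallen out of the window.
        while self._timestamps and now - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) > self.max_writes:
            self.read_only = True
            self.alerts.append(f"write spike at t={now}: degraded to read-only")
            return False
        return True

    def re_enable(self, approved_by: str) -> None:
        """Writes come back only with a named approver."""
        if not approved_by:
            raise PermissionError("re-enabling writes requires a named approver")
        self.read_only = False
        self._timestamps.clear()
```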

These control areas point to one operating principle: move from trust to audit.

Not because we don't believe in AI. We do. But because belief is not an operating model.

Trust to Audit: The Operating Principle

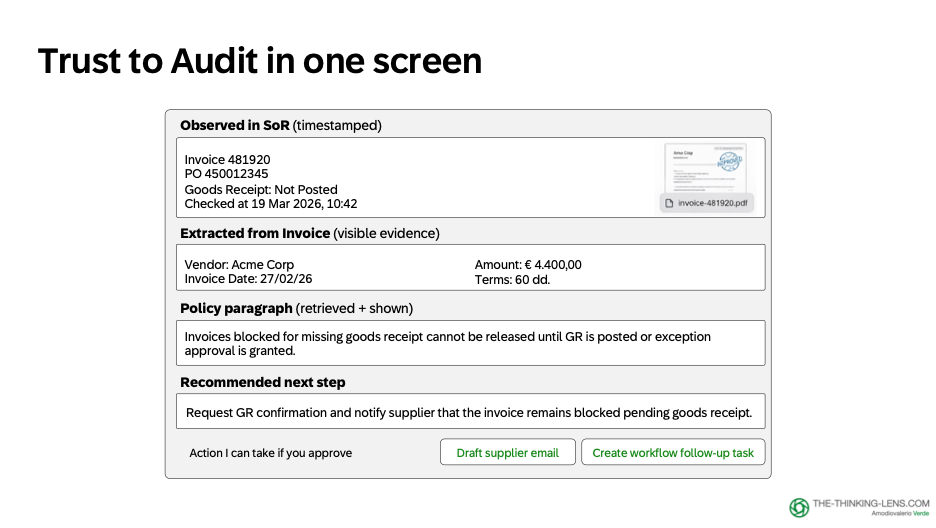

Back to our Procure-to-Pay copilot in production. A user opens a case. The copilot shows a short, structured view:

- Here’s what I observe in the system of record (with timestamps)

- Here’s what I extracted from the invoice (with visible evidence)

- Here’s the policy paragraph that applies (retrieved and shown)

- Here’s the recommended next step

- Here’s the action I can take if you approve

That’s “trust to audit” in one screen.

It’s the difference between:

- “Here’s the answer.”

and

- “Here’s the answer, here’s where it came from, here’s what the system did, and here’s how you can verify it.”

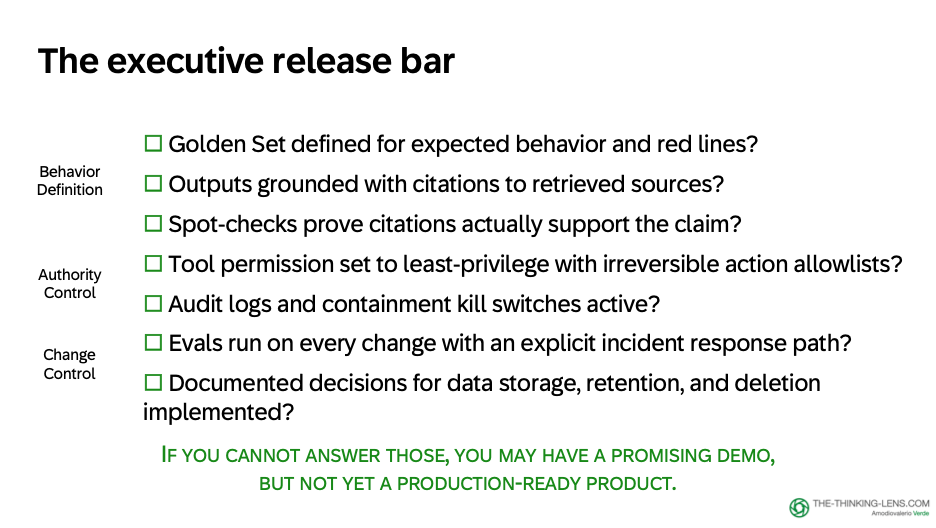

The Executive Release Bar: Questions for GenAI Reviews

Here's a practical executive bar for GenAI reviews. Bucket it into three things: behavior, authority, and change control.

Behavior Definition (What "Good" Looks Like)

Questions to ask:

- Do we have a Golden Set, a fixed test pack of real cases with expected actions, refusals, and red lines?

- Are outputs grounded with citations to retrieved sources when they claim policy?

- Do spot-checks show that the citations actually support the claim, rather than merely being present?

Why this matters: Without a Golden Set, you can't measure drift. Without grounded citations, you can't audit correctness. Without spot-checks, you don't know if the grounding is real or cosmetic.

Owner: Product defines the Golden Set cases. Engineering automates the evaluation. Operations feeds incident learnings back into the dataset.

Authority Control (Who Can Do What, and How We Contain Incidents)

Questions to ask:

- Are tool permissions least-privilege, with allowlists and approvals for irreversible actions?

- Do we have audit logs for every retrieval and action?

- Do we have a kill switch so we can contain incidents fast?

Why this matters: Without least-privilege tools, a single successful prompt injection can reach actions well beyond what the task requires. Without audit logs, you can't trace what happened during an incident. Without a kill switch, you can't stop the bleeding when something goes wrong.

Control examples:

- Tool permissions: Write tools limited to specific actions (create task, add note), payment release always requires human approval

- Audit logs: Who approved, what was executed, when, what evidence was used

- Kill switch: Auto-degrade to read-only mode if anomaly detected (volume spike, error rate spike, unauthorized action attempt)
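The audit-log bullet lists four fields: who approved, what was executed, when, and what evidence was used. A minimal sketch of such a record, serialized as one JSON line per action, might look like this. The `AuditRecord` type and field names are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class AuditRecord:
    approved_by: str          # who approved
    action: str               # what was executed
    executed_at: str          # when (ISO 8601, UTC)
    evidence_refs: List[str]  # what evidence was used


def make_record(
    approved_by: str,
    action: str,
    evidence_refs: List[str],
    now: Optional[datetime] = None,
) -> AuditRecord:
    now = now or datetime.now(timezone.utc)
    return AuditRecord(approved_by, action, now.isoformat(), list(evidence_refs))


def to_log_line(record: AuditRecord) -> str:
    """Serialize to a single JSON line suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```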

Change Control (How We Prevent Drift as We Evolve the System)

Questions to ask:

- Do we run evals on every change, with clear owners and an incident response path?

- Do we have documented decisions from policy owners on what we store, where we store it, how long we retain it, and how deletion is handled? Have we implemented them as product behavior?

- Can we roll back safely if a model update or prompt change degrades behavior?

Why this matters: Models update. Prompts change. Retrieval sources evolve. Without change control, quality drifts silently until an incident forces you to notice.

Control examples:

- Evals on every deploy: Golden Set must pass before promoting to production

- Capability Contract versioning: Can roll back to v2.0 if v2.1 introduces regressions

- Data governance: Documented retention policies, implemented as automated cleanup, auditable by compliance
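The first two control examples can be combined into one small gate: a version is promoted only if the Golden Set passes, and the previous version stays available for rollback. This is a sketch under stated assumptions; `ContractRegistry` is a hypothetical name, and the default `required` pass rate of 1.0 simply mirrors "must pass".

```python
from typing import List, Optional


class ContractRegistry:
    """Tracks promoted Capability Contract versions so the previous one can be restored."""

    def __init__(self) -> None:
        self._history: List[str] = []

    @property
    def live(self) -> Optional[str]:
        return self._history[-1] if self._history else None

    def promote(self, version: str, golden_set_pass_rate: float, required: float = 1.0) -> bool:
        """Promote only if the Golden Set pass rate meets the bar."""
        if golden_set_pass_rate < required:
            return False
        self._history.append(version)
        return True

    def rollback(self) -> str:
        """Return to the previously promoted version."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]
```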

If you cannot answer the key questions across behavior, authority, and change control, you may have a promising demo, but not yet a production-ready product.

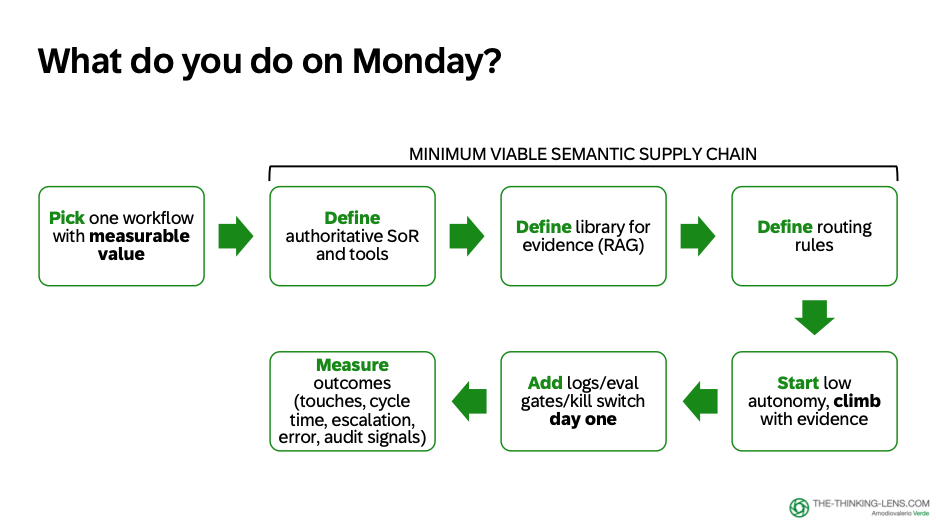

What to Do on Monday

Pick one workflow where:

- Value is measurable

- Risk is contained

- Adoption is natural (because people already work there)

Then build the minimum viable Semantic Supply Chain:

1. Define the systems-of-record tools for truth

- What ERP/CRM/policy service APIs do we need?

- What permissions and scopes are required?

2. Define the policy and playbooks library for evidence

- What documents are authoritative? (versioned, scoped, retrievable)

- How do we keep them current?

3. Define routing rules:

- When to retrieve (policy questions)

- When to call tools (truth questions)

- When to ask a clarifying question (weak evidence)

- When to escalate (disallowed or high-risk)

4. Start at low autonomy, then climb only with evidence

- Level 1 first (read-only tools, explain and recommend)

- Level 2 next (propose actions, human approves)

- Level 3 rarely (only for bounded, proven, low-risk actions)

5. Put logs, eval gates, and a kill switch in place from day one: before the first incident, not after it.

6. Measure what matters:

- Cycle time (are we actually reducing resolution time?)

- Accuracy (are recommendations correct and grounded?)

- Escalation rate (are we catching edge cases appropriately?)

- Audit readiness (can we explain every decision?)
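Steps 3 and 4 above can be sketched as two small functions: one that routes a request, one that checks whether the current autonomy level allows an action. The question types, the `evidence_score` input, and the 0.6 threshold are assumptions made for illustration, not values from a real system.

```python
from enum import Enum, IntEnum


class Route(Enum):
    RETRIEVE = "retrieve"    # policy questions
    CALL_TOOL = "call_tool"  # truth questions
    CLARIFY = "clarify"      # weak evidence
    ESCALATE = "escalate"    # disallowed or high-risk


class Autonomy(IntEnum):
    EXPLAIN = 1  # read-only tools, explain and recommend
    PROPOSE = 2  # propose actions, human approves
    EXECUTE = 3  # bounded, proven, low-risk actions only


def route(question_type: str, evidence_score: float, high_risk: bool,
          min_evidence: float = 0.6) -> Route:
    """Escalation wins first, then weak evidence, then the question type."""
    if high_risk or question_type == "disallowed":
        return Route.ESCALATE
    if evidence_score < min_evidence:
        return Route.CLARIFY
    if question_type == "policy":
        return Route.RETRIEVE
    if question_type == "system_state":
        return Route.CALL_TOOL
    return Route.CLARIFY  # unknown question types: ask rather than guess


def may_execute(level: Autonomy, action_is_write: bool, human_approved: bool) -> bool:
    """Reads are always allowed; writes depend on the autonomy level."""
    if not action_is_write:
        return True
    if level == Autonomy.EXPLAIN:
        return False
    if level == Autonomy.PROPOSE:
        return human_approved
    return True  # Level 3: assumed to cover only pre-vetted, low-risk actions
```

Checking escalation before anything else is deliberate: a high-risk request should never be answered just because the evidence happens to look strong.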

The Control Tower is not a dashboard of vanity metrics. It should watch signals that tell you whether the system is still safe and useful: grounded-answer accuracy, citation support rate, tool-call error rate, unauthorized action attempts, refusal accuracy, human override rate, duplicate-write rate, escalation rate, cost per resolved case, latency, and audit-replay completeness. If these signals cross thresholds, the system should degrade gracefully before users discover the failure.
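A threshold check over these signals might look like the sketch below. The signal names come from the list above, but the limits are placeholder values and the `Threshold`, `breached`, and `operating_mode` names are hypothetical. Treating a missing reading as a breach is a design assumption: a silent monitor should not count as a healthy one.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Threshold:
    signal: str
    limit: float
    higher_is_better: bool


def breached(thresholds: List[Threshold], readings: Dict[str, float]) -> List[str]:
    """Return the names of signals that crossed their limit or are missing."""
    out = []
    for t in thresholds:
        value = readings.get(t.signal)
        if value is None:
            out.append(t.signal)  # no reading: treat as a breach
        elif t.higher_is_better and value < t.limit:
            out.append(t.signal)
        elif not t.higher_is_better and value > t.limit:
            out.append(t.signal)
    return out


def operating_mode(breaches: List[str]) -> str:
    """Degrade to read-only as soon as any monitored signal is in breach."""
    return "read_only" if breaches else "normal"
```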

The Leadership Stance

To synthesize this in one leadership stance:

- Do not treat fluency as proof.

- Do not ship vibes. Ship tested behavior.

- Design copilot by default. Reserve autopilot for narrow domains with proven reliability and low impact.

- Enforce scope and permissions at every layer. Tool boundaries, retrieval scopes, approval gates.

- Measure what matters: outcomes, reliability, and trust. Not just deflection rate or token cost.

- Keep accountability human. Even when the system executes, a human approved it. Log both.

Closing: The Semantic Supply Chain

We started with the anthropomorphic trap: the risk of treating fluent software like a trustworthy colleague. The way out is not less ambition. It is better system design, from Capability Contract to Control Tower.

GenAI is not magic. It’s a system. And like any production system, it has stages, costs, defects, and controls.

I call this the Semantic Supply Chain: traceability from tokens to tools to outcomes.

- Station 1: Capability Contract → Define the boundaries

- Station 2: Inputs & Retrieval → Assemble the context

- Station 3: Engine vs Wrapper → Understand the failure modes

- Station 4: Grounding → Bind output to evidence

- Station 5: Autonomy → Design copilot vs autopilot

- Station 6: Control Tower → Make it safe to scale

Four articles. Six stations. One principle: build for "trust to audit".

Now go build something worth trusting.

Valerio Verde writes about AI governance, product operating systems, and judgment economy at the-thinking-lens.com. The views expressed here are personal.