The Judgment Economy (Part 3/4): Credibility vs. Plausibility

This is the central tension for every enterprise. Generic AI is built for plausibility (it sounds correct). Enterprise AI must be built for credibility (it is correct, auditable, and grounded in your business data). This requires a new, essential human function, Level 3: The Trust Broker.

[Views are my own]

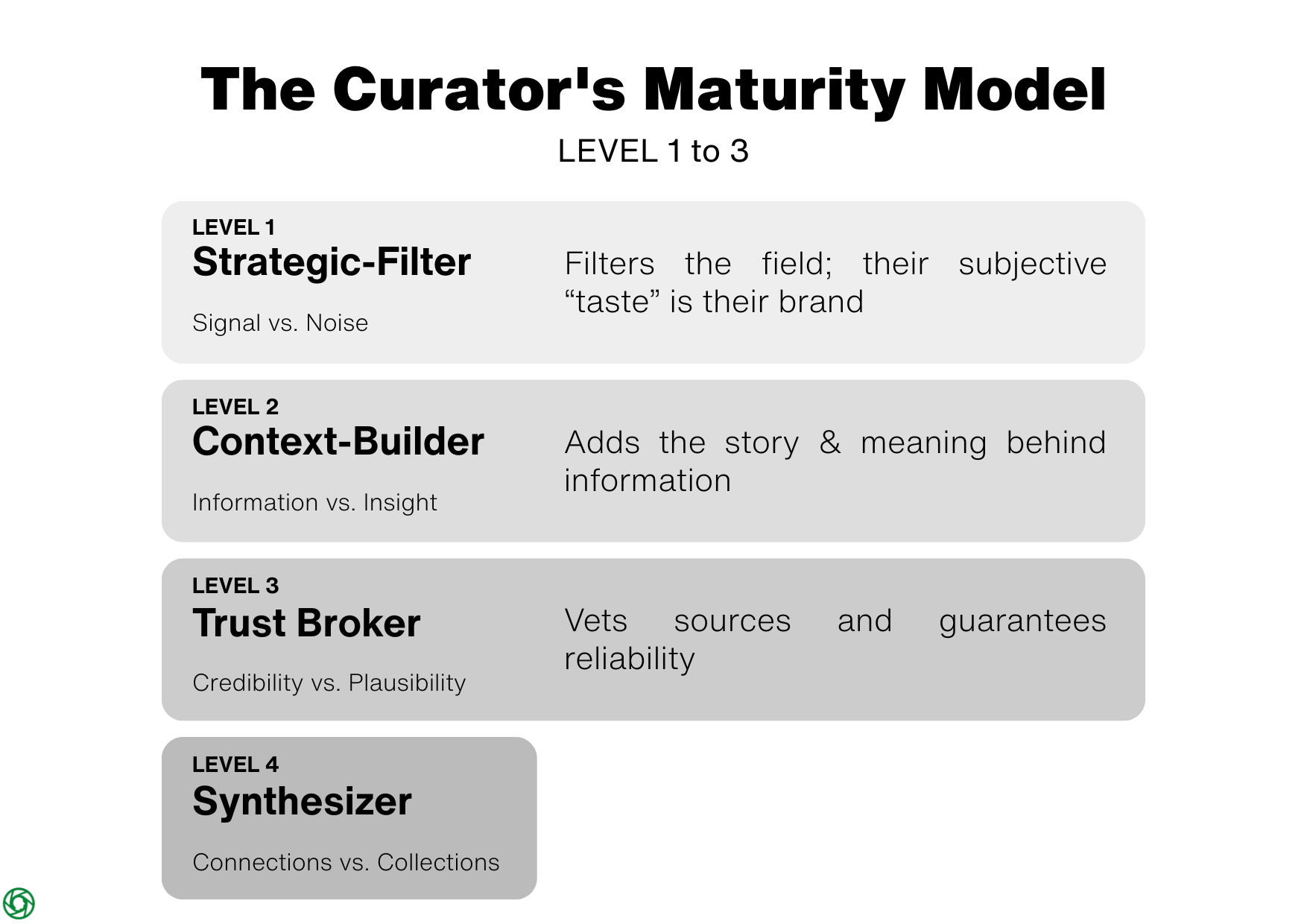

In Part 1 of this series, we tackled 'Signal vs. Noise.' In Part 2, we moved from 'Information to Insight.'

Now, we address the most critical tension for any modern enterprise: building trust.

An insight, no matter how profound, is worthless if it isn't credible. In the age of AI, we must be vigilant in separating what is merely plausible from what is credible. This distinction is the new non-negotiable moat for enterprise value.

The Core Tension: Plausibility (Generic AI) vs. Credibility (Grounded AI)

A fundamental tension exists between two types of Generative AI. This tension is defined by a critical capability: AI Explainability (XAI), or the ability to audit how and why an AI produced a specific result.

- On one side is Generic Generative AI (like general-purpose LLMs and chatbots), which is engineered for plausibility. These models lack explainability, making them "black box" systems. Their novel output is disconnected from any single source of truth, making pre-defined accountability impossible.

- On the other is Explainable, Grounded Generative AI, which is engineered not for mere plausibility but for credibility. Its explainability comes from its process: it first retrieves high-fidelity, auditable data from a trusted, codified source. Only then does it use its generative ability to summarize or act upon that specific, verified data.
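The retrieve-then-generate process described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `Record` type, the `TRUSTED_STORE` contents, the keyword matching, and the function names are all hypothetical, and simple text joining stands in for the generative step.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    source_id: str  # hypothetical ID of an entry in the trusted store
    text: str       # the verified statement itself


# Hypothetical stand-in for a trusted, codified business source.
TRUSTED_STORE = [
    Record("policy-001", "Refunds are issued within 14 days of a return."),
    Record("policy-002", "Enterprise contracts renew annually on 1 January."),
]


def keywords(text: str) -> set[str]:
    """Lowercase tokens longer than three characters (a crude stop-word filter)."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 3}


def retrieve(query: str, store: list[Record]) -> list[Record]:
    """Step 1: fetch only records that share a keyword with the query."""
    wanted = keywords(query)
    return [r for r in store if wanted & keywords(r.text)]


def grounded_answer(query: str, store: list[Record]) -> dict:
    """Step 2: answer strictly from retrieved records and cite them."""
    hits = retrieve(query, store)
    if not hits:
        # No trusted data found: decline instead of producing a plausible guess.
        return {"answer": None, "sources": []}
    # Joining the texts stands in for the generative summarization step.
    return {
        "answer": " ".join(r.text for r in hits),
        "sources": [r.source_id for r in hits],
    }


result = grounded_answer("When are refunds issued?", TRUSTED_STORE)
print(result["answer"], result["sources"])
```

The point of the design is that every answer carries the IDs of the records it was built from, so an auditor can trace the output back to its source, and a query with no matching record yields no answer at all.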

This accountability becomes mission-critical as we move from plausible content to plausible action. With Generic AI, the risk is misinformation – a plausible-sounding lie. With Grounded AI, the risk is more subtle: automated error at scale. An AI grounded in business data is far less likely to invent facts. Instead, it might make a perfectly logical, plausible, and wrong recommendation because it was grounded in a dataset that was factually correct but incomplete. The risk shifts from "hallucination" to "a lack of wisdom."

The curator's role thus becomes the most critical control point in the business: not just to stop the AI from "lying," but to provide the strategic context, judgment, and wisdom that the data itself will never have.

Level 3 – The Trust Broker (Credibility vs. Plausibility)

This distinction – the presence or absence of explainability – is the heart of the curator's role as a Trust Broker.

Accountability for outcomes must always rest with a human, but the nature of that accountability changes:

- For a Generic AI, the curator's job is to be a skeptic, treating all output as a potential hallucination that must be fact-checked from scratch.

- For a Grounded AI, the curator's job is to be an auditor. Their responsibility is to ensure the AI's data sources are correct and that its conclusions (even if plausible) are strategically sound.

If a generic AI hallucinates, it has no 'skin in the game.' If a human curator – even one using a grounded AI – is wrong, their reputation and personal brand are damaged. For high-stakes, strategic decisions, true accountability requires a party that can be held responsible.

Governance frameworks like the EU AI Act and NIST's RMF are built on this non-negotiable principle of human oversight. The EU AI Act, which is binding law rather than voluntary guidance, recognizes that a tool cannot be a liable party, so it places legal obligations on the organization (the 'Provider' or 'Deployer'). That organization, in turn, must rely on a human 'Trust Broker' to be the final, answerable link in the chain.

Some argue that the financial exposure can be mitigated by an emerging market for AI liability insurance. While that market is a necessary development, the argument mistakes financial liability for accountability. Insurance can compensate for a monetary loss; it cannot restore a customer's or the public's lost confidence. Accountability and trust, once broken, are not repaired with a check. This is the non-transferable value of the human curator.

The Ethical Dimension: The Curator's First Responsibility

However, we must also acknowledge the potential 'dark side' of this role. Curation, if unchecked, can lead to gatekeeping. A 'Taste-Maker' can become a tyrant, creating echo chambers that stifle dissenting views and narrow the scope of conversation. This brings a profound ethical responsibility that underpins the entire role, especially that of the Trust Broker.

Curation is not a neutral act. It is a series of choices, and those choices carry real weight. Ethical curation requires sticking to a few golden rules:

Curation Without Attribution is Theft

Always provide clear, prominent credit to the original source. The goal is to send attention and respect to the original creator, not divert it. Never copy and paste an entire article; that isn't curation, it's theft.

Add Value, Don't Just Aggregate

Ethical curation is not a content dump, but an act of "transformative use." You must add your own analysis, commentary, or context. Explain why you are sharing a piece, what it means, and how it connects to other ideas. Your commentary should be more substantial than the excerpt you share.

Your Reputation is the Gate

In an environment where algorithms optimize for clicks and outrage, a human curator has a duty to be a gatekeeper for quality and truth. This means doing basic verification before sharing: check the source's reputation, read beyond the headline, and be wary of outdated information presented as new. Remember, you are stamping a piece of content with your approval, and that approval must be earned.

Own Your Bias

Curators must be vigilant in ensuring their work does not create or amplify harmful biases, whether in the sources they select or the commentary they provide. This requires a conscious effort to seek diverse perspectives and challenge one's own assumptions. Curators are not neutral; they are accountable.

These practices are the antidote to the 'dark side,' ensuring a curator remains a guide, not a gatekeeper:

- Publish your sources: If the inputs are visible, people can challenge the output.

- Show the edges: Explicitly list 'what this view does not cover' (e.g., regions, time period) to prevent over-generalization.

- Add a second lens: For sensitive topics, require a second curator or domain owner to co-sign.
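As a rough sketch, the three practices above could be enforced as a pre-publication check on each curated brief. Everything here is an assumption made for illustration: the `CuratedBrief` type, its field names, and the wording of the messages are hypothetical, not part of any standard.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CuratedBrief:
    title: str
    curator: str
    sources: list[str] = field(default_factory=list)      # "Publish your sources"
    not_covered: list[str] = field(default_factory=list)  # "Show the edges"
    sensitive: bool = False
    co_signer: Optional[str] = None                        # "Add a second lens"

    def problems(self) -> list[str]:
        """Return the reasons this brief is not yet ready to publish."""
        issues = []
        if not self.sources:
            issues.append("no sources published")
        if not self.not_covered:
            issues.append("no scope limits stated")
        # A sensitive brief needs a second person, distinct from the curator.
        if self.sensitive and self.co_signer in (None, self.curator):
            issues.append("sensitive topic needs a second signer")
        return issues


brief = CuratedBrief(
    title="Q3 churn view",
    curator="ana",
    sources=["crm-export-2024"],
    not_covered=["APAC region"],
    sensitive=True,
)
print(brief.problems())
```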

This approach aligns with emerging standards for AI governance and model risk management that emphasize documented controls, human oversight, and organizational accountability.

The Curator's Mandate: Building New Judgment

Finally, an ethical leader-curator must also be a teacher, a guide on the side. We must acknowledge that the 'undifferentiated generation' tasks we now delegate to AI were once the apprentice's proving ground. If we are not careful, we will automate the very on-ramp we used to build our own judgment. The new mandate, therefore, is not just to be a curator, but to build new curators – by actively training our teams in the 'Four Roles,' rotating curatorial responsibility, and making the act of judgment and synthesis a deliberate, transparent, and teachable practice.

Coming in Part 4: We have now established the non-negotiable moat for any enterprise using AI: a human-led framework for credibility. But you can't just be a defender of trust; you must also be a creator of new value. In our final part, we'll explore the apex of the model: moving from Collections to Connections.

Sources:

- European Parliament. (2024). Regulation (EU) 2024/1689... (Artificial Intelligence Act). (Article 14: Human Oversight and Article 26: Obligations of Deployers).

- NIST Special Publication 800-37 Revision 2, "Risk Management Framework for Information Systems and Organizations"

- Hogan Lovells - Insuring AI risks: is your business (already) covered? (2025) - https://www.hoganlovells.com/en/publications/insuring-ai-risks-is-your-business-already-covered