From Data-Informed to Knowledge-Driven

Data-driven is not enough. Data-informed restores judgment but keeps you in the present. Knowledge-driven turns decisions into reusable assets. A simple template and review loop help teams learn faster, cut rework, and scale better choices.

TL;DR

- Problem: Teams call themselves data-driven, but this is often too passive, leading to poor decisions. "Data-informed" is better because it restores human judgment, but it's stuck focusing only on the present.

- Solution: Become knowledge-driven by turning each decision into a reusable asset. This approach focuses on compounding learning for the future, not just making a good decision today.

- What "knowledge" is: A "reusable decision asset" that captures a proven effect, the specific context where it works, its strategic meaning, and a decision record with a revisit date.

- The AI Factor: AI multiplies signals but doesn't provide meaning. A knowledge-driven approach ensures humans remain in control of strategy and the "why" behind decisions.

- How to Do It: Use a simple template to record decisions and follow a six-step loop: Frame, Hypothesize, Test, Interpret, Record, and Revisit. This turns learning into a durable, compounding advantage.

Looking Back: The Limits of Being Data-Driven

In 2020 I argued that data-only decision making is a pitfall. Treating data as the only truth outsources judgment to dashboards and creates passive teams. At best they optimize incrementally. At worst they stop thinking.

In a more recent article, I proposed a better posture: being data-informed.

It rests on a simple thinking loop:

- Start with a question.

- Form a belief.

- Define the stakes.

- Test with data.

Data-informed teams are not waiting for charts to give them answers. They start with intent, and then use data as a sparring partner to test their judgment.

So if data-driven is too passive, and data-informed makes teams more active, why push further?

The move from data-driven to data-informed made us smarter in the moment, but I kept feeling something was missing: were we getting wiser as an organization? We would solve the same problem twice in different divisions. That led me to codify a framework for the next step, becoming knowledge-driven. It's about turning decisions into compounding assets.

Because in 2025, with AI multiplying both signals and noise, being informed is no longer enough. The bar has moved again.

What I mean by Knowledge

Working definition

Knowledge is a reusable decision asset. It pairs a verified causal effect with the specific context where it holds, and it’s captured so the next team can use it without re-learning it.

Think of data as ingredients in your kitchen. Useful, but raw.

Knowledge is the recipe card you wrote after you tested the dish: what worked, why it worked, when it works, when it fails, and how to repeat it. The next cook can pick it up and get the same result. That is how learning compounds.

The parts of knowledge

Keep it simple and explicit:

1. The effect

What changed because of our decision. Proven with a controlled experiment or a solid quasi-experiment. No hand-waving. State the primary metric and the counter-metrics you protected.

2. The context

Where the effect holds and where it does not. Audience, segment, plan size, channel, season, policy or legal constraints, operational readiness. This prevents cargo-cult rollouts.

3. The meaning

Why this matters for long-term behavior and strategy. Are we reinforcing the right habit? Does this align with where we want the product and business to go?

4. The decision record

One short page that captures the above and the action taken. It includes the revisit date to check durability and update the record. This is the unit that makes knowledge reusable across Product, Sales, CS, Legal, Security.
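As a rough illustration of the first part, the effect can be stated as a percentage-point lift on the primary metric, alongside a check that the protected counter-metrics stayed within agreed limits. The Python sketch below assumes hypothetical function names, counts, and tolerance values chosen only for illustration, and it deliberately leaves out the significance testing a real experiment would need.

```python
def lift_pp(control_hits: int, control_n: int, treat_hits: int, treat_n: int) -> float:
    """Primary-metric lift in percentage points (treatment minus control)."""
    return 100.0 * (treat_hits / treat_n - control_hits / control_n)


def breached_guardrails(changes_pct: dict, limits_pct: dict) -> list:
    """Return the counter-metrics whose relative change (%) exceeds its limit."""
    return [name for name, limit in limits_pct.items() if changes_pct[name] > limit]


# Hypothetical numbers, not taken from any real experiment.
lift = lift_pp(control_hits=400, control_n=1000, treat_hits=420, treat_n=1000)
breached = breached_guardrails(
    changes_pct={"support_tickets": 4.0, "churn_30d": 0.0},
    limits_pct={"support_tickets": 5.0, "churn_30d": 1.0},  # assumed tolerances
)
print(f"lift: {lift:+.1f} pp, breached guardrails: {breached}")
```

Agreeing on the limits before the test runs is the design choice that matters here: it keeps "ok" from being decided after the fact.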

Knowledge template

- Goal: What outcome do we want?

- Belief: We think ___ will improve ___ for ___ users/customers.

- Measure: The one metric we care about is ___.

- Protect: These must not get worse: ___, ___.

- How we tested: A/B or before/after. Who was included.

- What happened: The change was ___ (small / medium / big).

- Where it works / doesn’t: Works for ___; doesn’t for ___.

- Decision: Ship / Don’t ship / Ship only to ___.

- Check later: Recheck in ___ weeks. Owner: ___.

Filled example

- Goal: More active accounts by week 4.

- Belief: Showing value earlier in onboarding will help small teams (3+ seats).

- Measure: % of accounts active in week 4.

- Protect: Support tickets, 30-day churn.

- How we tested: A/B test at account level, 50/50 split, run over the last 4 weeks.

- What happened: +2 percentage points. Tickets up 4% (ok), churn unchanged.

- Where it works / doesn’t: Works for 3-50 seats in low-compliance industries. No lift for solo users. In high-compliance, tickets rose too much.

- Decision: Ship to 3+ seat accounts only. Exclude high-compliance. Add CS handoff.

- Check later: Recheck in 8 weeks. Owner: Onboarding PM.
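One way to make a record like this searchable and machine-checkable is to store it as structured data. The Python sketch below mirrors the template fields. The class name, the field names, and the decision date are assumptions made for illustration, not part of the template itself.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class DecisionRecord:
    """Illustrative record; fields mirror the knowledge template."""
    goal: str
    belief: str
    measure: str
    protect: List[str]
    how_tested: str
    what_happened: str
    works_for: str
    fails_for: str
    decision: str
    recheck_weeks: int
    owner: str
    decided_on: date  # assumption: the template does not name a decision date

    @property
    def revisit_date(self) -> date:
        """Date on which the owner should recheck the result."""
        return self.decided_on + timedelta(weeks=self.recheck_weeks)


record = DecisionRecord(
    goal="More active accounts by week 4",
    belief="Showing value earlier in onboarding will help small teams (3+ seats)",
    measure="% of accounts active in week 4",
    protect=["Support tickets", "30-day churn"],
    how_tested="A/B test at account level, 50/50 split",
    what_happened="+2 percentage points; tickets up 4%; churn unchanged",
    works_for="3-50 seats in low-compliance industries",
    fails_for="Solo users; high-compliance industries",
    decision="Ship to 3+ seat accounts only; exclude high-compliance; add CS handoff",
    recheck_weeks=8,
    owner="Onboarding PM",
    decided_on=date(2025, 1, 6),  # hypothetical date
)
print(record.revisit_date)  # 2025-03-03
```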

Quick litmus tests

- If you can’t name the effect, you have analysis, not knowledge.

- If you can’t name the context, you have advice, not knowledge.

- If there is no record with a revisit date, you have a memory, not knowledge.
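These three tests can be read as a simple classification. The sketch below is one possible encoding in Python; the `Candidate` type and the order in which the checks run are assumptions, since the tests themselves do not specify a priority.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Candidate:
    """Hypothetical minimal view of a would-be knowledge item."""
    effect: Optional[str]
    context: Optional[str]
    revisit_date: Optional[date]


def litmus(c: Candidate) -> str:
    """Apply the three litmus tests in order (the order is an assumption)."""
    if not c.effect:
        return "analysis"
    if not c.context:
        return "advice"
    if c.revisit_date is None:
        return "memory"
    return "knowledge"


print(litmus(Candidate("+2 pp week-4 activity", "3-50 seats", None)))  # memory
```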

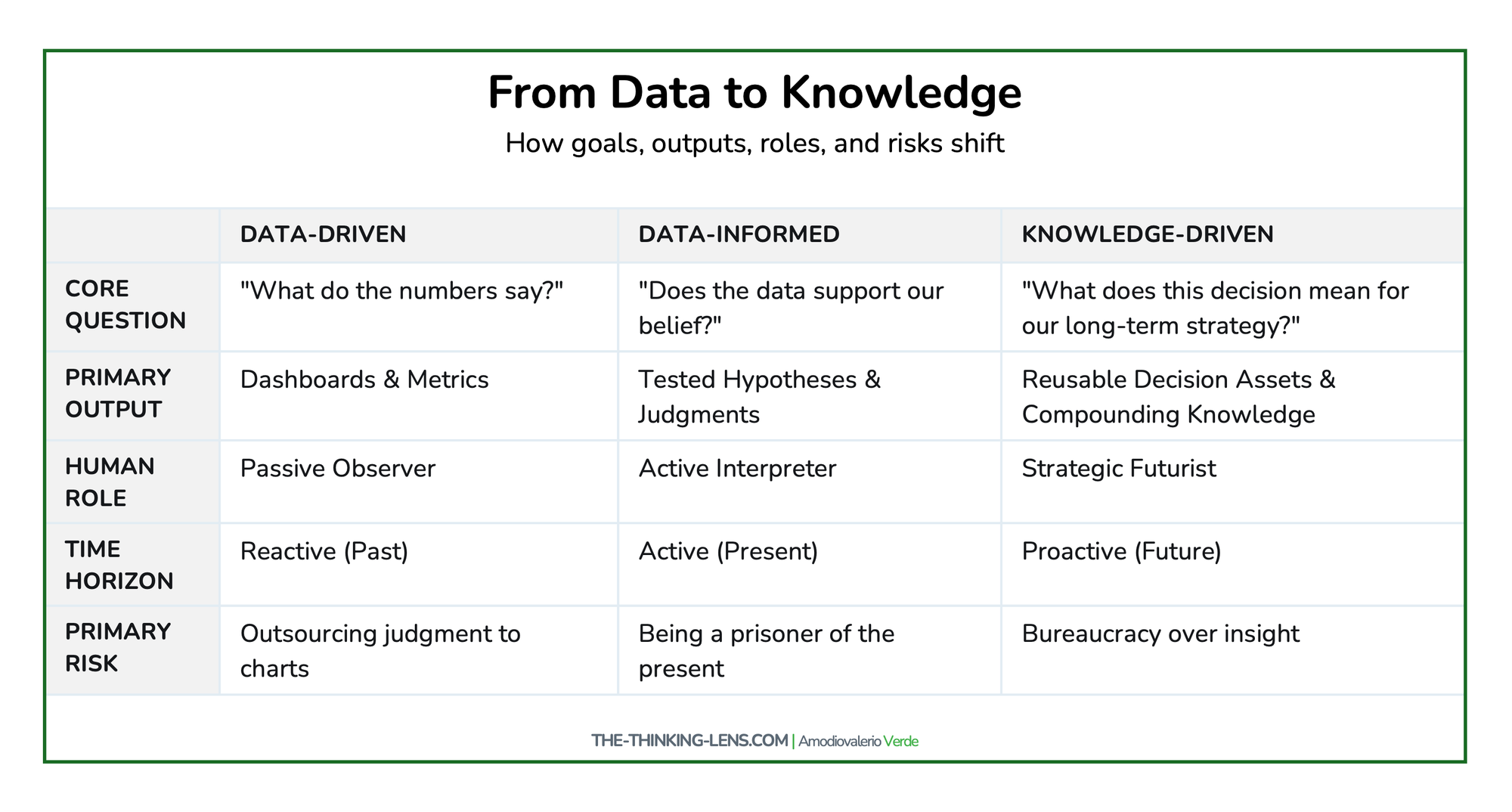

Why Knowledge-Driven is the Next Step

Data-informed focuses on the present: making sound decisions in context, avoiding the trap of blindly following numbers.

Knowledge-driven extends that posture into the future. It is about asking not just “what do the results say now?” but “what do these results mean for long-term behavior, strategy, and values?”

Think of it this way:

- A data-driven team says: “Feature A had 3% higher click-through than Feature B, so we’ll ship A.”

- A data-informed team says: “We believed A would perform better, and the data confirmed it. Good. But we own the judgment, not the chart.”

- A knowledge-driven team says: “Why did A perform better? What long-term behavior does this reinforce? Does it align with our strategy, or are we just chasing vanity clicks?”

Knowledge-driven is the difference between optimization and direction. Between asking what works and asking what matters.

In enterprise SaaS, the right unit of analysis isn’t a single feature. It’s the account, the portfolio, and the cross-product workflow. The move from data-informed to knowledge-driven means we judge decisions by durable customer behavior across the suite and by how well Product, Sales, CS, Security, and Legal make those decisions reusable for the next deal and the next release.

The AI Factor: More Signals, Less Meaning

Generative AI can summarize research, surface patterns, and detect anomalies at scale. With fine-tuning, retrieval, and policy, AI can reflect parts of your context. It still does not set strategy or values.

If teams once outsourced judgment to dashboards, the new temptation is to outsource understanding to machines. That is the risk.

AI provides signals. Humans provide meaning. AI optimizes the what. Humans must anchor the why. The why is where strategy, foresight, and ethics live.

AI guardrails

- Require a human-authored intent and risk section for any AI-assisted analysis.

- Log prompts and outputs used to inform decisions. Treat them as supporting evidence, not the decision itself.

- Establish evaluation checks for AI-produced insights the same way you do for experiments.

- Use retrieval to inject your decision records and domain models into AI tools so context is first-class.
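The first two guardrails can be enforced with very little machinery. The following Python sketch shows one possible shape: a wrapper that stores prompts and outputs as evidence and refuses to count as decision-ready until a human has written the intent and risk sections. The class and method names are hypothetical, and no particular AI tool or API is assumed.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class AIAssistedAnalysis:
    """Hypothetical wrapper around an AI-assisted analysis."""
    intent: str  # human-authored: what we are trying to decide
    risks: str   # human-authored: what could go wrong
    evidence: List[Tuple[str, str]] = field(default_factory=list)

    def log(self, prompt: str, output: str) -> None:
        """Keep the prompt and output as supporting evidence."""
        self.evidence.append((prompt, output))

    def usable_in_decision(self) -> bool:
        """Usable only once a human has written both intent and risks."""
        return bool(self.intent.strip()) and bool(self.risks.strip())


analysis = AIAssistedAnalysis(intent="", risks="")
analysis.log("Summarize onboarding feedback", "Users want value earlier")
print(analysis.usable_in_decision())  # False

analysis.intent = "Decide whether to move the value demo earlier in onboarding"
analysis.risks = "Summary may over-weight vocal accounts"
print(analysis.usable_in_decision())  # True
```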

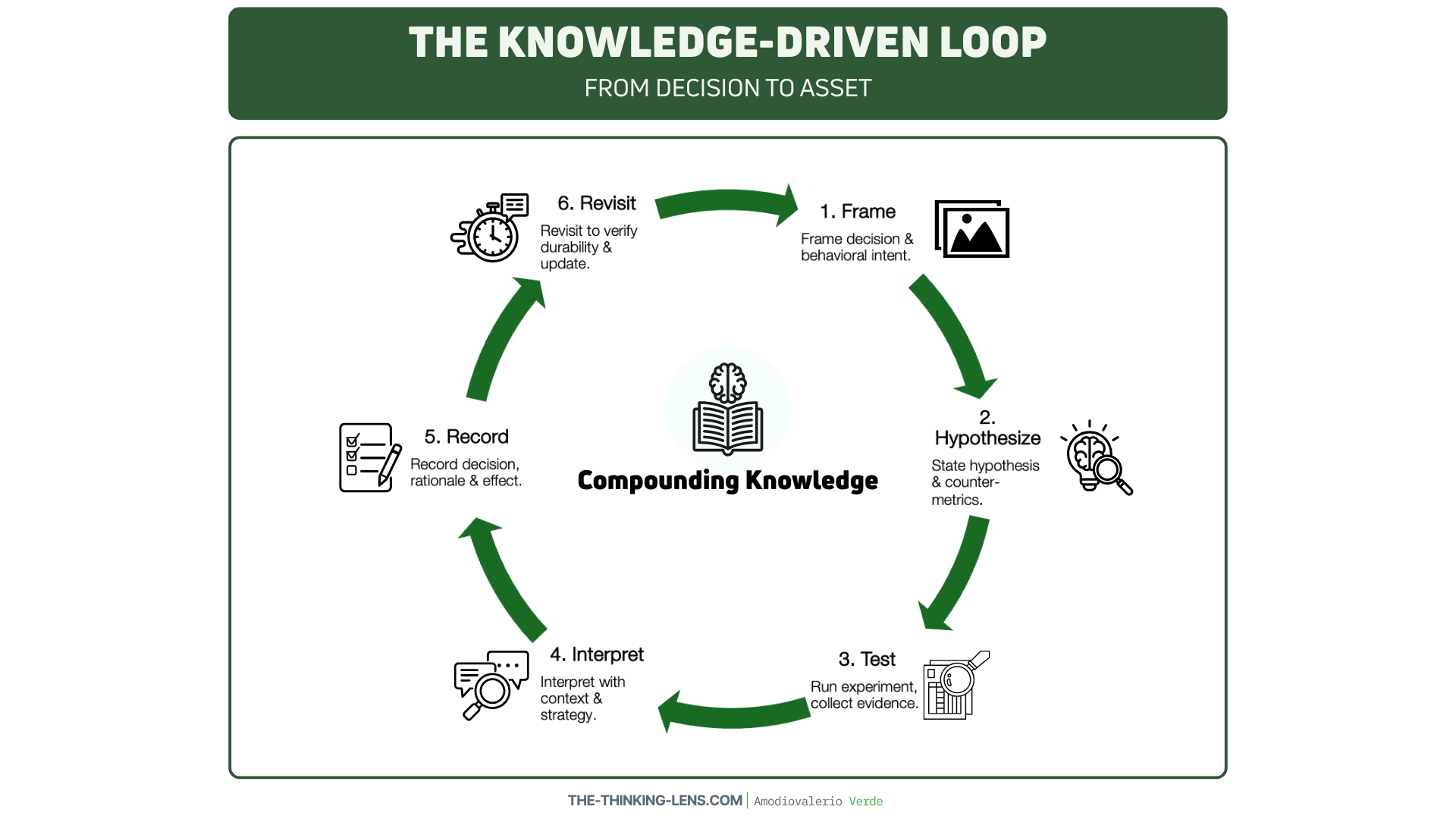

The Knowledge-Driven Loop

Use this loop to turn data into compounding knowledge:

- Frame the decision and the behavioral intent.

- State a falsifiable hypothesis and define counter-metrics.

- Run the experiment or collect evidence.

- Interpret with context: customer narratives, constraints, strategy.

- Record the decision, rationale, and expected long-term behavior.

- Revisit after 4 to 12 weeks to verify durable impact and update the record.

Adopting this loop requires a real commitment: investing time in documentation and creating a culture where revisiting past decisions is seen as a feature, not a bug.
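For teams that track work in tooling, the six steps can be modeled as an ordered set of stages. The Python sketch below is a minimal version; the names `Stage`, `next_stage`, and `valid_revisit_window` are hypothetical, and wrapping from Revisit back to Frame is an assumption about how the loop closes.

```python
from enum import Enum


class Stage(Enum):
    FRAME = 1
    HYPOTHESIZE = 2
    TEST = 3
    INTERPRET = 4
    RECORD = 5
    REVISIT = 6


def next_stage(stage: Stage) -> Stage:
    """Advance one step; after REVISIT the loop restarts at FRAME (an assumption)."""
    return Stage(stage.value % len(Stage) + 1)


def valid_revisit_window(weeks: int) -> bool:
    """The loop suggests revisiting after 4 to 12 weeks."""
    return 4 <= weeks <= 12


stage = Stage.FRAME
for _ in range(6):
    stage = next_stage(stage)
print(stage.name)  # FRAME
```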

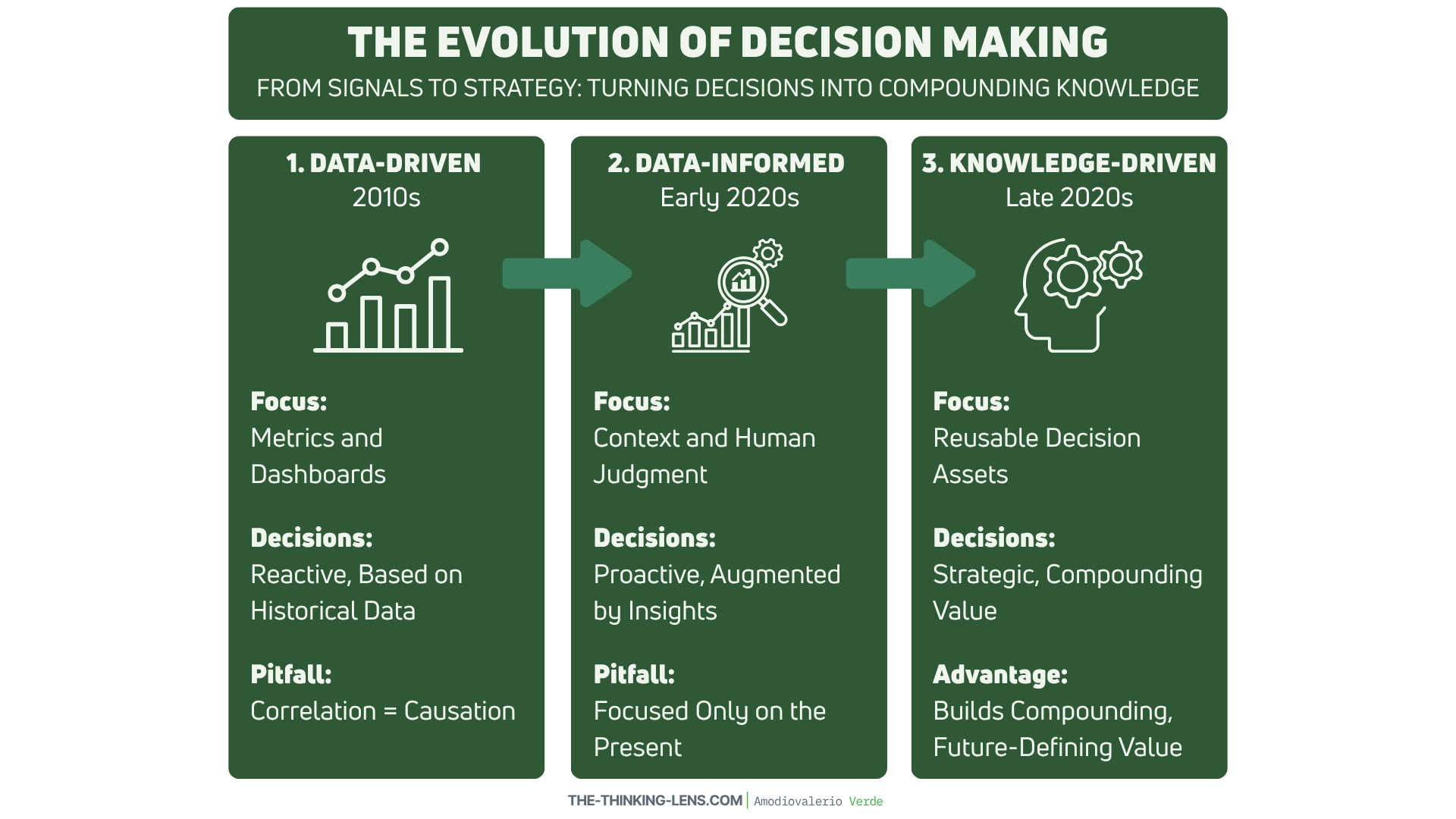

The Evolution of Postures

If I had to frame the last 15 years in three lines:

- 2010s: Data-driven. The obsession with measuring everything.

- Early 2020s: Data-informed. The correction: data supports judgment rather than replacing it.

- Late 2020s: Knowledge-driven. The next evolution: decisions rooted not only in evidence but in foresight and meaning.

Being data-informed prevents you from being a slave to the numbers. Being knowledge-driven ensures you are not a prisoner of the present.

From Currency to Compound Interest

We used to say: data is the new oil. Later: data is the new currency. Both still hold. But data on its own depreciates quickly.

Knowledge is what compounds.

Knowledge is what differentiates.

Knowledge is what endures.

A company with more dashboards has no real advantage. A company that connects signals to meaning, meaning to strategy, and strategy to long-term value creation builds compound interest.

Putting Knowledge to Work: Culture and Tooling

Adopting a knowledge-driven approach is as much a cultural shift as it is a process change. Here’s how to get started:

- Start Small and Be Consistent: Don't try to boil the ocean. Pick one team or one significant project to pilot the decision record template. Consistency is more important than perfection. The goal is to build the habit of recording and revisiting.

- Lead by Example: Leaders must champion this process by asking the right questions in reviews: "What did we learn here that the next team can use?" "Where is the decision record?" "When will we revisit this to check for durability?"

- Choose Simple Tooling: A knowledge repository doesn't need to be a complex system. A shared space in Confluence, Notion, or even a well-organized Google Drive is a perfect starting point. The key is that it's searchable, accessible, and owned. Tag records by product area, team, and key results to make them easily discoverable.

Common Pitfalls and How to Navigate Them

Adopting this model isn't just about process; it's about changing habits. Here are common failure modes and how to overcome them:

- The "Record and Forget" Trap: Teams diligently fill out the decision record, but it ends up in a repository that no one ever consults. The knowledge never becomes reusable.

- Solution: Champion re-use explicitly. In project kickoffs, a required step should be to search the knowledge base for relevant prior decisions. In project retrospectives, ask, "How did a previous decision record accelerate our work here?"

- The "Zombie Record" Problem: The "revisit date" is set but consistently ignored, meaning the durability of the learning is never verified.

- Solution: Automate the follow-up. Integrate the revisit task directly into your team's workflow tool (e.g., Jira, Asana) with an assigned owner. Treat it like any other task in a sprint or project plan.

- The "Bureaucracy" Misconception: The process is perceived as overly burdensome documentation that slows things down.

- Solution: Focus on the "why." Frame it as a "slow down to speed up" investment. Share a concrete example of how 30 minutes spent on a record saved days of redundant work or prevented a costly mistake, demonstrating its value in accelerating future decisions.
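For the "Zombie Record" problem in particular, the follow-up can start as a small scheduled check before any workflow-tool integration exists. The Python sketch below lists records whose revisit date has arrived. The record IDs, the dates, and the function name are hypothetical, and creating the actual task in a tool such as Jira or Asana is left out.

```python
from datetime import date
from typing import Dict, List


def overdue_revisits(revisit_dates: Dict[str, date], today: date) -> List[str]:
    """Return record IDs whose revisit date is today or earlier, oldest first."""
    due = [(d, rid) for rid, d in revisit_dates.items() if d <= today]
    return [rid for d, rid in sorted(due)]


# Hypothetical record IDs and dates.
revisits = {
    "DR-001": date(2025, 3, 3),
    "DR-002": date(2025, 6, 1),
    "DR-003": date(2025, 2, 10),
}
print(overdue_revisits(revisits, today=date(2025, 4, 1)))  # ['DR-003', 'DR-001']
```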

Prerequisites for Success: Is Your Organization Ready?

Adopting a knowledge-driven model is more than a process change; it's a bet on a specific organizational philosophy. The framework rests on a few core assumptions. If these don't hold true for your team, the system can backfire, creating bureaucracy instead of insight. Before scaling, gut-check these prerequisites.

Assumption 1: The Value of Learning Exceeds the Cost of Speed

The model assumes that the long-term advantage of compounding knowledge is worth the short-term cost of slowing down to document and revisit decisions.

- When This Assumption Fails: This trade-off doesn't work if your team operates in a highly volatile environment where speed of iteration is the only thing that matters. This is often true for early-stage startups searching for initial product-market fit or teams in a "wartime" crisis. In these scenarios, learnings become obsolete in weeks, and the context shifts too rapidly for a decision asset to remain relevant.

- What Happens: The process becomes a tax on speed with no future payoff. The knowledge base turns into a museum of irrelevant decisions. In this situation, it's better to stick with a lighter-weight data-informed loop and accept that learnings are temporary until the environment stabilizes.

Assumption 2: Your Key Learnings Can Be Written Down

The framework assumes that the most critical components of a decision — the effect, context, and meaning — can be effectively codified in a document.

- When This Assumption Fails: This breaks down when decisions rely heavily on tacit knowledge — the unspoken intuition and deep experience of a few key experts. For example, the nuanced "feel" a senior negotiator has for a client's motivations or a designer's gut instinct for user delight cannot be fully captured in a template.

- What Happens: Future teams may follow the record's instructions perfectly but fail because they miss the unwritten wisdom. The decision record becomes a "brittle" script, not a robust recipe. To mitigate this, the decision record should be treated as the start of a conversation, not the end of one. It must be paired with mentorship, and the process should encourage consulting the original decision-makers.

Assumption 3: Incentives Can Shift from Output to Outcomes

The entire system is predicated on the idea that leadership can and will shift rewards from pure output ("how many features did you ship?") to durable outcomes and reusable learnings.

- When This Assumption Fails: If your organization's performance reviews, promotion criteria, and bonus structures exclusively reward short-term velocity, this framework is doomed. Teams will correctly identify documentation and revisiting as unrewarded work that slows them down.

- What Happens: You'll get superficial compliance. Teams will fill out the template with the least possible effort, turning it into a "check-the-box" exercise. The knowledge base will be populated with low-quality "zombie records" that nobody trusts or reuses. Before launching this initiative, leaders must first address the incentive structure. If you only celebrate shipping, you will only get shippers, not learners.

Scaling Knowledge: From a Single Team to the Enterprise

Adopting the knowledge-driven loop in a single team is the first step. Scaling it across an organization requires intentional system design.

- Federate, Don't Centralize. Avoid a single, central "knowledge team" that becomes a bottleneck. Instead, empower champions within each product area or department to own their decision records. The central role should be to maintain the system (the templates and tools), not the content.

- Integrate into Existing Workflows. Knowledge creation shouldn't be a separate task. Integrate the decision record into the tools your teams already use. Create a Jira ticket type for "Decision Record" or build a Slack command that pops up the template. The revisit date should automatically create a task in your project management tool assigned to the owner.

- Measure and Reward Reuse. To combat the "record and forget" trap, track how often existing decision records are referenced in new project proposals or kickoff documents. Publicly praise teams that build upon prior knowledge to accelerate their work or avoid a past mistake. This makes the value of the system visible and reinforces the desired culture.
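Tracking reuse does not require special tooling if records carry a stable identifier. The Python sketch below counts how many kickoff or proposal documents cite each record. The `DR-<number>` ID convention, the sample texts, and the function name are assumptions made for illustration.

```python
import re
from collections import Counter
from typing import Iterable

RECORD_ID = re.compile(r"\bDR-\d+\b")  # hypothetical ID convention


def count_reuse(documents: Iterable[str]) -> Counter:
    """Count how many documents cite each decision record (once per document)."""
    counts: Counter = Counter()
    for text in documents:
        counts.update(set(RECORD_ID.findall(text)))
    return counts


# Hypothetical kickoff and proposal texts.
kickoff_docs = [
    "Kickoff: onboarding v2. Builds on DR-001 and DR-003. See DR-001 for context.",
    "Proposal: pricing page test. Prior work: DR-003.",
]
reuse = count_reuse(kickoff_docs)
print(sorted(reuse.items()))  # [('DR-001', 1), ('DR-003', 2)]
```

Counting each record once per document keeps a single proposal that mentions the same record repeatedly from inflating the figure.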

The Leadership Call to Action

If you lead teams today, ask yourself:

- Are we just optimizing metrics, or are we aligning them with long-term outcomes?

- Do we use AI to accelerate our clarity, or to replace our thinking?

- Are we treating data as a signal, or as the final word?

Because the companies that thrive in the AI era will not be those with the largest datasets or fanciest models. They will be the ones that connect data to knowledge, and knowledge to direction.

Closing Thought

Dashboards track history.

Judgment shapes the present.

Knowledge defines the future.

The last decade rewarded companies that could measure faster. The next decade will reward those that can interpret better.

Because in the end, the real advantage isn’t having the most data. It’s knowing what matters, why it matters, and how to act on it before everyone else does.

PAQs (Probably Asked Questions)

Does knowledge-driven contradict your article on being data-informed?

No. Knowledge-driven builds on data-informed. Data-informed ensures you ask the right questions and use data to test your judgment. Knowledge-driven ensures you connect those answers to long-term strategy. Think of it as a ladder:

- Step 1: Stop being data-driven (too passive).

- Step 2: Become data-informed (intentional, rigorous).

- Step 3: Evolve to knowledge-driven (strategic, future-oriented).

Isn’t “knowledge-driven” just another buzzword?

Fair point. Words are cheap if not tied to culture. That’s why the distinction matters: data is just information. Knowledge is information interpreted with context, experience, and foresight. Without that, all you have are prettier dashboards.

Could AI eventually create “knowledge” too?

Yes, AI will approximate knowledge more and more. But AI does not set strategy or values. AI can support causal inference and hypothesis generation, but intent, values, and accountability remain human.

Isn’t being data-informed enough?

In 2020, yes. In 2025, no. Being informed makes you rigorous in the present. But competitive advantage will come from foresight. If your team only optimizes the now, you’ll miss the shifts shaping the future.

What’s the smallest step I can take to make my team more knowledge-driven?

At your next A/B test or experiment, don’t stop at “what worked.” Ask: “What behavior did this reinforce? What does it mean for our long-term strategy?” That simple question forces a shift from metrics to meaning.

This sounds like a lot of documentation. How do we implement this without slowing down?

It's a classic "slow down to speed up" scenario. The initial effort of creating a decision record takes maybe 30 minutes, but it can save days or weeks of re-work and re-learning down the line. The goal isn't bureaucracy; it's to prevent "organizational amnesia." By making learning reusable, you accelerate future decisions across the entire company.

How does this apply to teams that don't run A/B tests, like Sales or Legal?

The principle is universal, even if the "test" isn't a controlled experiment. A sales team can record why a certain negotiation tactic worked for a specific customer segment (the effect and context). A legal team can document the rationale behind a policy change and set a date to revisit its impact on business friction. Every team makes decisions with lasting effects, and every team benefits from making that learning explicit and reusable.

How do you measure the ROI of becoming knowledge-driven?

While direct financial ROI is tricky to quantify, you can track powerful proxy metrics:

- Reduced Redundancy: Track the number of times similar experiments are proposed. A successful knowledge base should reduce this.

- Decision Velocity: Measure the time it takes for teams to move from question to a confident, evidence-backed decision.

- Onboarding Speed: New hires can become effective faster by studying a repository of key decisions and their rationale.
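Decision velocity is the easiest of these proxies to compute once records carry two dates. The Python sketch below takes the median number of days from question to decision. The dates are hypothetical, and using the median rather than the mean is a choice made here so that one slow decision does not dominate.

```python
from datetime import date
from statistics import median
from typing import List, Tuple


def decision_velocity_days(decisions: List[Tuple[date, date]]) -> float:
    """Median days from question raised to decision recorded."""
    return median((decided - asked).days for asked, decided in decisions)


# Hypothetical (question raised, decision recorded) pairs.
history = [
    (date(2025, 1, 6), date(2025, 1, 20)),  # 14 days
    (date(2025, 2, 3), date(2025, 2, 10)),  # 7 days
    (date(2025, 3, 3), date(2025, 3, 13)),  # 10 days
]
print(decision_velocity_days(history))  # 10
```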

What's the difference between this and just having a good company wiki?

A wiki is a tool; a knowledge-driven approach is a system. A wiki can easily become a "document graveyard." This system is different because it uses a structured template, focuses on causal effects and context, and has a built-in process for revisiting and verification. It turns passive documentation into an active, compounding asset.

How do you handle documenting decisions that were wrong or inconclusive?

They are just as valuable! A record of a failed experiment or a disproven belief is critical knowledge. It prevents future teams from wasting time on the same dead end. Documenting why it didn't work and what you learned is a key part of building a learning organization. The goal isn't to be right every time; it's to learn every time.

How do you incentivize teams to adopt this, especially when they're measured on shipping speed?

This is a crucial leadership challenge. The key is to reframe the incentive structure. First, celebrate "smart failures" where a well-documented, failed experiment prevents future wasted effort. Second, make "search the knowledge base" a required step in any project kickoff; this immediately demonstrates the time-saving value. Over time, leaders must shift from rewarding pure output ("how many features did you ship?") to rewarding durable outcomes and reusable learnings ("how did this project make the next project faster or smarter?").

Who is responsible for maintaining the knowledge base and ensuring records are high-quality and not contradictory?

Ownership should be federated. The decision owner (e.g., the Product Manager) is responsible for the quality and accuracy of their specific record, including updating it after the revisit date. A domain lead (e.g., Director of Product for a specific area) should be responsible for periodically reviewing records in their domain for thematic coherence. This avoids creating a bureaucratic "knowledge police" force while ensuring the repository remains a trusted, high-signal resource.