Beyond the Dashboard | Principle 9: Reconcile Metric Definitions Before Analysis

Teams don’t argue about numbers; they argue about definitions. Inconsistent metrics like MAU erode trust, stall decisions, and mislead AI at scale. Fix it upstream: build a Metric Dictionary with clear names, sources, formulas, and owners. One name, one definition.

TL;DR (for those whose last meeting was a debate about definitions)

- If teams argue about numbers, it’s usually not math they’re debating but definitions. Vague definitions aren't a data issue; they're a systems failure.

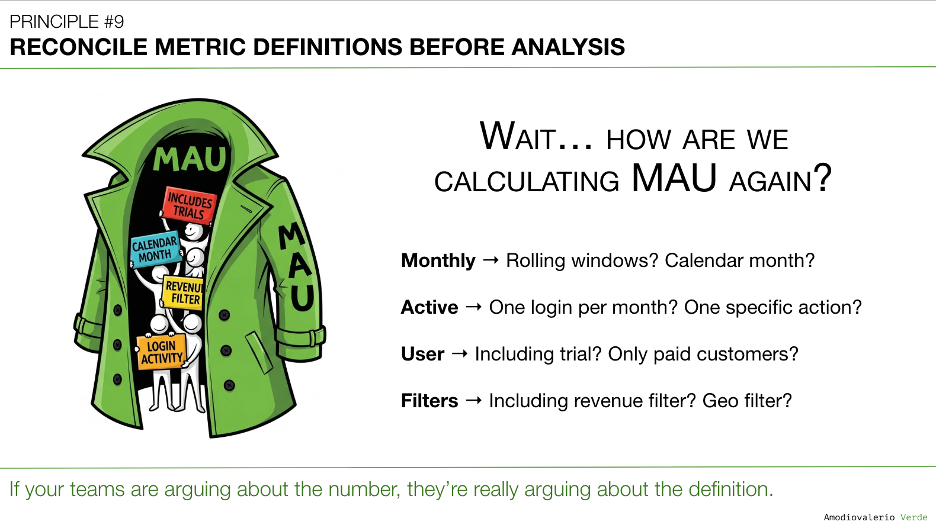

- Inconsistent metric definitions (like “Active User”) create conflicting truths, erode trust, and stall decisions. Your MAU might be four metrics in a trench coat pretending to be one.

- AI usually makes it worse, unless you’ve already solved the definition problem upstream. Feed it inconsistent data and you’ll get confident-sounding nonsense at scale.

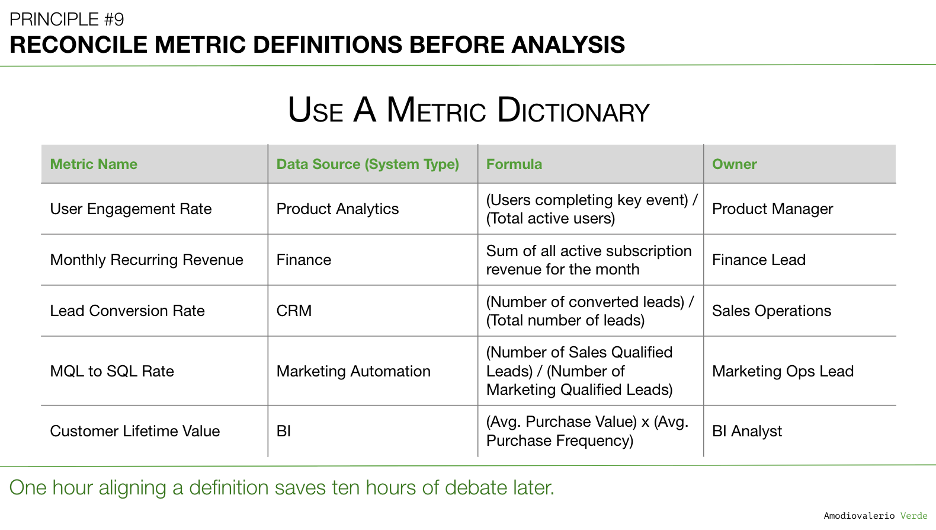

- What you need is a Metric Dictionary, one source of truth with each metric's name, source, formula, and owner.

A Quick Recap: The House of Cards

In Principle 8: Manage Multi-Product Portfolios Separately, we dismantled the dangerous myth of the blended portfolio dashboard. We established that treating each product as its own distinct system, with its own vitals and success metrics, is the only way to avoid the strategic blindness caused by "Franken-metrics". By managing products separately, we gain the clarity to see which are thriving, which are struggling, and where to invest with precision.

But even with perfectly separated, layered dashboards for each product, the entire structure can collapse like a house of cards if it's built on a cracked foundation. That foundation is language itself. What happens when the words we use to describe our metrics mean different things to different people?

That brings us to the most common, most expensive, and most underestimated failure point in any data-informed system: the failure to define what our numbers actually mean.

The Most Expensive Meeting in Your Company

You’ve been in this meeting. I guarantee it.

A slide goes up. It shows that Monthly Active Users (MAU) dropped by 5%. The air in the room instantly changes. Half the stakeholders lean forward in panic, seeing a leading indicator of churn. The other half lean back, shrugging, assuming it’s a seasonal dip or a data-quality issue.

The debate begins. But it’s not about strategy. It’s not about customers. It’s not about what to do next. Then, inevitably, someone asks the question that grinds the entire conversation to a halt: “Wait… how are we defining ‘Active User’ again?”

Silence.

That silence is the sound of one of your organization’s most expensive habits. It’s not the meeting with the highest-paid people that costs the most; it’s the one where smart, well-intentioned people waste an hour arguing about the meaning of a number because it was never defined.

What follows is a painfully familiar scene.

- The Product team defines an "active user" as anyone who logged in.

- The Finance team’s report, used for revenue forecasting, only counts users tied to a paid subscription.

- The Growth team uses a rolling 28-day window and includes free trial users to measure top-of-funnel conversion.

- And the Marketing team’s dashboard, tracking campaign effectiveness, uses calendar months and filters for users from specific acquisition channels.

Your "Monthly Active User" might actually be four different metrics in a trench coat pretending to be one.
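To make the trench coat concrete, here is a minimal sketch of the four definitions above applied to the same login data. The event log, field names, and function names are all hypothetical, and the Product definition is assumed to use a calendar month because the text does not specify its window.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Login:
    user_id: str
    day: date
    plan: str      # "paid", "trial", or "free" (hypothetical labels)
    channel: str   # acquisition channel (hypothetical labels)


# Hypothetical event log, invented purely for illustration.
EVENTS = [
    Login("u1", date(2024, 3, 2), "paid", "organic"),
    Login("u2", date(2024, 3, 10), "trial", "paid_search"),
    Login("u3", date(2024, 3, 29), "free", "email"),
    Login("u4", date(2024, 2, 27), "paid", "paid_search"),
    Login("u5", date(2024, 3, 15), "paid", "organic"),
]


def _in_month(event, year, month):
    return event.day.year == year and event.day.month == month


def mau_product(events, year, month):
    # Product: anyone who logged in (calendar month assumed).
    return {e.user_id for e in events if _in_month(e, year, month)}


def mau_finance(events, year, month):
    # Finance: only users tied to a paid subscription.
    return {e.user_id for e in events
            if _in_month(e, year, month) and e.plan == "paid"}


def mau_growth(events, as_of):
    # Growth: rolling 28-day window ending on as_of, trial users included.
    start = as_of - timedelta(days=27)
    return {e.user_id for e in events if start <= e.day <= as_of}


def mau_marketing(events, year, month, channels):
    # Marketing: calendar month, filtered to specific acquisition channels.
    return {e.user_id for e in events
            if _in_month(e, year, month) and e.channel in channels}


if __name__ == "__main__":
    print("Product:  ", len(mau_product(EVENTS, 2024, 3)))
    print("Finance:  ", len(mau_finance(EVENTS, 2024, 3)))
    print("Growth:   ", len(mau_growth(EVENTS, date(2024, 3, 31))))
    print("Marketing:", len(mau_marketing(EVENTS, 2024, 3, {"paid_search"})))
```

On this toy data, the same month yields 4, 2, 3, and 1 "active users" respectively. Nobody made a math error; each team simply answered a different question under the same name.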

Additional Metric Examples

This isn't an isolated problem with user metrics. It appears everywhere a core business concept can be measured in more than one way.

Take churn, for example:

- Finance tracks Logo Churn: the percentage of customer accounts that terminate contracts in a given period, a clear commercial event.

- Product tracks User Churn: the percentage of individual users who stop logging in or performing key actions, even if the account remains active, an early warning of value loss.

- Customer Success tracks Net Revenue Churn: recurring revenue lost after downgrades, cancellations, and expansions are factored in.

None of these definitions is “wrong,” but together they measure different realities. Without explicit, agreed-upon definitions, they don’t create clarity; they create chaos and erode trust.
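A small sketch shows how far apart these three "churn" numbers can land in the same period. The input figures are invented, the function names are hypothetical, and the net revenue churn formula used here (lost recurring revenue minus expansion, divided by starting recurring revenue) is one common convention, not necessarily the one your Customer Success team uses.

```python
def logo_churn(accounts_at_start, accounts_cancelled):
    # Share of customer accounts that terminated contracts in the period.
    return accounts_cancelled / accounts_at_start


def user_churn(active_users_at_start, users_gone_inactive):
    # Share of individual users who stopped logging in or doing key actions.
    return users_gone_inactive / active_users_at_start


def net_revenue_churn(mrr_at_start, mrr_cancelled, mrr_downgraded, mrr_expanded):
    # Recurring revenue lost to cancellations and downgrades, net of expansion.
    # A negative result means expansion outweighed the losses.
    return (mrr_cancelled + mrr_downgraded - mrr_expanded) / mrr_at_start


if __name__ == "__main__":
    # Hypothetical quarter, invented for illustration.
    print(f"Logo churn:        {logo_churn(200, 4):.1%}")
    print(f"User churn:        {user_churn(5000, 600):.1%}")
    print(f"Net revenue churn: {net_revenue_churn(100_000, 2_000, 1_500, 5_000):.1%}")
```

With these made-up inputs, one quarter reports churn of 2.0%, 12.0%, and -1.5% at the same time. A slide that just says "churn" could be pointing at any of them.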

You can’t build strategy on a number that means different things to different teams. This isn’t a data problem, but a leadership problem disguised as a spreadsheet debate.

The Risk: AI as a Chaos Multiplier

If your data system is filled with inconsistent language and vague definitions, AI won’t fix it. It will multiply your confusion with terrifying efficiency. You'll get:

- Confident but Flawed Insights: Ask AI to analyze declining user activity, and it may pick one of several conflicting definitions, producing a polished but misleading analysis.

- Automated Misdirection: It might optimize churn prediction for the wrong user segment or build a recommendation engine on a flawed notion of “engagement,” steering users away from real value.

- Accelerated Erosion of Trust: Teams present AI-generated reports, only to have the same definitional conflicts surface, now wrapped in opaque, machine-generated complexity. People stop trusting both the numbers and the “black box” behind them.

The rise of AI and automated analytics has amplified the cost of vague definitions. What used to be an hour of executive debate is now entire strategies executed on flawed assumptions at machine speed. As organizations accelerate their use of AI-powered dashboards and decision systems, the margin for definitional error has collapsed.

The question isn’t if a Metric Dictionary is necessary. It’s whether you can afford to operate without one.

The Opportunity: AI as a Guardian of Clarity

However, once you’ve done the hard work of building a Metric Dictionary, AI can transform from a liability into your most powerful ally for enforcing consistency at scale. Instead of just amplifying chaos, it can become a guardian of your shared language. Here’s how:

- Automated Auditing and Governance: AI agents scan dashboards, reports, and documents to flag any metric use that doesn’t match the official definition. This turns governance from reactive to proactive.

- Contextual Intelligence in Reports: AI-generated summaries or dashboards can automatically include official definitions as footnotes or tooltips, ensuring context always travels with the data.

- Accelerated Onboarding: New hires can query an AI assistant trained on the Metric Dictionary for instant, precise answers (e.g., how Customer Lifetime Value is calculated, including formula and owner).

AI is a judgment multiplier. With consistent definitions, it multiplies clarity, helping scale a data-informed culture quickly and reliably.

The Strategic Price of Imprecision

Ignoring metric definitions isn't a minor oversight; it's a quiet poison that slowly paralyzes your organization's ability to learn and act.

- Decision Gridlock: Strategic conversations stall as debates over sourcing and calculation replace actual decisions.

- Eroded Trust: When Product, Finance, and Sales bring different numbers for the same metric, cross-functional trust collapses. Data becomes a political weapon instead of a shared resource.

- No Accountability: Teams can’t be held accountable for moving a metric if no one agrees on what it means. Vague definitions create loopholes and excuses when targets are missed.

- Strategy on Sand: Forecasts and budgets rely on clear metrics. If those metrics are ambiguous, every plan rests on guesswork.

The real failure is allowing a preventable problem, imprecise language, to undermine your most critical business processes.

The Solution: A Metric Dictionary, the Operating System for Clarity

You don’t fix this with more meetings, better dashboards, or a new analytics tool. You fix it by treating your metric definitions with the same rigor you apply to your code. You build a Metric Dictionary.

At the start, creating a Metric Dictionary will feel like effort and bureaucracy. But once built, it stops being overhead and becomes infrastructure: a single, non‑negotiable source of truth that saves thousands of hours of debate. This dictionary becomes the official record for how you measure success.

Here’s how it fits:

- Metrics are raw measures of performance.

- KPIs are the critical subset used to track business health.

- OKRs are strategic goals often tied to one or more KPIs.

The Metric Dictionary underpins them all, ensuring every metric behind your KPIs and OKRs has a clear, unambiguous definition.

What to Put in a Metric Dictionary and How to Build One

So, what goes into a robust Metric Dictionary? For every key metric that matters to your business, the dictionary must document four simple but non-negotiable things:

- Precise Name: Not a vague term like "Engagement," but something unambiguous like "Weekly Core Action Completion Rate". The name itself should communicate the meaning.

- The Owner: The single authority accountable for accuracy and evolution, often a functional leader (e.g., VP Product) or Product Operations. A metric without an owner is an orphan, and no one will trust it.

- The Data Source: Where does the raw data come from? Which system is the record of truth? (e.g., BI warehouse, analytics tool, CRM). Be explicit.

- The Formula: The exact calculation, written with software‑level precision. Include all inclusions, exclusions, and time windows. Example: A user who completes at least one core action (X, Y, or Z) in a rolling 7‑day period. Excludes internal users and suspended accounts.
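As a rough sketch of what "software-level precision" can look like, the four fields fit in a small record, and the example formula above can be written as a function. Everything here is an assumption for illustration: the class and function names, the metric name "Weekly Core Action Users", the owner and source values, and the shape of the input data.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class MetricDefinition:
    name: str      # precise, unambiguous name
    owner: str     # single accountable owner
    source: str    # system of record for the raw data
    formula: str   # exact calculation, in words


# Hypothetical dictionary entry based on the example formula in the text.
WEEKLY_CORE_ACTION_USERS = MetricDefinition(
    name="Weekly Core Action Users",
    owner="VP Product",
    source="BI warehouse",
    formula=(
        "Users who complete at least one core action (X, Y, or Z) in a "
        "rolling 7-day period. Excludes internal users and suspended accounts."
    ),
)

CORE_ACTIONS = {"X", "Y", "Z"}


def weekly_core_action_users(actions, as_of, internal_users, suspended_users):
    """Return the set of qualifying user ids.

    actions: iterable of (user_id, action_name, day) tuples.
    The window is the 7 days ending on as_of, inclusive.
    """
    start = as_of - timedelta(days=6)
    excluded = set(internal_users) | set(suspended_users)
    return {
        user_id
        for user_id, action, day in actions
        if action in CORE_ACTIONS
        and start <= day <= as_of
        and user_id not in excluded
    }


# Hypothetical action log, invented for illustration.
SAMPLE_ACTIONS = [
    ("u1", "X", date(2024, 3, 30)),      # counts
    ("u2", "W", date(2024, 3, 30)),      # not a core action
    ("u3", "Y", date(2024, 3, 20)),      # outside the 7-day window
    ("staff1", "Z", date(2024, 3, 29)),  # internal user
    ("u4", "Z", date(2024, 3, 25)),      # counts (first day of the window)
    ("u5", "X", date(2024, 3, 28)),      # suspended account
]

if __name__ == "__main__":
    result = weekly_core_action_users(
        SAMPLE_ACTIONS, date(2024, 3, 31), {"staff1"}, {"u5"}
    )
    print(sorted(result))
```

Writing the formula this way forces the awkward questions early: is the window inclusive, which actions count, and who is excluded. Those are exactly the details that otherwise surface mid-meeting.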

One Name, One Definition

The goal isn’t to force a single definition for every concept. Different teams may need different versions of the same metric, and that’s fine. The rule is simple: one name, one definition.

Multiple definitions can and should exist when needed, but they must each carry a unique, explicit label. The risk isn’t variety; it’s ambiguity.

For example, Finance and Product may both track active users. Instead of both calling it “MAU,” define:

- MAU - Financial (Paying Accounts)

- MAU - Engagement (Core Action Users)

Each has its own clear definition in the dictionary. This eliminates ambiguity while letting teams measure what matters to them.
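The rule is simple enough to enforce mechanically. Below is a minimal sketch of a registry that accepts any number of variants but refuses to let one name carry two definitions. The class name, its methods, and the definition wording are hypothetical, and name matching is assumed to ignore case and surrounding whitespace.

```python
class MetricDictionary:
    """Toy registry enforcing one name, one definition."""

    def __init__(self):
        self._entries = {}

    @staticmethod
    def _key(name):
        # Assumption: names are compared case-insensitively.
        return name.strip().casefold()

    def register(self, name, definition):
        key = self._key(name)
        if key in self._entries:
            raise ValueError(
                f"'{name}' is already defined; give the new variant its own name."
            )
        self._entries[key] = definition

    def lookup(self, name):
        return self._entries[self._key(name)]


if __name__ == "__main__":
    metrics = MetricDictionary()
    metrics.register(
        "MAU - Financial (Paying Accounts)",
        "Users tied to a paid subscription who were active in the month.",
    )
    metrics.register(
        "MAU - Engagement (Core Action Users)",
        "Users who completed at least one core action in the month.",
    )
    try:
        # A second, conflicting definition under an existing name is rejected.
        metrics.register("MAU - Financial (Paying Accounts)", "Anyone who logged in.")
    except ValueError as err:
        print("Rejected:", err)
```

The design choice mirrors the text: variety is allowed, so two MAU variants register without complaint, while ambiguity is not, so reusing a name fails loudly.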

Make the dictionary accessible company‑wide and link it directly from dashboards. Any new metric must be added to the dictionary before it’s used at a strategic level.

A Metric Dictionary Is a Foundation, Not a Silver Bullet

A Metric Dictionary is the starting point, not the finish line. It must be paired with:

- Cultural adoption so teams actually use it instead of reverting to their own definitions.

- Governance and maintenance so it stays current as products, markets, and strategies evolve.

- Executive sponsorship to resolve disputes and give the dictionary real authority.

Only with these in place does the dictionary move from a static document to a living operating system for clarity. This is a leadership responsibility. Without visible executive sponsorship, a Metric Dictionary will wither. Leaders must model its use in meetings, challenge reports that use undefined metrics, and enforce that decisions are based only on reconciled definitions. Clarity in metrics is not a technical initiative; it is an act of leadership.

How to Get Started

If you’re building a Metric Dictionary from scratch, keep it simple:

- Identify your top 10 crown jewel metrics used in board decks, executive reviews, or funding conversations.

- Assign a single accountable owner for each.

- Define the precise name, source, and formula.

- Publish in a visible, shared space (e.g., Confluence, Wiki, Notion, or your BI tool).

- Make it a living document. Revisit definitions quarterly as your strategy evolves.

The Politics of Maintenance: A Dictionary Is a Garden, Not a Stone Tablet

A Metric Dictionary is not a one‑and‑done project. Creating it is only the first step. The real work is keeping it alive and relevant. Without stewardship, it becomes a well‑organized museum of forgotten arguments. Think of it as a garden: it requires constant care.

- Weeding: Rogue metrics will sprout in unaudited dashboards and siloed reports. Governance, manual or AI‑assisted, means finding and removing these before they choke out the shared language.

- Pruning: As strategy evolves, some metrics lose relevance. A clear process to deprecate and archive them prevents the dictionary from becoming a graveyard of forgotten KPIs.

- Sunsetting: Outdated KPIs left in dashboards create confusion and erode trust. Retire them with a defined process: identify, explain the rationale, document the history, and remove them from live use.
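The weeding step in particular lends itself to a simple automated check. This is a deliberately plain, rule-based sketch (no AI involved) that flags dashboard labels missing from the dictionary. The function name, dashboard names, and labels are hypothetical, and it assumes you can export the metric labels used on each dashboard.

```python
def find_rogue_metrics(dashboards, dictionary_names):
    """Return {dashboard: [labels not found in the dictionary]}.

    dashboards: mapping of dashboard name to the metric labels it displays.
    dictionary_names: iterable of official metric names.
    """
    official = {name.casefold() for name in dictionary_names}
    rogue = {}
    for dashboard, labels in dashboards.items():
        unknown = sorted(
            label for label in labels if label.casefold() not in official
        )
        if unknown:
            rogue[dashboard] = unknown
    return rogue


# Hypothetical inputs, invented for illustration.
OFFICIAL_NAMES = [
    "MAU - Financial (Paying Accounts)",
    "MAU - Engagement (Core Action Users)",
]

DASHBOARDS = {
    "Exec Overview": ["MAU - Financial (Paying Accounts)", "MAU"],
    "Growth Weekly": ["MAU - Engagement (Core Action Users)"],
    "Campaigns": ["Engagement", "MAU"],
}

if __name__ == "__main__":
    for dashboard, labels in find_rogue_metrics(DASHBOARDS, OFFICIAL_NAMES).items():
        print(f"{dashboard}: {', '.join(labels)}")
```

A check like this only catches unregistered names. It cannot tell whether a correctly named metric is computed according to its official formula, which is why human stewardship still matters.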

Stewardship is not administration; it is strategic negotiation. When leaders disagree on how a definition (e.g., MQL to SQL rate) should evolve, a cross‑functional council of leaders from Product, Data, Finance, and Marketing is the forum. If it can’t resolve the issue, escalate to the senior leader with authority over both sides. That decision is final, giving the dictionary real authority.

No definition can change in a silo. Proposals must go through the council, be debated for impact, and be formally ratified and communicated. The Metric Dictionary is your company’s living constitution for shared language, and it only works with vigilant, ongoing care.

Final Thought

This is one of my favorite principles because I’ve seen its impact firsthand. I’ve spent countless hours aligning on definitions, not out of pickiness, but to create clarity and shared understanding. Agreement isn’t always required, but shared definitions are.

Your dashboards, analytics tools, and AI models are useless if the words defining success are built on sand. Inconsistent definitions tax your organization’s time, trust, and focus.

This isn’t a data science problem; it’s a leadership challenge. It takes discipline to pause, humility to admit your language is messy, and the will to do the unglamorous work of building a shared vocabulary.

Stop letting teams fight about numbers. They’re fighting about definitions. Fix them upstream, and you’ll unlock the clarity needed to stop debating and start deciding.

Without a Metric Dictionary, even the most polished dashboard can shift from decision tool to debate starter.

What’s Next?

Once you have established a common language through a reconciled Metric Dictionary, you have the foundational building blocks for a truly intelligent system. But clear definitions are only the starting point. How do you assemble them into a structure that doesn't just report what happened, but actively helps your teams reason about what to do next?

That brings us to our next principle, where we transition from building reports to building intelligence. Get ready for Principle 10: Build Thinking Systems, Not Reporting Systems.

PAQs – Potentially Asked Questions

A metric dictionary sounds like a lot of bureaucratic overhead, especially for a fast-moving startup. Is it really necessary?

Yes. The goal isn’t paperwork but eliminating wasted time. One hour defining a key metric saves dozens of hours of debate later. For a startup, establishing this discipline early is far cheaper and easier than trying to fix a chaotic data culture after you've scaled to 100 people and have five different definitions for "customer".

Who should own the Metric Dictionary? Product? Data? Finance?

Ownership must be cross‑functional. The best model is a small council with leaders from Product, Data, Finance, and Marketing. Product Ops often facilitates, but each metric needs a single accountable owner who can resolve future debates.

What happens when teams can't agree on a single definition?

Often, the fix is creating two clearly named metrics instead of forcing one (e.g., MAU-Financial and MAU-Engagement). If disagreement persists over the official version for leadership, it signals a deeper strategic misalignment that must be resolved.

How is this different from the "Source of Authority" we discussed in Principle 6?

Principle 6 ("Know Your Tool Stack's Boundaries") defines where the data comes from. Principle 9 defines what the data means. You need both: a trusted source and a precise definition.

How granular should we get? Do we need to define every single metric we track?

No. Start with the 10-15 “crown jewels” used in board decks and executive reviews. Focus on the metrics tied to OKRs or funding milestones, the ones driving high‑stakes decisions. Expand gradually.

This feels like bureaucracy, not agility.

It’s the opposite. Defining a metric once saves dozens of recurring debates. That’s agility, moving decisions forward instead of circling definitions.

We already know what our metrics mean.

That’s the illusion. If everyone truly agreed, the same MAU drop wouldn’t trigger four different interpretations in the same meeting. The dictionary isn’t for the metrics you think are clear; it’s for the ones that consistently spark debate.

It will never stay updated.

That risk is real, which is why governance is designed as ongoing stewardship, not a one-time project. Think of it like code or compliance: it requires maintenance because the cost of letting it drift is far higher.

Don’t different teams need different truths?

Exactly. The dictionary doesn’t force a single definition of each concept. It enforces one name, one definition. Finance and Product can both track active users, but each version must carry its own clear label so everyone knows which one is being discussed.

Isn’t this a leadership distraction?

No. It’s a leadership responsibility. If leaders don’t enforce shared definitions, metrics become political weapons instead of decision tools. Strategy collapses when we can’t agree on what the numbers mean.

Each principle in this series builds upon the last to form a coherent system for better decision-making. Here is the full list of principles we are exploring:

Intro: Beyond the Dashboard Series

Principle 1: Avoid the Data Delusion

Principle 2: Adopt a Data-Informed Approach

Principle 3: Choose What to Measure

Principle 4: Use Frameworks as Filters, Not Blueprints

Principle 5: Focus on Adoption, Not Just Delivery

Principle 6: Know Your Tool Stack’s Boundaries

Principle 7: Build Layered Dashboards to Scale Thinking

Principle 8: Manage Multi-Product Portfolios Separately

Principle 9: Reconcile Metric Definitions Before Analysis

Principle 10: Build Thinking Systems, Not Reporting Systems

Principle 11: Turn AI into a Judgment Multiplier