Beyond the Dashboard | Principle 5: Focus on Adoption, Not Just Delivery

Shipping is overhead; adoption is the asset. In B2B, features stick and removal is costly, so prevent bloat. In B2C, unused features fuel silent churn. AI can surface adoption signals, not define value. Lead by asking: what delivers outcomes, not what shipped. Treat non-adoption as debt. Measure it.

TL;DR (For Leaders Reading This Between Two Strategy Calls)

- Shipping is overhead. Adoption is the asset.

- In B2B SaaS, removing features is rarely feasible. Prevention might be your only scalable strategy.

- In B2C SaaS, unused features lead to silent churn. Users remove themselves.

- AI won’t tell you what success looks like. It can help surface adoption signals but not define value for your customers.

- Your product is a system for driving outcomes, not a catalogue of releases.

- Shift the conversation from: “What did we ship?” to “What is delivering value?”

Principle 4 was about how stacking frameworks creates noise and why leadership must enforce clarity by choosing one dominant lens per decision.

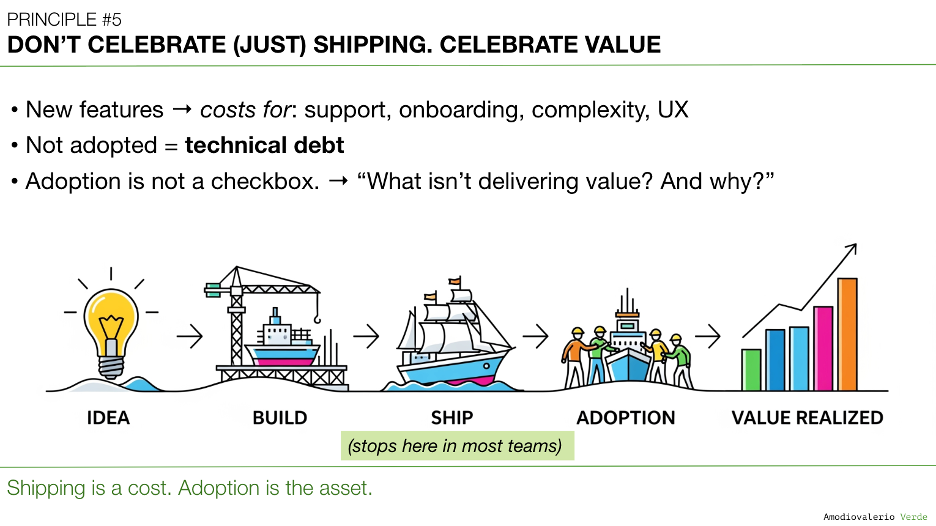

Now, in Principle 5, we confront a deeper trap: mistaking shipping for success. Because features aren’t value. Adoption is.

The Strategic Cost of Mistaking Activity for Progress

You’ve probably lived this moment.

The backlog is full. The roadmap is full. Velocity is high. Features are shipping.

And yet… leadership starts asking questions nobody can answer:

- “Are customers using the last three features we shipped?”

- “Which features generate the most value?”

- “What about the ones we built last year?”

Silence. Awkward glances. Someone mentions that the adoption dashboard is still being built. Leadership moves on.

This silence is your real technical debt.

Because every feature your teams ship that your customers don’t use adds silent operational cost:

- Support tickets (“How do I use this thing?”)

- Training materials (“Here’s how to ignore that setting.”)

- UX clutter (confusing interfaces that hurt adoption of valuable features)

- Maintenance burden (keeping code alive just because someone, somewhere, might use it)

In B2B SaaS, this problem compounds fast. Because:

- Enterprise clients demand stability. You can’t simply remove features.

- Some customers use features that others ignore.

- Removing features involves contract renegotiation, retraining, release notes, legal approvals, and political headaches.

If you ship something unnecessary, you’ll pay for it.

The KPI You’re Probably Not Tracking

Let me ask the uncomfortable question: Do you know the adoption curve of your last three releases?

Not the release notes. Not the tickets closed. Actual, quantified, sustained user adoption.

Most teams don’t.

Because it’s hard. It’s messy. Adoption doesn’t slot neatly into your quarterly OKRs or Jira workflows.

In most companies, adoption data is fragmented across:

- Product analytics.

- Customer success tools.

- Feedback from sales.

- Anecdotes from implementation teams.

Nobody owns the complete picture.

And yet, adoption is the only metric that matters.

Because features not adopted are not neutral. They’re negative assets.

Adoption Is Not a Checkbox. It’s Your Asset.

Let’s be clear: Shipping is a cost. Adoption is the asset.

If customers don’t adopt, your shipped features are liabilities. Strategic debt.

And yet, many teams still operate as if release equals success.

But adoption isn’t binary. It’s not “did we ship it and check usage once?”

Adoption is a curve. A lifecycle. A dynamic pattern of real-world behavior.

And adoption can fail silently.

A feature can be technically correct but strategically irrelevant.

A workflow can be “used” once but never integrated into customer value creation.
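The gap between "tried once" and "sustained" becomes visible once adoption is computed as a curve rather than a single number. The following is a minimal sketch, assuming a hypothetical usage log of `(account_id, days_since_release)` pairs and a known count of eligible accounts. The function names, the three-week threshold, and the sample data are illustrative assumptions, not taken from any specific analytics tool.

```python
from collections import defaultdict

def adoption_curve(events, eligible_accounts, weeks):
    """Share of eligible accounts active in each week after release.

    events: iterable of (account_id, days_since_release) pairs (hypothetical log format).
    eligible_accounts: number of accounts that could have used the feature.
    weeks: number of weeks to report.
    """
    active = defaultdict(set)  # week index -> accounts active in that week
    for account_id, day in events:
        week = day // 7
        if 0 <= week < weeks:
            active[week].add(account_id)
    return [len(active[w]) / eligible_accounts for w in range(weeks)]

def sustained_adopters(events, min_weeks=3):
    """Accounts active in at least `min_weeks` distinct weeks (assumed threshold)."""
    weeks_by_account = defaultdict(set)
    for account_id, day in events:
        if day >= 0:
            weeks_by_account[account_id].add(day // 7)
    return {a for a, ws in weeks_by_account.items() if len(ws) >= min_weeks}

# Illustrative data: account "a" keeps using the feature, "b" and "c" try it once.
events = [("a", 1), ("b", 2), ("c", 3), ("a", 9), ("a", 16), ("a", 23)]
curve = adoption_curve(events, eligible_accounts=10, weeks=4)
sustained = sustained_adopters(events)
```

In this sample, three accounts touch the feature in the first week, but only one comes back. A single "usage after launch" check would report the first number and miss the second.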

Adoption is complex. That’s why most teams avoid measuring it. And that’s why most leaders ignore it.

But complexity isn’t a reason to look away. Complexity is a reason to lead.

Why Removing Features Is Not a Strategy

In theory, if a feature fails to gain adoption, you remove it.

In practice, reality splits between B2B and B2C.

In B2B SaaS: "A feature is forever"

Like a diamond. Only less romantic, and far harder to get rid of.

- A niche customer might rely on it.

- Your biggest client might label it “critical”, even if they use it twice a year.

- Some customers might complain about the loss of value.

Removing it? Risky.

It can trigger commercial escalations, legal reviews, CS misalignment, sales retraining, and full-blown change management.

Result? Features go in fast. Getting them out? That’s a cost center.

Prevention might be your only viable strategy in B2B.

Ship only what will deliver value. Track adoption obsessively post-release.

Because once shipped, an unused feature doesn’t disappear. It becomes permanent strategic debt.

In B2C SaaS: Features Don’t Get Removed. Customers Do.

- You can technically remove features faster and more easily.

- But customers won’t tell you when features confuse or slow them down.

- Most customers will not even complain. They won’t raise a ticket. They won’t explain why.

- They’ll churn quietly long before you realise there’s a problem.

In B2C, poor adoption doesn’t trigger escalations or spark a conversation.

It sparks silent churn or, in worse cases, a very public backlash.

Summary: In B2B, features are hard to remove. In B2C, users remove themselves. In both cases, prevention is your only sustainable strategy.

The Role of AI: Judgment Amplifier or Noise Generator?

AI can help, but only if you lead.

In adoption tracking, AI can be useful. But it’s a tool, not a magic wand.

Where AI is valuable:

- Surface patterns in feature usage.

- Spot anomalies in adoption curves.

- Highlight long-tail features that appear dormant.

- Detect drop-offs in critical workflows.

- Correlate feature usage with retention or churn patterns.

But here’s the risk:

AI doesn’t understand your customers. It cannot define success.

- AI models trained on click data don’t know which features solve real customer problems.

- AI will happily highlight statistically significant but strategically irrelevant patterns (what I call "AI-driven vanity metrics").

- If your teams treat AI outputs as truth, they’ll optimise for noise, faster.

AI amplifies judgment. Without judgment, it multiplies confusion.

AI is your adoption radar, not your navigator.

The Risks of Overusing AI in Adoption Tracking

AI is a powerful tool, but it's also a risk multiplier when misused. Overusing AI in adoption tracking can lead to several critical failures:

- False Precision: AI surfaces patterns, but not priorities. Over-relying on AI risks turning noise into metrics that appear meaningful but lack strategic relevance.

- Decision Paralysis: Too much data, surfaced indiscriminately, overwhelms teams. AI can flood dashboards with irrelevant drop-offs, minor usage anomalies, or edge-case behaviors that distract from meaningful adoption signals.

- Automated Bias: AI models reflect historical usage. If your existing adoption is skewed (toward certain personas, workflows, or geographies), AI will amplify that skew, not correct it.

- Loss of Judgment: AI should augment, not replace, human decision-making. Delegating adoption strategy to AI leads to teams tracking what’s easy to track, not what matters.

The biggest risk? Complexity masquerading as clarity. AI makes data look actionable, even when it isn’t.

AI’s Future Role in Adoption Tracking

Used wisely, AI can transform adoption tracking from a reactive task to a proactive advantage:

- Pattern Detection at Scale: AI excels at spotting latent usage patterns humans miss, surfacing adoption risks before they escalate.

- Anomaly Detection: Drop-offs, churn patterns, or unexplained changes in feature usage can trigger early warnings, allowing Product and CS teams to intervene.

- Segment-Specific Insights: AI can segment usage patterns by persona, region, or customer cohort, allowing tailored adoption strategies.

- Predictive Modeling: AI can forecast which features risk becoming liabilities based on declining engagement, signaling where to invest recovery efforts.

In short: AI will not tell you what matters. But it can show you what’s happening, faster and at scale.

The next evolution? Adoption Intelligence Systems, hybrid models where human-defined KPIs steer AI-powered analysis. Not AI-powered decision-making. A critical distinction.
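The distinction can be sketched in a few lines. In this hedged example, a plain threshold rule stands in for whatever model does the detection, and the point is only the division of labour: people decide which features are on the watchlist and what counts as an unacceptable drop, and the analysis runs inside those limits. The feature names, the watchlist format, and the 0.8 ratio are all illustrative assumptions.

```python
from statistics import mean

def flag_drops(weekly_usage, watchlist):
    """Flag watched features whose latest week fell below a human-set share of baseline.

    weekly_usage: feature name -> weekly active-account counts, oldest first.
    watchlist: feature name -> minimum acceptable ratio of the latest week to the
               trailing average (set by people, not inferred from the data).
    """
    flags = {}
    for feature, min_ratio in watchlist.items():
        series = weekly_usage.get(feature, [])
        if len(series) < 2:
            continue  # not enough history to compare
        baseline = mean(series[:-1])
        if baseline == 0:
            continue  # nothing to drop from
        ratio = series[-1] / baseline
        if ratio < min_ratio:
            flags[feature] = round(ratio, 2)
    return flags

weekly_usage = {
    "bulk_export": [120, 118, 125, 60],
    "dark_mode": [40, 42, 41, 20],
    "approval_flow": [80, 82, 79, 81],
}
# Only features a human has judged strategically relevant are watched.
watchlist = {"bulk_export": 0.8, "approval_flow": 0.8}
flags = flag_drops(weekly_usage, watchlist)
```

Here `dark_mode` also halves, but it is never surfaced because nobody put it on the watchlist. That is the design choice: the drop is statistically real, and whether it matters is a human call.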

Your Product Is a System. Every Feature Weakens or Strengthens It.

Your product isn’t a sum of features.

It’s a system.

Every feature either strengthens that system, by driving customer outcomes, or weakens it, by adding operational drag.

Product teams need to think like portfolio managers, not feature brokers.

- Which features generate actual value?

- Which ones degrade UX clarity?

- Which features are silent liabilities?

Ask your teams: “Of what we’ve already shipped, what isn’t delivering value? And why?”

That’s where leadership begins.

Changing the Conversation: From Shipping to Realising Value

Shift your team’s language.

From:

- “What should we build next?”

- “What’s in the release pipeline?”

- “What’s our velocity?”

To:

- “What adoption rate did we achieve for our last release?”

- “Which existing features need adoption improvement?”

- “Which features are negatively impacting product coherence?”

Adoption isn’t a success story. It’s a strategic metric.

And it’s your job to measure it.

Strategic Implications

Ignoring adoption metrics:

- Bloats your roadmap with noise.

- Weakens your competitive narrative.

- Drains your operational budget.

- Erodes customer trust as products become harder to navigate.

- Slows innovation as UX and engineering teams are trapped maintaining irrelevant code.

Tracking adoption is not optional. It’s a strategic lever.

From Insight to Action

Especially in B2B SaaS, everything shipped is either an asset or a liability.

You can’t afford to treat adoption as an afterthought. Features that aren’t adopted aren’t neutral. They’re negative.

So the challenge is simple, but not easy:

- Declare adoption as a strategic metric.

- Track it relentlessly.

- Treat non-adoption as debt.

- Prevent before you need to remove.

- Let AI scale your observation, but never your strategy.

Next time your leadership asks for delivery metrics, ask one question back: “Do we know who’s using what we built?”

Because in enterprise SaaS, shipping is debt. Adoption is value.

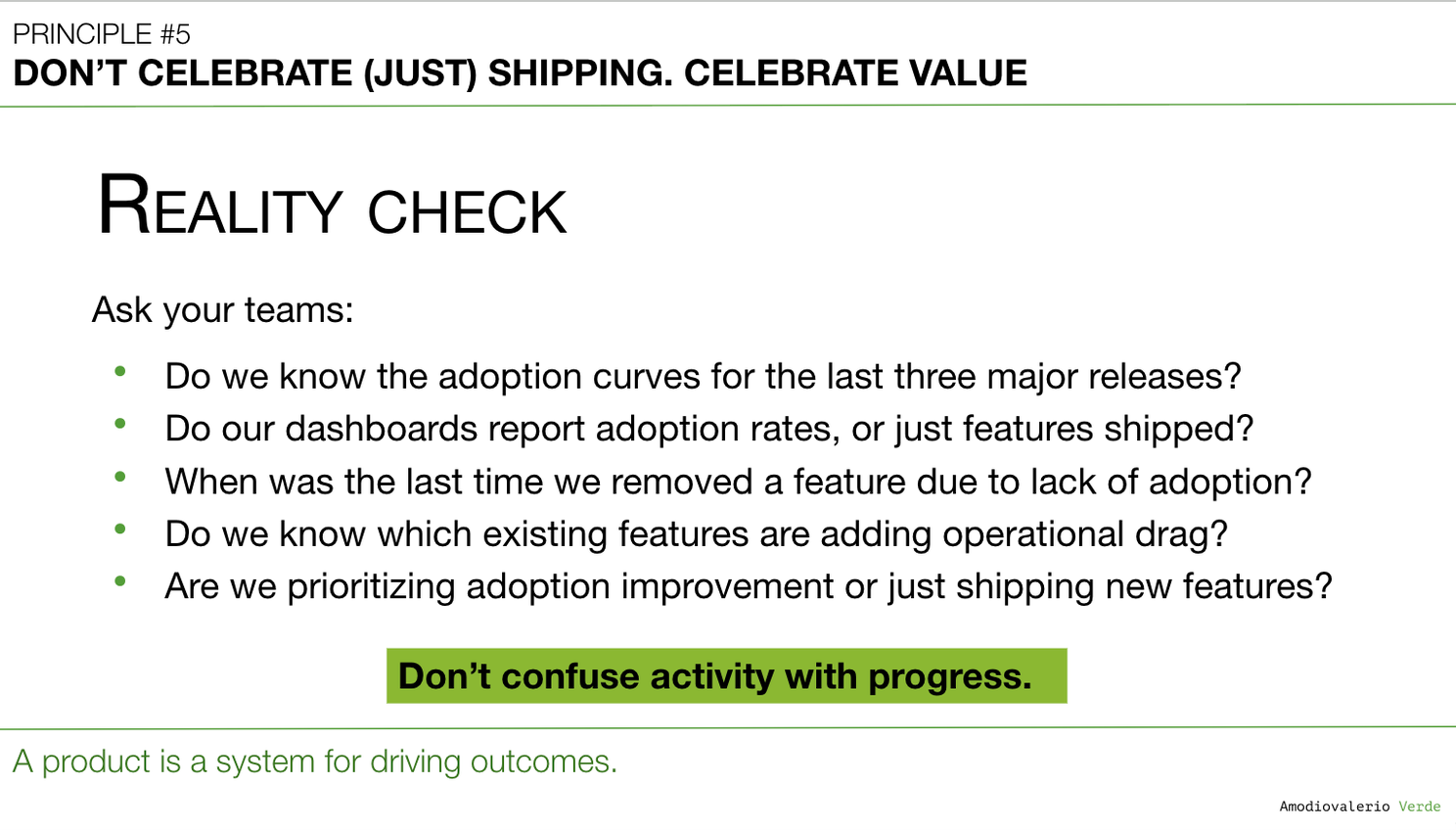

Reality Check

Let’s be blunt.

If you’re not tracking adoption after launch, you’re not managing a product. You’re managing a feature factory.

Ask your teams:

- Can we show adoption curves for the last three major releases?

- Do our dashboards report adoption rates, or just features shipped?

- When was the last time we removed a feature due to lack of adoption?

- Do we know which existing features are adding operational drag?

- Are we prioritizing adoption improvement or just shipping new features?

If you’re hearing silence or vague answers, you’re not building value. You’re building liabilities.

Your Next Three Moves

- Run an Adoption Deep Dive: Pick your last 3 releases. Map adoption curves. Review.

- Define Success Upfront: For your next roadmap item, define adoption metrics before building.

- Audit Your Product Portfolio: List all active features. Tag by adoption level. Flag dormant assets.
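The portfolio audit does not need tooling to get started. Below is a minimal sketch of tagging by adoption level, assuming you can get, per feature, the number of accounts that used it recently and the number of active accounts. The thresholds, tag names, and feature names are illustrative assumptions and should be set per product.

```python
def tag_by_adoption(features, dormant_below=0.05, healthy_from=0.30):
    """Tag each feature by the share of active accounts that used it recently.

    features: feature name -> (accounts_using, active_accounts).
    The two thresholds are illustrative assumptions, not benchmarks.
    """
    tags = {}
    for name, (using, active) in features.items():
        rate = using / active if active else 0.0
        if rate < dormant_below:
            tags[name] = "dormant"
        elif rate < healthy_from:
            tags[name] = "at risk"
        else:
            tags[name] = "adopted"
    return tags

portfolio = {
    "sso_login": (180, 200),
    "custom_reports": (30, 200),
    "legacy_import": (4, 200),
}
tags = tag_by_adoption(portfolio)
dormant = [name for name, tag in tags.items() if tag == "dormant"]
```

A "dormant" tag is a prompt for a conversation, not a deprecation decision. A feature used by 2% of accounts may still be the one your biggest client calls critical.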

Final Reflection: Product is a System. Adoption is Proof.

Your product isn’t a collection of features. It’s a system designed to drive outcomes. Every feature either strengthens that system… or weakens it. Every shipped feature is a strategic decision. Every adopted feature is a strategic victory. Everything else? It’s just overhead.

Now ask yourself, and your teams: Do we know what we’ve built that’s actually delivering value?

If the answer is silence, your adoption problem isn’t tactical. It’s strategic.

And it’s waiting to be solved.

What’s Next

Next, we’ll tackle Principle 6: Know Your Tool Stack’s Boundaries, because your adoption data is fragmented and your tools won’t solve that for you.

PAQs – Potentially Asked Questions

Is tracking adoption feature-by-feature realistic in large enterprise products?

Yes, but with focus.

- Track adoption for customer-facing features that impact core workflows.

- Use AI to monitor long-tail features and surface anomalies.

- Prioritise by revenue impact, strategic goals, or operational risk.

Adoption tracking is not a vanity dashboard. It’s your system health check.

What if customers use features in ways we didn’t anticipate?

Welcome to B2B. Track usage patterns, not just feature toggles.

- Focus on workflow completion rates.

- Segment by persona (admins vs. end users).

- Analyse feature combinations.

AI can help surface unexpected usage paths, but you need human interpretation.
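As a starting point, workflow completion by persona can be computed from very little data. This is a minimal sketch, assuming one hypothetical record per started workflow in the form `(persona, completed)`; the persona labels and sample data are illustrative.

```python
from collections import defaultdict

def completion_rate_by_persona(sessions):
    """Share of started workflows that were completed, per persona.

    sessions: iterable of (persona, completed) pairs, one per started workflow
              (hypothetical record shape).
    """
    started = defaultdict(int)
    completed = defaultdict(int)
    for persona, done in sessions:
        started[persona] += 1
        if done:
            completed[persona] += 1
    return {p: completed[p] / started[p] for p in started}

sessions = [
    ("admin", True), ("admin", True), ("admin", False), ("admin", True),
    ("end_user", True), ("end_user", False), ("end_user", False), ("end_user", False),
]
rates = completion_rate_by_persona(sessions)
```

In this sample, admins complete three of four workflows while end users complete one of four. A blended rate of 50% would hide that the feature works for one persona and fails for the other.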

We’re too busy delivering to track adoption. How do we start?

Start small:

- Choose your last 3 major releases.

- Define what adoption should look like for each.

- Build adoption dashboards (even manual ones in Excel).

- Review adoption monthly at roadmap reviews.

What gets reviewed gets prioritised.

Can AI tell us which features to deprecate?

No. AI can highlight dormant features, but cannot assess strategic importance.

- Use AI to flag candidates.

- Use human judgment to decide.

In B2B, deprecation is rarely tactical. It’s a strategic act.

Isn’t adoption the job of Customer Success?

Customer Success ensures that adopted features drive business outcomes.

However, Product must own adoption inside the product itself: discoverability, usability, and utility. Saying “Product owns adoption” pushes accountability upstream where it belongs.

Product answers: “Is this feature easy to find and use?”

CS answers: “Is the customer getting value from using this feature?”

When is shipping fast more important than initial adoption?

Rarely. There are edge cases, and each one creates deliberate strategic debt you must repay fast:

Secure a market beachhead

- First mover, minimum viable product, core‑hypothesis check.

- Goal: plant a flag, learn, then sprint toward real adoption before competitors catch up.

Close a critical defensive gap

- Competitor launches a must‑have feature that blocks deals.

- Goal: stop immediate revenue bleed. Expect to refactor later.

Run a pure hypothesis test

- Isolated, small user segment. Feature is an experiment, not a product.

- Goal: validate or kill a key assumption, then iterate or remove instantly.

You are not “celebrating shipping.” You are borrowing time. Without a ruthless plan to measure, learn, and drive adoption – or to delete the code – you are just expanding the feature graveyard.

Rule of thumb: Speed buys a data point. Adoption creates an asset.

What about shipping fast to prove Product‑Market Fit or to secure funding?

These are the classic traps. They confuse shipping activity with evidence of value.

Product‑Market Fit (PMF)

PMF is not a long feature list. It’s a small set of users repeatedly solving a painful problem with your product. Ship only what’s needed to get real usage data. Adoption, nothing else, signals PMF.

Securing Funding

A flashy demo impresses naïve investors. Sophisticated investors look for traction (adoption, retention, engagement). Stronger pitch: a focused product plus a deeply engaged pilot customer base.

Isn’t shipping itself value creation, not just adoption?

Shipping creates potential value, not realized value.

Until features are adopted, they deliver no customer outcomes and consume operational resources.

Only adoption proves value to the market, to investors, to everyone.

Shipping is necessary but insufficient.

Are non-adopted features always liabilities? Isn’t that too extreme?

In most B2B SaaS contexts, yes.

Non-adopted features add support, maintenance, UX complexity, and distraction.

However, edge cases exist where unused features can retain strategic optionality.

Still, treating non-adoption as neutral risks underestimating its long-term cost.

Saying “In B2B SaaS, a feature is forever” seems exaggerated. Can’t we sunset features?

You can, but it’s expensive.

Legal, commercial, technical, and customer dependencies often block or delay removals.

Prevention costs less than deprecation.

Saying “forever” is a forcing function to shift mindset, not a literal rule.

Each principle in this series builds upon the last to form a coherent system for better decision-making. Here is the full list of principles we are exploring:

Intro: Beyond the Dashboard Series

Principle 1: Avoid the Data Delusion

Principle 2: Adopt a Data-Informed Approach

Principle 3: Choose What to Measure

Principle 4: Use Frameworks as Filters, Not Blueprints

Principle 5: Focus on Adoption, Not Just Delivery

Principle 6: Know Your Tool Stack’s Boundaries

Principle 7: Build Layered Dashboards to Scale Thinking

Principle 8: Manage Multi-Product Portfolios Separately

Principle 9: Reconcile Metric Definitions Before Analysis

Principle 10: Build Thinking Systems, Not Reporting Systems

Principle 11: Turn AI into a Judgment Multiplier