When Words Lie: The Invisible Risk in Your Product

Words shape trust. Product language isn’t neutral: it trains mental models. “Friend” now means barely acquainted; “I’m thinking...” makes users over-trust AI. Audit copy, cut unearned metaphors, and test for over-trust. Tech runs on tokens; products run on words. Clarity is a trust contract.

Words matter.

Words carry weight. They shape how we understand the world.

We don’t “use facial tissue.” We “grab a Kleenex.”

We don’t “send a connection request.” We “friend” someone.

This isn’t accidental. It’s design. And it has consequences.

Sometimes the shift is obvious, like when a brand name becomes the generic term (think Scotch tape, Velcro, Google). That’s a marker of dominance, not a change in meaning.

But other times, the shift is slower. Subtler. And more dangerous.

The Hidden Cost of Product Language

Take friend, for example.

Facebook redefined it. What used to imply closeness, trust, and shared experience now means “we once went to the same school” (in different years). Or “my cousin’s ex-boss.” Or “my mom.” The word stayed the same, but the meaning drifted. And few noticed.

That’s how semantic drift works: slowly, invisibly, until the original meaning is gone.

One interface at a time. And when you multiply that across millions or billions of interactions, you don’t just change vocabulary. You change perception. You change trust.

We’re seeing it again.

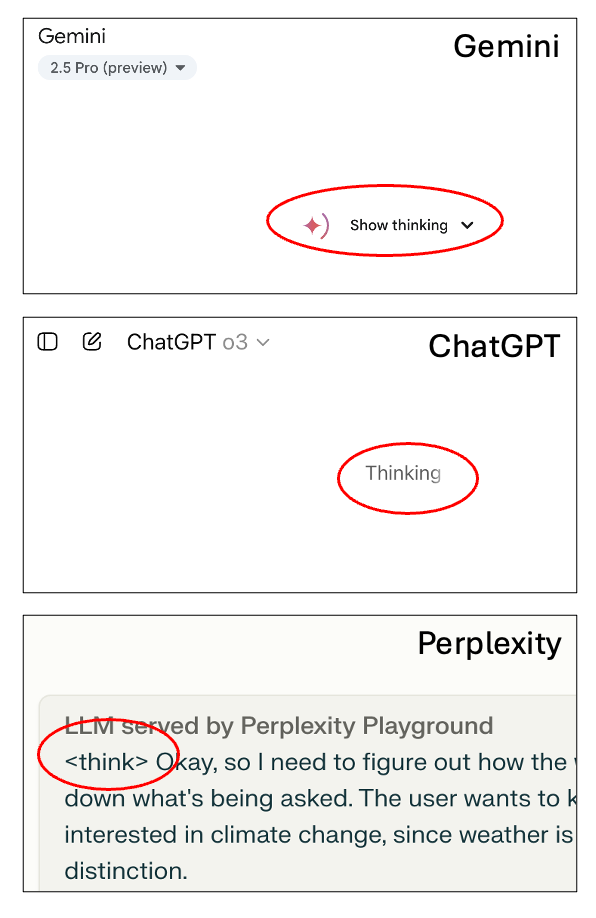

LLM-based tools like ChatGPT, Gemini, and Perplexity say things like:

“I’m thinking...”

Maybe it’s a placeholder. Maybe it’s just filler. But it’s not neutral. It’s a metaphor that changes how people relate to the tool. When an AI says it’s “thinking,” it invites users to believe it can. And that belief has consequences.

Because when we anthropomorphize, we inflate trust. When we generalize, we erase nuance.

And when we don’t think about the words in our product, we still ship them. At scale.

Words become models. Models drive expectations. And mismatched expectations are a liability.

What You Can Do

—> Audit the copy. Strip metaphors your product hasn’t earned.

—> Stop treating microcopy as decoration. It’s UX infrastructure.

—> Train teams on semantic drift and how language shapes mental models.

—> Test for over-trust, not just usability. If users assume cognition where there is none, you have risk.
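A copy audit can start small. Here is a minimal Python sketch that scans UI strings for phrases implying cognition. The watchlist, the string keys, and the audit_copy helper are all hypothetical, made up for illustration; a real audit would use a list your team has agreed on and your product's actual strings.

```python
import re

# Hypothetical watchlist of phrases that imply cognition or emotion.
# These are assumptions for illustration, not a vetted list.
ANTHROPOMORPHIC_PATTERNS = [
    r"\bthinking\b",
    r"\bI understand\b",
    r"\bI believe\b",
    r"\bI feel\b",
    r"\bI remember\b",
]


def audit_copy(strings):
    """Return (key, text, matched phrases) for each string that hits the watchlist."""
    findings = []
    for key, text in strings.items():
        hits = [
            match.group(0)
            for pattern in ANTHROPOMORPHIC_PATTERNS
            for match in re.finditer(pattern, text, flags=re.IGNORECASE)
        ]
        if hits:
            findings.append((key, text, hits))
    return findings


# Hypothetical UI strings keyed by identifier.
ui_strings = {
    "loading.label": "I'm thinking...",
    "loading.alt": "Generating a response...",
    "error.retry": "I understand. Let me try again.",
}

for key, text, hits in audit_copy(ui_strings):
    print(f"{key}: {text!r} -> review {hits}")
```

A match is a prompt for a human conversation, not a verdict. The script only finds the words; deciding whether the product has earned them is still the team's call.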

Bottom Line

Your model might be built with tokens. But your product is built with words.

And if your words are wrong, it doesn’t matter how good your tech is.

Because clarity isn’t just a UX win.

It’s a trust contract.

💬 Seen this happen in your product?

💬 What’s the most misleading label you’ve shipped or seen?

👇 Let’s talk.