Weekend Reflections #1 | The Momentum Wave

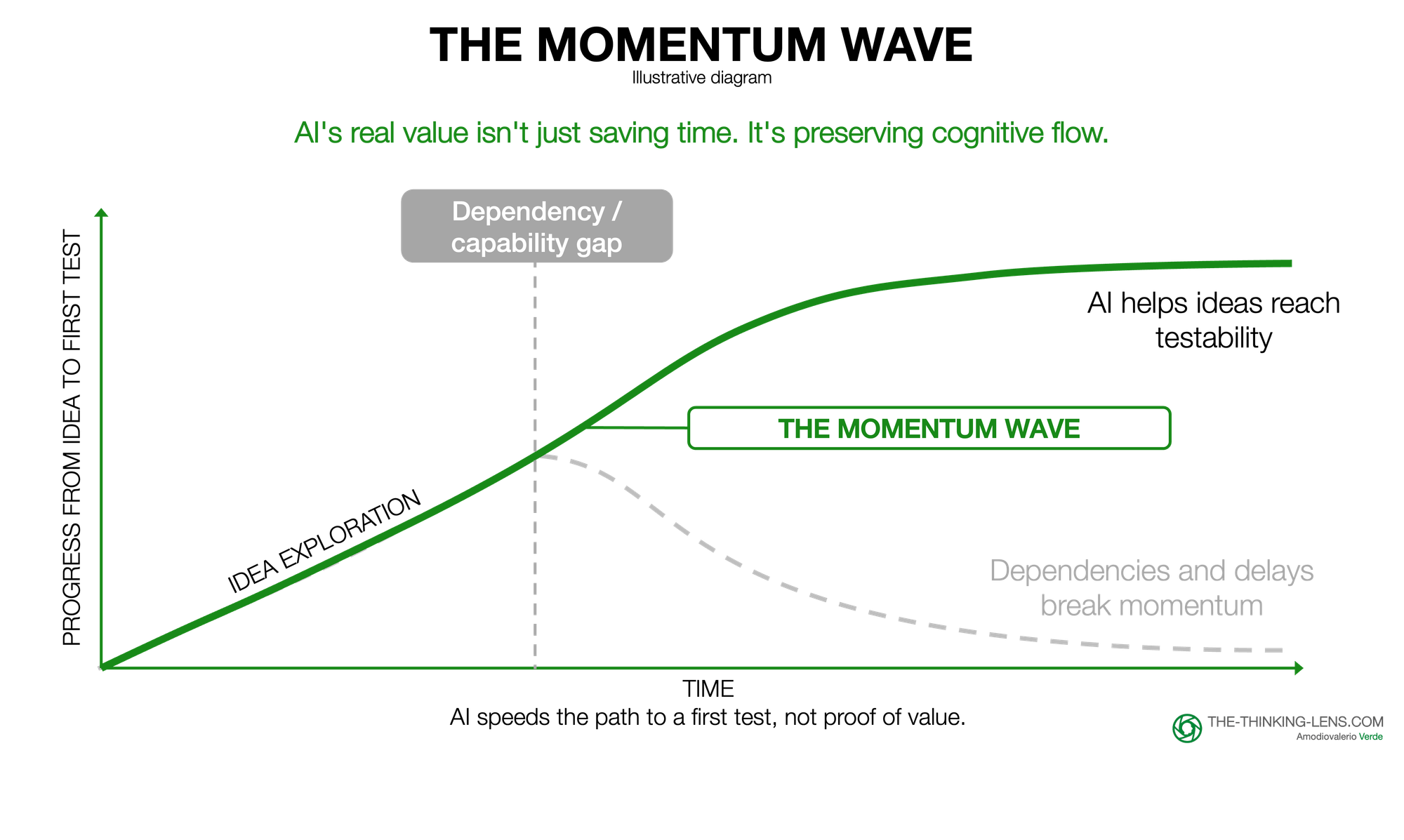

Most ideas don't die because they are bad; they die when momentum breaks before testing. AI shrinks the gap between thought and test, creating a Momentum Wave where natural language lets anyone prototype. But beware: speed without customer validation is just fast failure.

[Views are my own]

Most ideas in knowledge work do not die because they are bad. They die because momentum breaks before they become testable. AI may change that.

Over the past few weekends, I have been experimenting with AI coding tools and felt something I had not felt in a long time: that intense flow state where thinking, making, and learning happen almost continuously. As someone leading product excellence, I want to understand these tools through direct use, not just theory. That experience made me think more clearly about what AI is actually doing when it works well.

Plenty of ideas in knowledge work do fail on their merits. But many others fail for a different reason: they lose momentum before they become testable. One of AI’s most useful near-term effects is that it shrinks the gap between thought and test, preserving momentum when it matters most.

Before AI changes organizations at scale, it may first change something smaller and more immediate: how long an idea can stay alive before friction kills it.

The hidden friction in knowledge work

One of the biggest frictions in knowledge work is interruption. Some interruptions are external: meetings, emails, and context switching. They are constant, but often manageable.

The more dangerous interruptions are internal. They happen when thinking runs into a skill gap, a dependency, or a handoff before an idea becomes tangible.

You have a promising idea for a better way to solve a customer problem or remove an operational friction. But to test whether that approach is even viable, you may need to code a prototype, model the data, design an interface, or configure an API. Without those skills, exploration requires pulling in others. That means resources, prioritization, and delay before you know whether the core idea works.

The thread breaks. Momentum fades. Innovation dies. Quietly. Before the first test.

A lot of the AI discussion still focuses on role substitution: which jobs will change, which tasks will disappear, who replaces whom. Those are fair questions, but they can overshadow a more immediate shift.

The key shift is that natural language is becoming the interface. You no longer need to master Python, SQL, or design tools to explore feasibility. You describe what you are trying to learn, and the tool helps you get to a first judgment. This is not just faster prototyping. It expands who can prototype at all.

AI can sometimes keep the cognitive thread alive long enough to get to a first test. Not because it removes the need for expertise or produces production-ready work, but because it can generate enough structure, output, or direction to keep the work moving while the original problem is still alive in your head.

The Momentum Wave

I call this the Momentum Wave: a temporary phase in which the distance between thought and test becomes unusually short. What makes this different from simply "faster prototyping" is the preservation of cognitive continuity: you stay in the same problem context from question to first answer, without losing the original insight that sparked the exploration.

It happens when focus, energy, and tooling align well enough for an idea to move forward without the usual breaks in momentum. AI does not solve the whole problem. But it can remove just enough of the early skill, knowledge, or execution barrier to keep the work moving.

When a Wave is active, more assumptions get tested, more weak ideas get filtered out, and more promising ideas make it far enough to deserve deeper work.

That is also why well-designed hackathons can work so well. They create artificial Momentum Waves through compressed time, reduced handoffs, and protected focus.

The implication for leaders is simple: in the earliest stage of work, the goal is not polished output. The goal is to reduce the distance between idea and evidence.

The trap

AI can preserve momentum, but it creates false momentum even more easily. When output costs approach zero, the natural tendency is to generate more, not validate better. A rough prototype feels like progress before the core assumption has been tested. This is not an occasional trap; it is the default outcome unless you actively guard against it. Speed only creates value when paired with judgment, validation, and good problem framing. The discipline required is higher, not lower.

Momentum must serve learning, not replace it. Good thinking needs pauses, time for ideas to settle and information to connect. The point isn't nonstop motion, but using momentum well when it appears.

The trap I am watching for is confusing rapid output with validated learning. Speed without customer contact is just fast failure in disguise. And speed pursued solo can miss the cross-functional reality checks that make ideas viable: technical feasibility, business economics, user desirability. The most valuable Momentum Waves happen when rapid individual exploration leads quickly to collaborative validation, not when exploration replaces validation.

A second risk is that AI becomes a crutch. The answer is not to avoid it, but to pair it with deliberate skill-building. Use AI to explore, then learn the underlying mechanics.

The real value and the open questions

The value of AI is not only the hours saved. It is how much more thinking, testing, and learning can happen before momentum is lost.

The value of the Momentum Wave is not measured wave by wave. It shows up over time: more ideas tested, more weak ideas filtered out early, and more promising ideas carried far enough to earn serious investment.

That is what makes this different from simply working faster. AI lowers the barrier to first proof. It allows more people to make an idea tangible enough to be challenged, not just discussed.

That changes the bottleneck. The barrier is no longer only technical skill or access to tools. It is whether the organization has the capacity, judgment, and slack to evaluate more early-stage ideas without drowning in noise. The signal-to-noise filter becomes critical: clear kill criteria, rapid customer contact requirements, and forcing functions that separate exploration from roadmap commitment.

That raises harder questions.

The Momentum Wave is individual and hard to force. Leaders cannot schedule it, but they can create conditions for it. If every team is operating at full utilization, there is no room to follow emerging ideas.

So the real question is not how to control Momentum Waves. It is how much exploratory capacity the organization wants to create. The transition path for underwater teams is not "add 15% slack" but "what are we willing to stop doing, defer, or fund differently to create space for the ideas AI makes testable?" That is a harder conversation, but the right one.

A second question: does faster testing improve learning? Speed to first test only matters if the test validates something meaningful: customer value, not just technical feasibility.

Something you can try next week:

- Pick one person with an idea that's stuck on a skill gap. Pair them with AI tools and a senior IC (individual contributor) as a coach. See if you can get from hypothesis to testable prototype in 120 minutes. Then decide: was the learning worth it?

- Ask your team: What idea died this quarter because you couldn't get past a skill gap? Pick the top 3. See if AI tools can revive one.

What comes next

The immediate challenge is creating capacity for Momentum Waves. But in the coming months, the challenge will shift to coordination: when your team is riding simultaneous Waves, how do you synchronize without killing momentum? And as AI expands from prototyping into validation, the Wave may lengthen from "idea to test" into "idea to validated insight." Faster validation sounds good, but only if paired with better judgment about what to validate. The risk is not moving too slowly. It is building speed without the discipline to make speed valuable.

What's your experience? Where does a skill gap break your flow, and where has AI helped keep the thread alive? I'd love to hear your perspectives.