Stop Doing Discovery Karaoke

Match discovery to risk, not habit. Most teams default to interviews, usability tests, and betas, which leaves blind spots. Treat Compliance & Ethics as a first-class risk. Build a simple map: risk → stage → methods. Use AI to speed up clustering, summarization, and signal detection. Focus on reducing uncertainty.

[Views are my own. Not legal or compliance advice.]

Match Discovery to Risk and Use AI to Do It Better

Most product teams over-rely on the same few discovery methods:

- User and customer interviews

- Usability tests

- Surveys

- A validation prototype or a mock-up

- Maybe a beta

It’s familiar. It’s safe. It’s fast. And under pressure, it becomes the default. But default ≠ deliberate. In many cases, it’s an unexamined shortcut.

The Pattern

- Big bet? “Let’s talk to some customers.”

- New concept? “Let’s build a prototype.”

- 3 months later? “Why didn’t we catch this earlier?”

This isn’t incompetence. It’s structural: time pressure, ingrained habits, shallow method literacy, and little to no tooling. It’s like karaoke with your top 5 songs: it works until it doesn’t. Then it just feels off-key.

Discovery Is Not a Ritual

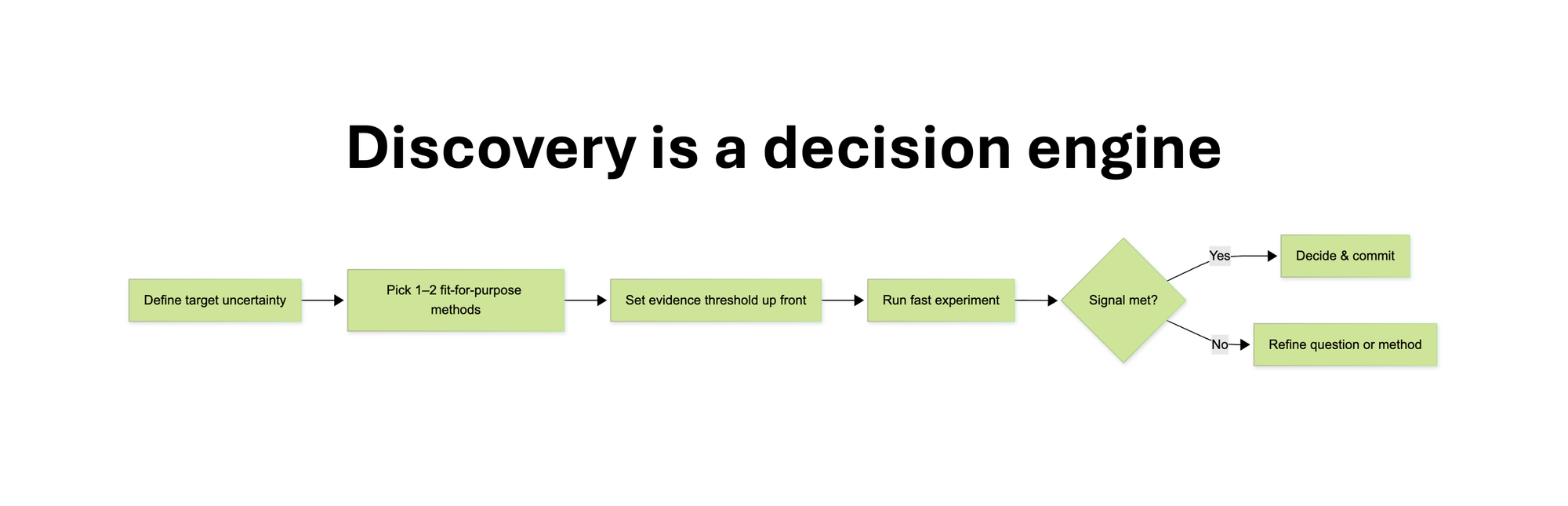

Discovery is a decision engine. Its job is to reduce uncertainty so we can move faster, with more confidence.

We often refer to the four classic product risks, popularized by Marty Cagan:

- Value/Desirability - Will the customer buy it, or will the user choose to use it?

- Feasibility - Can we build it?

- Viability - Can the stakeholders support the solution?

- Usability - Can the user use it?

A few years ago, Marty also proposed a fifth risk (originally treated as part of business viability):

- Ethical Risk - Should we build it?

That framing still isn’t widely adopted, but it’s more relevant than ever.

With the pace of GenAI development, AI ethics is becoming a major risk area in its own right, touching everything from explainability and data transparency to unintended harm, manipulation, and regulatory exposure.

Moreover, in many industries, ethics is only half the story. Given today’s regulatory climate, compliance has become just as critical, and just as much of a blocker if ignored.

So in practice, we’re dealing with a broader fifth risk, Compliance & Ethics: Are we putting the company or users at legal or ethical risk? Could this feature be misused or manipulated? Are we transparent and fair in how we use data? Are we even allowed to build it? And should we?

Think: GDPR, AI governance, EU AI Act, accessibility, algorithmic bias, platform restrictions. These are not theoretical. They kill deals, block launches, and damage trust after you’ve already invested. In regulated or enterprise contexts, this risk comes first.

Takeaway: Treat compliance and ethics as a first-class risk. Not a footnote.

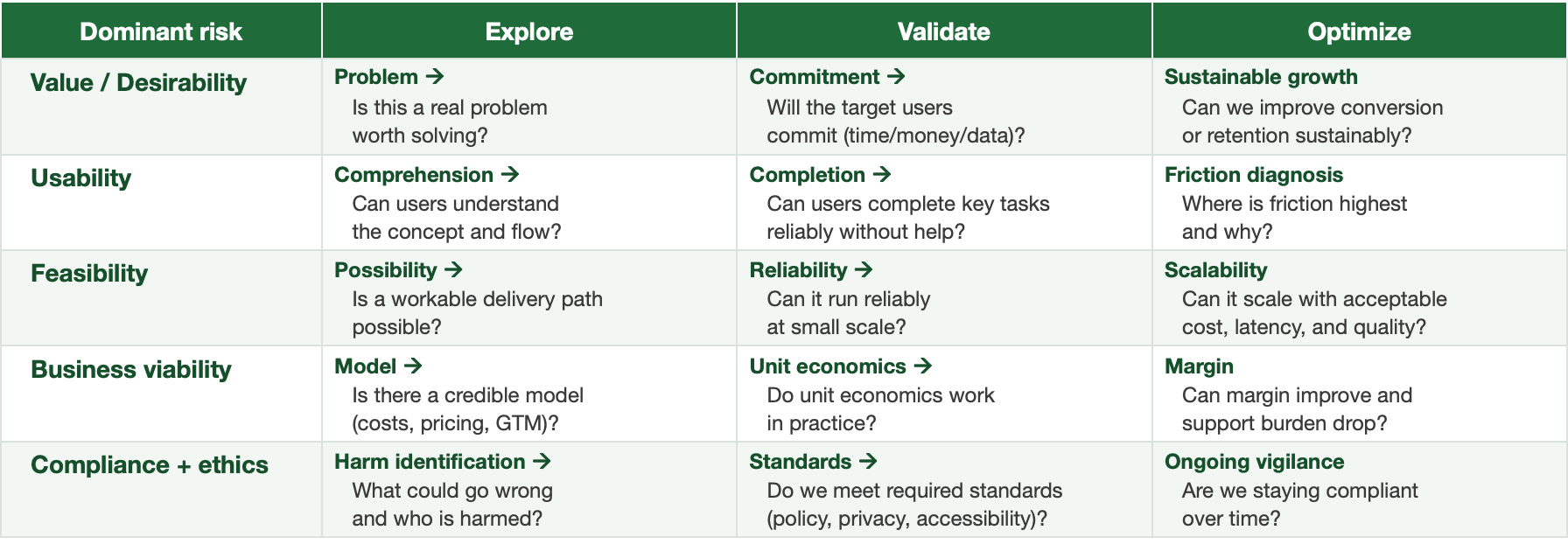

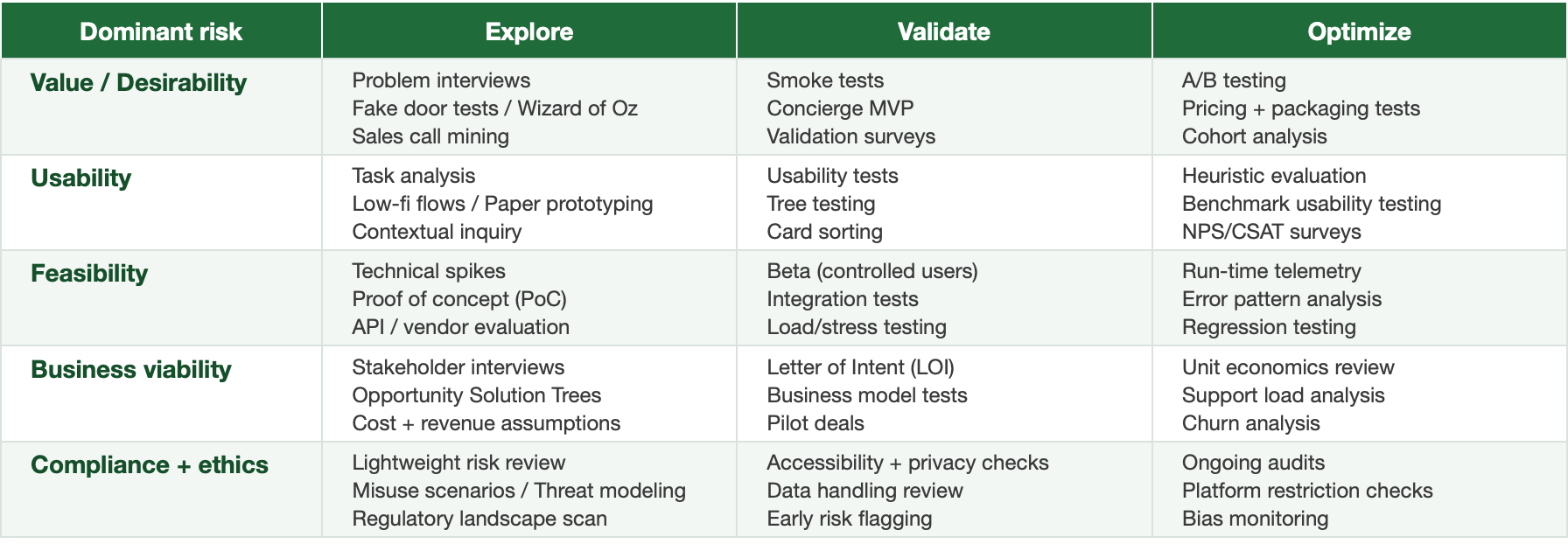

Discovery Should Match the Moment

There are 40+ legitimate discovery techniques. Most teams use 3-5. That’s the real problem (and no, you do not have to use ALL of them).

You don’t need a PhD in research. But you do need to match method to:

- Type of risk

- Stage of the product

- Access to users

- Context: B2B vs B2C, regulated vs non-regulated

Start with a few questions:

- What risk are we trying to reduce?

- Are we in the problem space or solution space?

- Are we at explore, validate, or optimize stage?

- Do we have direct user access or not?

Examples:

- Don’t spend 5 days on a design sprint when a fake door test can validate the idea in 1.

- Don’t launch a 3-month beta if a 2-week concierge test gives stronger insight.

- Don’t over-interview when analytics already tell the story.

Familiar ≠ fit-for-purpose. Method mismatch leads to waste, delay, and blind spots.

Map It. Teach It. Scale It.

Build a simple method map: [Risk] → [Stage] → [Recommended Methods]. Base it on team expertise, resources, product type, company type, and your specific unknowns, and keep it lightweight, teachable, and visible.
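A method map can be as small as a lookup table. The sketch below is a hypothetical illustration, assuming the five risks above as keys, the explore/validate/optimize stages as the second key, and method names taken from the technique list at the end of this article (plus the "technical spike" and "compliance triage checklist" mentioned in the PAQ). The specific pairings, the names `METHOD_MAP`, `NEEDS_USER_ACCESS`, and `recommend`, and the user-access filter are assumptions for illustration, not a prescribed mapping.

```python
from enum import Enum


class Risk(Enum):
    VALUE = "value/desirability"
    FEASIBILITY = "feasibility"
    VIABILITY = "viability"
    USABILITY = "usability"
    COMPLIANCE_ETHICS = "compliance & ethics"


class Stage(Enum):
    EXPLORE = "explore"
    VALIDATE = "validate"
    OPTIMIZE = "optimize"


# Hypothetical example entries: (risk, stage) -> recommended methods.
# Each team fills this in from its own expertise, resources, and context.
METHOD_MAP: dict[tuple[Risk, Stage], list[str]] = {
    (Risk.VALUE, Stage.EXPLORE): ["JTBD Interviews", "Search Trend Analysis"],
    (Risk.VALUE, Stage.VALIDATE): ["Fake Door Tests", "Landing Page Tests"],
    (Risk.FEASIBILITY, Stage.VALIDATE): ["Technical spike", "Prototyping"],
    (Risk.USABILITY, Stage.VALIDATE): ["Usability Testing", "Tree Testing"],
    (Risk.VIABILITY, Stage.VALIDATE): ["Pre-sales / Pilot Deals", "Stakeholder Interviews"],
    (Risk.COMPLIANCE_ETHICS, Stage.EXPLORE): ["Compliance triage checklist", "Assumption Mapping"],
}

# Hypothetical: methods that require direct contact with end users.
NEEDS_USER_ACCESS = {"JTBD Interviews", "Usability Testing", "Pre-sales / Pilot Deals"}


def recommend(risk: Risk, stage: Stage, has_user_access: bool, max_methods: int = 2) -> list[str]:
    """Return up to `max_methods` methods for the given risk and stage.

    Methods that need direct user access are dropped when the team lacks it.
    An empty list means the map has no entry yet and should be extended.
    """
    candidates = METHOD_MAP.get((risk, stage), [])
    if not has_user_access:
        candidates = [m for m in candidates if m not in NEEDS_USER_ACCESS]
    return candidates[:max_methods]


print(recommend(Risk.VALUE, Stage.VALIDATE, has_user_access=True))
# prints ['Fake Door Tests', 'Landing Page Tests']
```

Capping the result at two methods mirrors the "one or two methods per bet" guidance; an empty result is a prompt to extend the map, not a reason to fall back on habit.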

Match discovery to risk, not habit.

Use it as scaffolding, not dogma. Train teams. Build shared language. Give them method literacy, not just freedom.

This is what separates “we talked to 10 users” from actual discovery maturity.

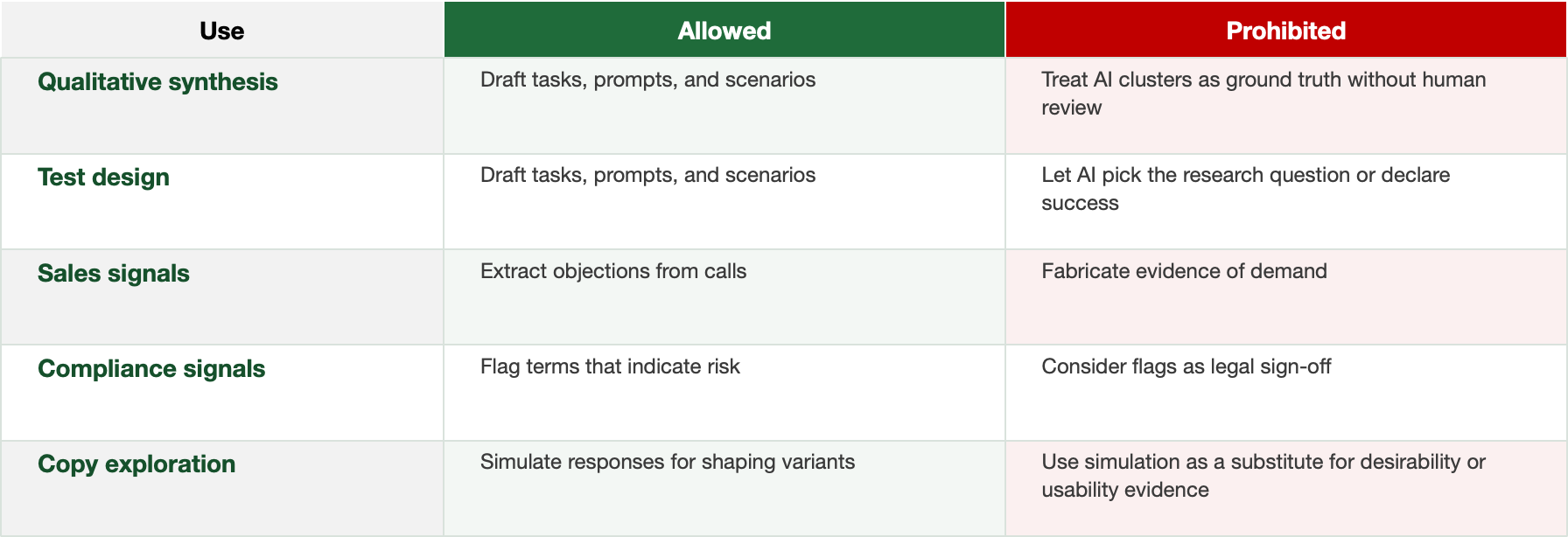

Where AI Actually Helps

AI won’t decide what to test. But it does accelerate how you do it.

Already, top teams use AI to:

- Cluster qualitative themes from interviews

- Summarize open-text survey feedback

- Draft usability tasks and test plans

- Extract objections from sales calls

- Flag early signs of regulatory risk

- Simulate user responses to copy or flows

- Generate prompts for assumption testing

- Detect behavioral patterns in product funnels

This is augmentation, not automation. AI doesn’t replace judgment, but it compresses time-to-signal. Used right, it frees teams to focus on framing the right questions, not just running tests.

Guardrails: “AI in discovery: allowed vs prohibited”

What’s Next

Expect discovery tools to evolve fast in the next 12-18 months:

- Tools that take your context, risk, and timeline and recommend matching discovery methods

- Dynamic systems that learn across projects to improve their recommendations

- From static playbooks to adaptive decision engines

This is where discovery is going. And it’s badly needed.

Stop Defaulting. Start Matching.

If your discovery feels slow, repetitive, or toothless, it’s probably not your team. It’s your method.

The best teams aren’t just fast. They’re deliberate. They don’t start with “what tool are we comfortable with?” They start with “what uncertainty are we killing?”

As leaders, it’s on us to:

- Build shared language around risks and discovery goals

- Map methods to use cases

- Fund experimentation

- Model better defaults

Your Turn

👉 What discovery methods do you actually use?

👉 How are you integrating AI into your process?

Let’s stop playing the same 5 songs. Let’s build a smarter, risk-matched, AI-accelerated discovery system.

Non-exhaustive list of techniques - for reference:

1) Generative (Understanding Needs & Problems)

Use when you're in the problem space and want to uncover insights.

- User Interviews

- Jobs-to-be-Done (JTBD) Interviews

- Diary Studies

- Ethnographic Research / Field Studies

- Contextual Inquiry / Ride-Alongs

- Customer Support Shadowing

- Community Feedback / Forums

- Sales Call Listening / Analysis

- Search Trend Analysis

- Stakeholder Interviews

2) Evaluative (Validating Solutions & Concepts)

Use when you're in the solution space and want to test usability or desirability.

- Usability Testing

- Surveys

- Card Sorting

- Tree Testing

- Expert Reviews / Heuristic Evaluation

- Landing Page Tests

- Fake Door Tests

- Wizard of Oz / Manual Backends

- Beta Programs

- Pre-sales / Pilot Deals

- Concierge MVPs

- Prototyping

3) Exploratory / Strategic Framing (Mapping and Framing Problems)

Use when you're trying to structure uncertainty or design your discovery approach.

- Assumption Mapping

- Opportunity Solution Trees (OST)

- Problem Exploration / Five Whys

- Value Proposition Canvas

- Story Mapping

- Journey Mapping

4) Experimentation / Testing (Fast Signals & Market Validation)

Use when you're looking to test demand, feasibility, or specific hypotheses.

- Fake Door Tests

- Landing Page Tests

- Concierge MVPs

- Wizard of Oz / Manual Backends

- Beta Programs

- Pre-sales / Pilot Deals

5) Collaborative / Internal Alignment Tools

Use to generate ideas, align teams, or synthesize insights.

- Design Sprints

- Internal Team Workshops

- Co-creation/co-design Workshops

- How Might We Sessions

- Story Mapping

- Opportunity Solution Trees (OST)

PAQ (Probably Asked Questions)

Does “compliance first” slow innovation?

No. It prevents expensive rework and blocked launches. Use a fast triage, not a full legal review. The goal is to spot category-level risks early, then right-size the depth of checks. Cheap screening first, deeper review only when a concept crosses predefined risk thresholds.

Is this just process theater with a new name?

No. The core idea is to match the smallest effective method to the biggest current risk. One or two methods per bet, clear evidence thresholds, then a decision. If it does not reduce uncertainty that affects a decision, do not do it.

Why not just run more interviews and usability tests?

Because overusing a few familiar methods leaves blind spots. If the top risk is feasibility, a spike, a prototype with realistic constraints, or a data backfill experiment beats more interviews. If the risk is compliance, a short-form triage beats another usability session.

Can AI-simulated feedback replace real users?

No. Use AI only to compress time to signal: summarize, cluster, draft prompts, or generate counterexamples. Simulation is exploratory and never evidence of desirability, usability, or business value. Real users and real data decide.

We do not have time for a method map.

You do not have time for preventable rework. A lightweight map takes 30 to 45 minutes and saves weeks. Define the primary risk, pick 1 to 2 methods, set an evidence bar, and run.

How do we set evidence thresholds so decisions do not drag?

Tie the bar to blast radius. Bigger bets or higher risk need stronger evidence. Example: for a medium-risk concept, require 3 of 4 signals within 7 days, such as task success on a realistic prototype, a willingness-to-pay proxy, an ops feasibility spike, and a quick compliance screen.
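The medium-risk example (3 of 4 signals within 7 days) can be written as a small, time-boxed decision rule. A minimal sketch, assuming each signal is logged as passed or not together with the day it came in. Only the medium-risk bar comes from the text; the low- and high-risk bars, the "no-go" outcome, and the names `Signal`, `EVIDENCE_BARS`, and `decide` are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    name: str
    passed: bool
    day: int  # days since the discovery cycle started


# Risk level -> (signals that must pass, time box in days).
# Only "medium" is from the article; "low" and "high" are hypothetical.
EVIDENCE_BARS = {
    "low": (2, 5),
    "medium": (3, 7),
    "high": (4, 14),
}


def decide(risk_level: str, signals: list[Signal], today: int) -> str:
    """Return 'go', 'keep going', or 'no-go' for one bet.

    Only signals that passed inside the time box count toward the bar.
    """
    required, time_box = EVIDENCE_BARS[risk_level]
    passed = sum(1 for s in signals if s.passed and s.day <= time_box)
    if passed >= required:
        return "go"
    if today < time_box:
        return "keep going"
    # Time box is over without enough evidence: make the call instead of extending.
    return "no-go"


signals = [
    Signal("task success on realistic prototype", True, 3),
    Signal("willingness-to-pay proxy", True, 5),
    Signal("ops feasibility spike", False, 4),
    Signal("quick compliance screen", True, 2),
]
print(decide("medium", signals, today=6))  # prints go
```

The time box is what keeps decisions from dragging: once it expires, the rule forces a decision rather than another round of research.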

What if the main risk changes mid-flight?

Great. Update the risk map and switch to the method that best attacks the new top risk. Treat the map as a living object. Discovery is a decision engine, not a linear checklist.

How does this work for small teams without a compliance function?

Use a triage checklist and a go-to external advisor for high-risk items. Most items will pass a basic screen. Escalate only when the checklist flags a real issue.
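A triage checklist can be a handful of yes/no questions where any "yes" triggers escalation. The sketch below is illustrative only: the questions paraphrase the Compliance & Ethics questions earlier in this article, and the `TRIAGE_QUESTIONS` list and `triage` function are hypothetical. Treating unanswered questions as flags is a design assumption, chosen so that gaps in the screen get escalated rather than silently passed.

```python
# Hypothetical checklist; the questions paraphrase the Compliance & Ethics risk above.
TRIAGE_QUESTIONS = [
    "Does it process personal data in a new way?",
    "Does it use AI to make or shape decisions about users?",
    "Could the feature be misused or manipulated?",
    "Does it touch accessibility requirements or platform restrictions?",
    "Is the team unsure whether it is allowed to build this at all?",
]


def triage(answers: dict[str, bool]) -> tuple[str, list[str]]:
    """Return ('pass' or 'escalate', list of flagged questions).

    Any 'yes' flags the item; a missing answer also counts as a flag.
    """
    flagged = [q for q in TRIAGE_QUESTIONS if answers.get(q, True)]
    return ("escalate" if flagged else "pass", flagged)


# Example: everything answered "no" except the misuse question.
answers = {q: False for q in TRIAGE_QUESTIONS}
answers["Could the feature be misused or manipulated?"] = True
status, flagged = triage(answers)
print(status, flagged)
```

Only the flagged items go to the external advisor, which keeps the cheap screen cheap for the majority of items that pass.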

Does this apply to B2C as well as enterprise?

Yes. The risk mix changes, not the principle. B2C often leans toward desirability and growth risks. Enterprise leans toward compliance, feasibility, and viability. Match methods to the real top risk, not to habit.

How do we show ROI of this approach?

Track avoided rework, cycle time to decision, and the number of decisions made per discovery week. Compare before and after adoption. Also track launch blockers caught upstream and incidents avoided.
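The before/after comparison can start from a plain log of discovery decisions. A minimal sketch, assuming each decision records when the question was opened and decided, whether the result later needed rework, and whether a launch blocker was caught during discovery. The `Decision` record, the metric names, and the use of rework rate as a proxy for avoided rework are all assumptions; the sample records are made up purely to show the calculation. Incidents avoided are left out because they need a separate incident log.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Decision:
    opened: date          # when the discovery question was raised
    decided: date         # when the team made the call
    rework: bool          # did the shipped result need significant rework later?
    blocker_caught: bool  # was a launch blocker caught during discovery?


def discovery_metrics(decisions: list[Decision], discovery_weeks: float) -> dict[str, float]:
    """Summarize one period, e.g. before or after adopting a method map."""
    n = len(decisions)
    if n == 0 or discovery_weeks <= 0:
        raise ValueError("need at least one decision and a positive number of weeks")
    return {
        "avg_days_to_decision": sum((d.decided - d.opened).days for d in decisions) / n,
        "decisions_per_discovery_week": n / discovery_weeks,
        "rework_rate": sum(d.rework for d in decisions) / n,  # proxy for avoided rework
        "blockers_caught_upstream": float(sum(d.blocker_caught for d in decisions)),
    }


# Made-up example records, only to show the calculation.
before = [
    Decision(date(2025, 1, 6), date(2025, 2, 3), rework=True, blocker_caught=False),
    Decision(date(2025, 1, 13), date(2025, 2, 10), rework=False, blocker_caught=False),
]
after = [
    Decision(date(2025, 6, 2), date(2025, 6, 12), rework=False, blocker_caught=True),
    Decision(date(2025, 6, 9), date(2025, 6, 16), rework=False, blocker_caught=False),
    Decision(date(2025, 6, 16), date(2025, 6, 23), rework=True, blocker_caught=False),
]
for label, period, weeks in (("before", before, 4), ("after", after, 3)):
    print(label, discovery_metrics(period, weeks))
```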

April 2026

Improved visual presentation and terminology clarity.

Framework substance unchanged.

June 2025

Original publish date