PAIR: A Simple Model for AI-Accelerated Apprenticeship

AI should speed work, not erase the training ground. A Seniors-only + AI model boosts short-term output but drains your pipeline and increases risk. Try Senior + Junior + AI: juniors draft with AI, seniors inspect thinking. Treat mentorship as first-class work and measure it. Build great teams. Now.

A quick note: The framework presented here is a working hypothesis based on my experience and observations. The opinions are my own, the examples are illustrative, and this is not company policy or legal advice.

TL;DR (for leaders betting on AI for efficiency)

- If you are considering a "Senior + AI only" model, stress-test the second-order effects on your pipeline, review load, and risk.

- Use Seniors-only + AI as a temporary exception for incidents, regulated changes, or urgent customer commitments.

- One workable lens is Senior + Junior + AI, where juniors draft with AI and seniors inspect thinking.

- Use AI to remove repetitive work, not the training ground itself. This allows juniors to focus on higher-order skills like problem-framing and trade-off analysis from day one.

- If you try this lens, treat mentorship as first-class work and measure it openly.

Introduction: The Efficiency Mirage

I still remember my early years in the late 90s. The digital trenches. Writing boilerplate C++ code that felt like assembling the same flat-pack furniture from a Swedish company every single day. Manually writing test scripts. Trying to make sense of customer notes so messy and chaotic they felt like abstract poetry. Doing a lot of data wrangling and basic descriptive statistics. It wasn’t glamorous work. But it was foundational. It built the instincts I still rely on today.

If I’d had an AI assistant back then, I would have begged it to write the boilerplate and summarize the chaos. And that’s precisely the point. AI should be the ultimate accelerator, the tool that removes the repetitive work, not the one that removes the training ground itself.

Yet discussions about using AI to replace juniors are ongoing. The logic is seductive and fits neatly on a spreadsheet: “One senior with AI copilots can replace three juniors.” This is not an argument against efficiency. It is a caution against treating Senior + AI as a substitute for early-career development. That shortcut erodes the pipeline that produces future seniors and concentrates knowledge in a few people. Short-term throughput gains can create long-term operating risk.

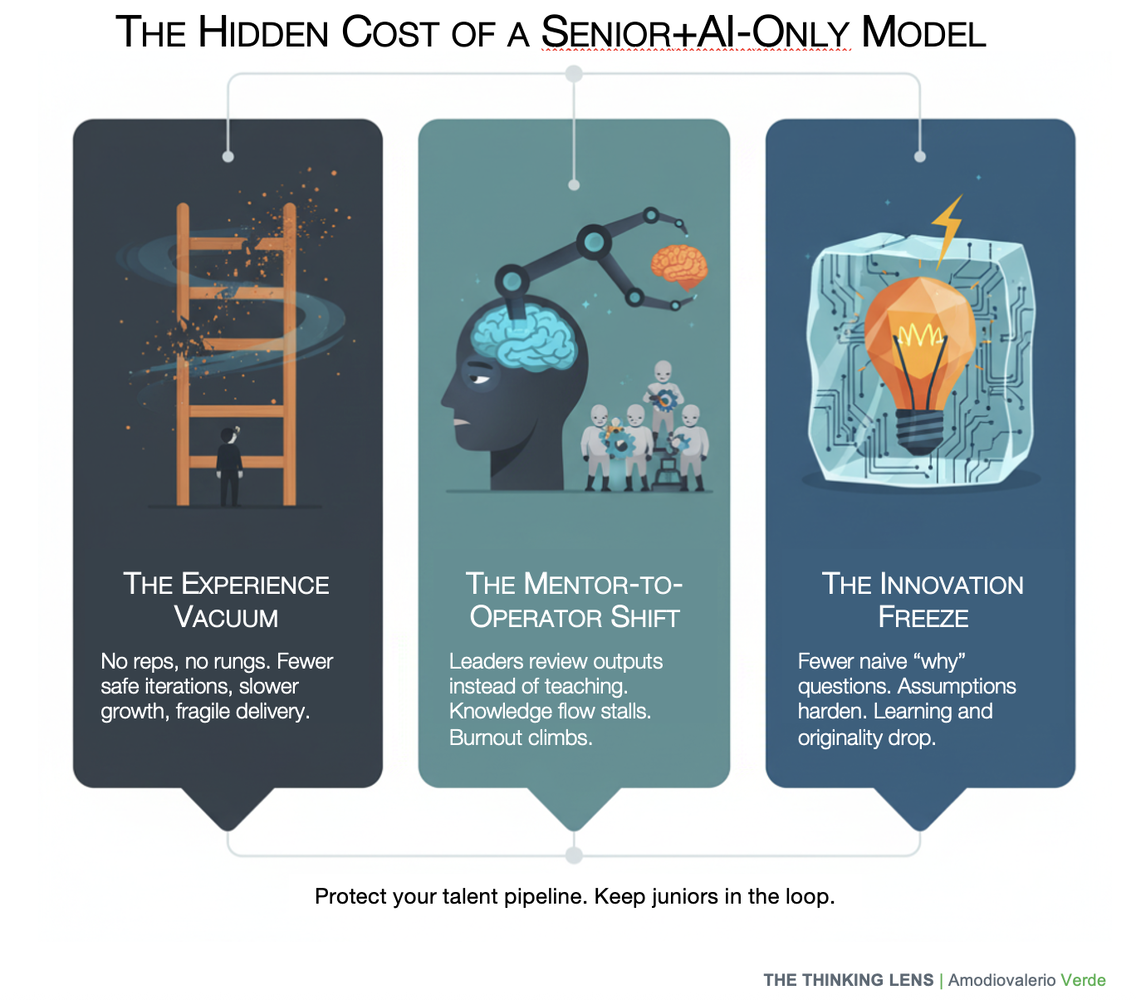

This approach creates three hidden costs that your dashboards will ignore until it’s too late:

- The Experience Vacuum: Seniors are forged through a thousand rounds of deliberate practice on real work, with safe failures. When you automate away the foundational work, you stop creating those short, low-risk iterations. You end up with operators who can follow a map but could never draw one themselves.

- The Mentor-to-Operator Shift: Your best, most experienced people stop teaching the why behind the work. They become prompt supervisors, reviewing outputs instead of shaping thinking. Knowledge transfer stalls. Burnout rises as their leverage disappears.

- The Innovation Freeze: Juniors ask the naive, “why are we doing it this way?” questions that break old assumptions. They are the randomizing element in a system that craves stability. When you remove that energy, your culture calcifies. The product stops learning because the organization has forgotten how to question itself.

This is primarily a leadership design problem. Leaders are accountable for forging the next generation of leaders. We should be using AI to multiply our ability to do that, not as an excuse to cancel it.

Note: In this article ‘junior’ and ‘senior’ refer to experience level, not age or any protected characteristic.

One lens: Senior + Junior + AI as an apprenticeship loop

The alternative isn’t a romantic return to the old ways. It's a more pragmatic, sustainable model that uses AI as an accelerator, not a replacement. The formula is simple: Keep the seniors. Keep the juniors. Give both a copilot. Then, change the work.

In this model, the roles are redefined. Juniors use AI to scaffold first drafts, summarize research, and automate repetitive tasks. Seniors shift their focus from correcting syntax to inspecting logic. The review is no longer about surface-level correctness; it’s a deep dive into the thinking behind the work. This is not just a mentorship program; it’s an operating model designed to compound capability.

This creates a symbiotic relationship where both juniors and seniors gain tangible benefits by changing the nature of their work and collaboration.

For juniors: from grunt work to real work

This model skips the thankless dues-paying phase and moves juniors straight to high-value learning.

- Accelerated learning: Work shifts from rote execution to judgment, problem framing, and trade-off analysis, guided by a mentor.

- Day-one impact: AI handles boilerplate so juniors ship real projects, not sandbox tasks.

- Clear path: The Levels of Independence rubric gives evidence-based milestones and clear goals, and ties them to progression and compensation where appropriate. No “shadow work.”

- Future-proof skills: Juniors learn the craft with an AI copilot and become AI-native professionals.

- Early leadership: The capstone is training the next junior, which builds mentorship and leadership.

For seniors: from contributor to force multiplier

This system moves seniors from high-level ICs to true multipliers. Their time goes to shaping judgment and scaling experience.

- More leverage, less repetitive work: Freed from basic reviews. Focus on the junior’s thinking, reasoning, and assumptions. Mentorship has bigger impact.

- Scalable impact, reduced burnout: Junior plus AI produces first drafts. Seniors spread expertise without bottlenecks. Their role becomes more strategic.

- Formal recognition for mentorship: Teaching is a first-class responsibility. It is measured and rewarded in performance goals, not unpaid extra work.

- Building a legacy: Seniors forge the next leaders. This strengthens the bench and the team’s long-term resilience, and it gives the work purpose.

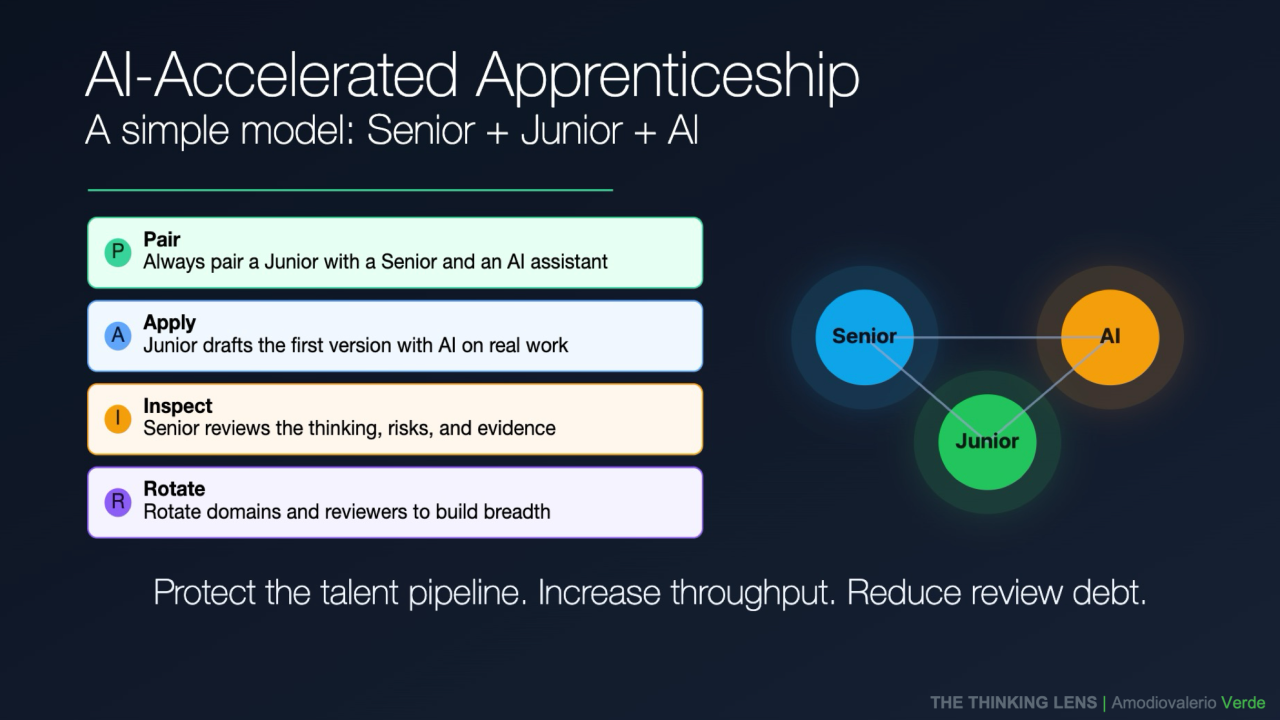

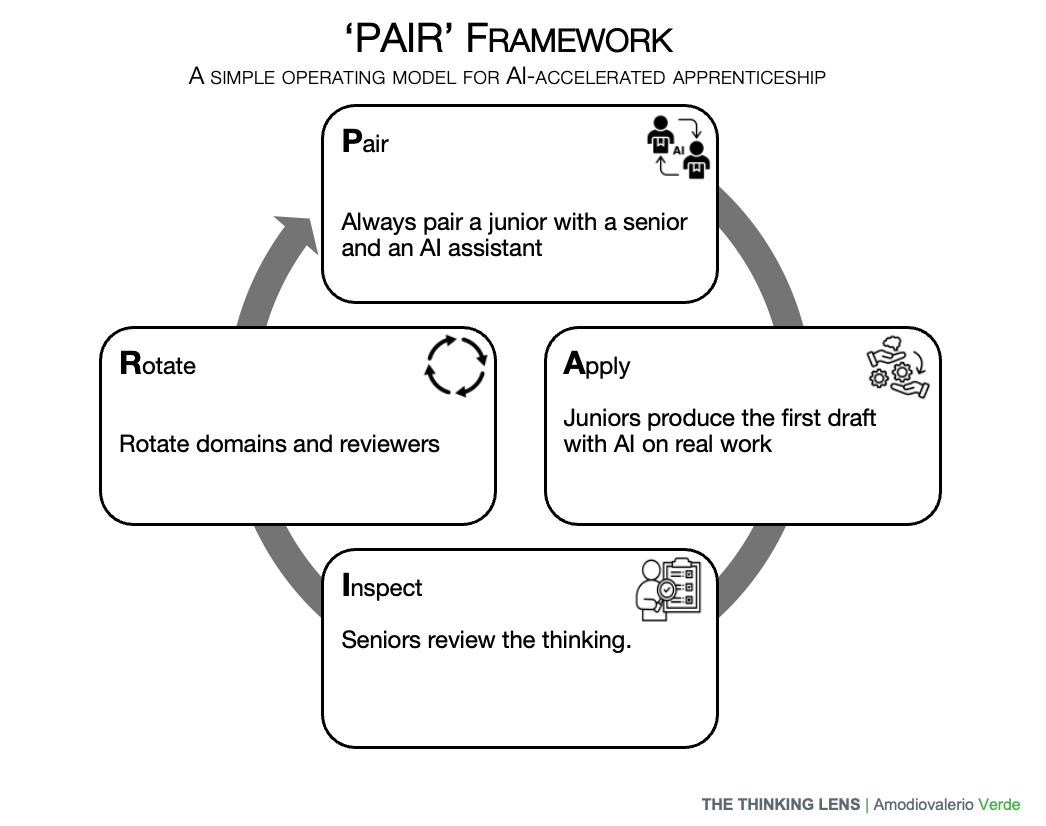

The Operating System: PAIR

To make this model work, you don’t need a new suite of tools or a complex process re-engineering project. One way to explore the trade-offs is a simple loop I use called PAIR.

Think of it as a lightweight wrapper around your existing rituals (sprint planning, stand-ups, and reviews). Its goal is to accelerate the growth of junior talent without compromising quality or speed. At its core is one simple rule: treat AI like driver-assist, not autopilot. Human judgment must remain accountable for the outcome.

The loop consists of four stages:

P - Pair

- Purpose: To establish a clear and focused working triangle: one junior, one senior, and an AI assistant. This initial alignment is the most critical step.

- Process: The senior prepares a mini-brief for the task. This isn’t a 10-page document; it’s a one-paragraph problem statement with clear constraints, acceptance criteria, and a defined risk tier.

- Key Action: The senior clarifies the problem, the desired outcome, and the non-goals. They confirm the AI guardrails (e.g., which data sources are allowed, no customer PII) and the definition of "good" for the first draft.

- Anti-Pattern to Avoid: The vague, "figure it out" brief that outsources the problem-framing itself. This leads to wasted cycles and frustration.

A - Apply

- Purpose: The junior produces the first draft with AI assistance, but on real work, not isolated sandbox tasks.

- Process: Following the brief, the junior uses AI to accelerate the work: drafting code, summarizing research, generating design variants. Crucially, they must maintain a clean prompt-and-rationale log: what they asked the AI, why they asked it, what the output was, and how they refined it.

- Key Action: The junior prepares the draft for review, along with a self-review checklist and a short note on where they believe the biggest risks or weaknesses are. They should present options and trade-offs, not just a single, unedited AI output.

- Anti-Pattern to Avoid: Copy-pasting raw AI output without refinement or critical thought. The goal is augmentation, not abdication.
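If it helps to make the prompt-and-rationale log concrete, here is a minimal sketch in Python. It assumes one record per prompt with the four things named above (what was asked, why, the output, and the refinement). The class name, field names, and the sample entries are my own illustrations, not a standard format.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class PromptLogEntry:
    """One row of a prompt-and-rationale log (field names are illustrative)."""
    asked: str        # what the junior asked the AI
    why: str          # why they asked it
    output: str       # what came back, or a link to it
    refinement: str   # how they changed or rejected the output
    logged_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def is_reviewable(self) -> bool:
        # An entry with a blank field gives the senior nothing to inspect.
        fields = (self.asked, self.why, self.output, self.refinement)
        return all(text.strip() for text in fields)


def unreviewable(log: list[PromptLogEntry]) -> list[int]:
    """Return the positions of entries a senior cannot meaningfully inspect."""
    return [i for i, entry in enumerate(log) if not entry.is_reviewable()]


# Hypothetical sample data: the second entry is raw copy-paste with no rationale.
log = [
    PromptLogEntry(
        asked="Draft a retry wrapper for the billing client",
        why="The brief lists flaky network calls as the main risk",
        output="Generated wrapper with a fixed 1s sleep",
        refinement="Replaced the fixed sleep with capped exponential backoff",
    ),
    PromptLogEntry(asked="Write unit tests", why="", output="12 tests", refinement=""),
]
print(unreviewable(log))  # [1]
```

A spreadsheet or a markdown file does the same job. What matters is that the "why" and the "refinement" are never blank when the draft reaches the Inspect stage.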

I - Inspect

- Purpose: The senior reviews the junior's thinking, not just the surface-level artifact. You’re not just debugging the output; you’re debugging the reasoning. The goal is to improve their personal thinking system, which is the ultimate force multiplier for the team.

- Process: The review focuses on the rationale documented in the prompt log and the trade-offs the junior considered. This is where real coaching happens.

- Key Questions: Instead of "Is this correct?", the senior asks: "Which assumption did you test first, and why?", "What did the AI miss, and why do you think that is?", and "If this fails in production, what is our rollback plan?"

- Key Action: The senior makes a clear decision: approve, request specific changes with a time box, or escalate a risk. Every review should also generate at least one learning that can be added to the team’s shared library of patterns or checklists.

- Anti-Pattern to Avoid: Surface-level edits that fix the output but ignore the flawed reasoning that created it. This is "review debt".

R - Rotate

- Purpose: To expand the junior’s judgment, avoid cloning a single working style, and distribute knowledge across the team.

- Process: Rotate mentors or problem areas regularly to avoid single-style cloning. In small teams, swap reviewers every 2–3 cycles or run a weekly peer review hour instead of formal rotations. This prevents mentorship from becoming a single point of failure and exposes juniors to diverse perspectives and problem spaces.

- Key Action: Rotations are managed by team leadership with a clear handover process to transfer context and highlight open risks. The rotation map should be visible to the team, ensuring the process is deliberate, not chaotic.

- Anti-Pattern to Avoid: Static pairing that lasts for months. This creates knowledge silos and limits the junior’s growth by exposing them to only one mentor’s habits and biases.
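A visible rotation map can be as simple as a generated table. The sketch below is one possible way to produce it, assuming a plain round-robin that swaps reviewers every two cycles. The function name, the `swap_every` parameter, and the people are hypothetical.

```python
def rotation_map(juniors, seniors, cycles, swap_every=2):
    """Pair each junior with a senior for every cycle.

    The pairing shifts by one senior every `swap_every` cycles, so no
    junior keeps the same reviewer for long. All names are illustrative.
    """
    if swap_every < 1 or not seniors:
        raise ValueError("need at least one senior and swap_every >= 1")
    plan = []
    for cycle in range(cycles):
        shift = cycle // swap_every  # how many swaps have happened so far
        plan.append({
            junior: seniors[(index + shift) % len(seniors)]
            for index, junior in enumerate(juniors)
        })
    return plan


plan = rotation_map(["Ana", "Ben"], ["Priya", "Sam", "Lee"], cycles=6)
for cycle, pairs in enumerate(plan, start=1):
    print(cycle, pairs)
```

With two juniors and three seniors, each junior works with every senior once across six cycles. A round-robin ignores problem areas and availability, so treat the output as a starting draft that team leadership adjusts by hand.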

From Shadow to Multiplier: A Rubric for Growth and Independence

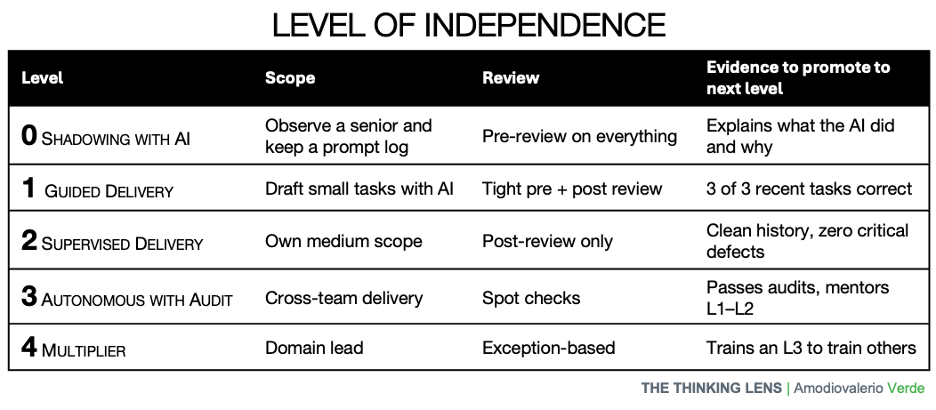

A system like PAIR is only effective if it’s connected to a clear, visible path for growth. This rubric defines five levels of independence, providing a transparent framework.

It’s designed to be tracked per skill, not as a single monolithic grade. A junior might be at Level 2 for technical delivery but at Level 1 for stakeholder communication. This allows for targeted coaching and recognizes that growth is not always linear.
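Tracking per skill can be sketched as a small mapping from skill to level. This is only an illustration, assuming the five level names defined below and two made-up skills; `coaching_focus` is a hypothetical helper, not part of the rubric.

```python
from enum import IntEnum


class Level(IntEnum):
    """The five levels of independence, numbered as in the rubric."""
    SHADOWING = 0
    GUIDED = 1
    SUPERVISED = 2
    AUTONOMOUS = 3
    MULTIPLIER = 4


# One person, tracked per skill rather than with a single grade.
profile = {
    "technical delivery": Level.SUPERVISED,
    "stakeholder communication": Level.GUIDED,
}


def coaching_focus(profile):
    """Return the skills sitting at the person's lowest level."""
    lowest = min(profile.values())
    return sorted(skill for skill, level in profile.items() if level == lowest)


print(coaching_focus(profile))  # ['stakeholder communication']
```

Keeping the levels as ordered integers makes the later metrics easy to compute, for example counting who is at Level 3 or higher.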

Let's break down what each level looks like in practice.

Level 0: Shadowing with AI

- Definition: The individual observes a senior mentor executing a task, keeping a detailed log of the prompts used and the rationale behind them. Their job is not to produce, but to deconstruct and understand the workflow.

- What Good Looks Like: They can explain, in plain language, what the AI is doing, why a senior chose a specific approach, and what the model’s limitations are. They maintain a clean and well-documented prompt log.

- Evidence to Promote: They can present a short demo to the team explaining the workflow they observed, including the trade-offs the senior made. They show a reliable prompt log for at least two weeks.

Level 1: Guided Delivery

- Definition: The junior now produces first drafts for small, low-risk tasks using AI, but follows a tight outline provided by the senior. The senior performs a rigorous review of every deliverable.

- What Good Looks Like: The junior flags risks early, asks targeted questions, and presents the AI-assisted draft with clear annotations on what was changed and why. They can defend the trade-offs made in the work.

- Evidence to Promote: They consistently deliver drafts that require two or fewer review iterations to reach acceptable quality.

Level 2: Supervised Delivery

- Definition: The junior now proposes their own approach for medium-scope tasks, identifies risks, and uses AI to accelerate the work. The senior shifts to a post-review model, inspecting the work only after it’s complete.

- What Good Looks Like: They start with a clear problem statement and a plan. They use AI to accelerate research and scaffolding, not to outsource thinking. Their work is delivered with a clear test plan and success metrics.

- Evidence to Promote: They successfully deliver two medium-risk items with limited review and zero critical defects.

Level 3: Autonomous with Audit

- Definition: The individual now owns work end-to-end, including cross-team dependencies and communication. The senior’s role shifts from reviewer to auditor, conducting periodic spot checks on quality, ethics, and maintainability.

- What Good Looks Like: They proactively manage stakeholders, design the guardrails for their own AI usage, and bring in the right reviewers at the right time. Their work is accompanied by clear decision records and a post-launch review mapping outcomes to initial goals.

- Evidence to Promote: They demonstrate repeatable success across different domains and begin mentoring juniors at Levels 1 and 2.

Level 4: Multiplier

- Definition: The individual’s primary focus shifts from delivering work to scaling the team’s capability. They teach Levels 0–2, codify best practices, and improve the team’s overall system.

- What Good Looks Like: They are the ones improving the checklists, refining the prompt patterns, and evolving the AI guardrails. They actively coach others and measure their mentees’ progression through the levels.

- The Final Step: The loop closes here.

The final step for a junior is to train a junior. This is how you create a self-sustaining system for leadership development.

The Guardrails: Operating Safely in High-Stakes Environments

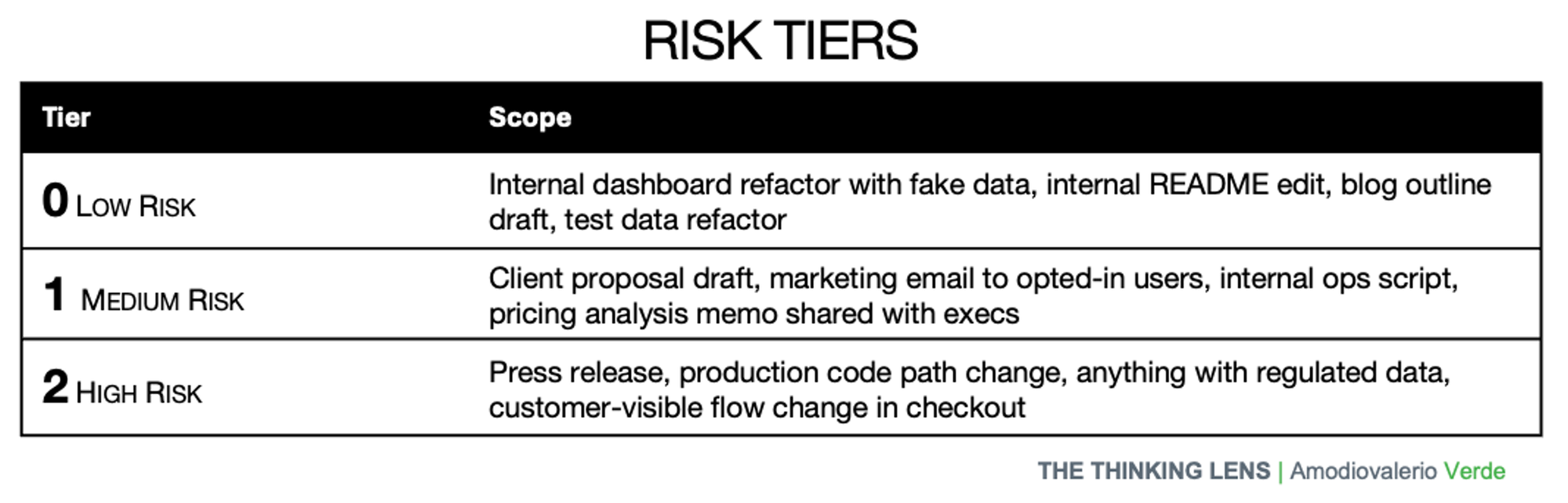

If you let juniors work on real items, consider proportional guardrails. Examples below are generic. Leaders should adapt them to their organization’s policies and applicable law.

1. Standards, Templates, and Checklists

- Definition of Done: Every task, especially those assisted by AI, must have a clear, pre-defined "Definition of Done" that includes quality checks and review gates.

- Review Checklists: Seniors should use a standardized checklist for reviews. This ensures consistency and helps train juniors on what to look for. The checklist should include items like "Was the prompt log clear?" and "Were ethical risks considered?"

- Prompt Patterns & The Red-Flag List: Maintain a library of approved, high-quality prompt patterns for common tasks. Alongside it, keep a "red-flag list" of prompts or data types that are strictly forbidden (e.g., entering customer PII into a public LLM).

2. Source Control for Thinking

- Auditability: Make work auditable, proportional to risk. For high-risk items, if meaningful review is not possible, treat the work as incomplete. For low-risk tasks, a short note that links to key prompts and outputs is enough.

3. Data and IP Hygiene

- The Model-Access Matrix: Establish clear model access boundaries that align with your organization’s data classification and vendor-risk processes.

- Clear Practices: Teams should follow company policy and applicable law when handling sensitive information. Document the do’s and don’ts, teach them, and confirm they are followed.

- Note for EU contexts: account for works councils, data residency, processor locations, and DPIAs before tooling changes.

4. The Human-in-the-Loop Imperative

- No Autopilot for High-Risk Work: For customer-facing, business-critical, or high-risk work, keep a human-in-the-loop until the individual demonstrates consistent quality. Periodic reviews remain appropriate.

- AI Does Not Decide: AI can draft, scaffold, and summarize. It does not approve or take accountability; a human does.

When Seniors-only + AI is appropriate

Use Seniors-only + AI for clearly high-risk or time-critical work:

- Production incidents, security events, data-loss prevention.

- Regulated or safety-critical changes where review cycles are mandated.

- Executive escalations or urgent customer commitments with contractual impact.

- Highly sensitive IP, M&A, legal discovery, export-controlled topics.

- Hard deadlines where onboarding a junior would jeopardize the outcome.

For startups and scaleups

In blitz-growth periods you may choose Seniors-only on critical paths to hit market windows. Do it knowingly, document the debt, and plan fast payback with PAIR once the window closes.

Why this cannot be the steady state:

- Pipeline risk: juniors do not progress, hiring yield falls, succession stalls.

- Concentration risk: knowledge and decisions cluster on a few seniors; single-point-of-failure grows.

- Burnout and quality drift: review time compresses, defects rise.

Minimum viable guardrails for small teams

- Do not enter customer or partner data, secrets, or regulated information into public AI tools.

- Keep a short decision note with links to prompts and outputs when risk is non-trivial.

- Require human review on customer-visible changes until a person has demonstrated consistent quality.

- Use a simple data-use rule of thumb: public data is OK, internal data by exception, sensitive data never.

- Align with your organization’s data classification, retention, regional rules, and third-party risk processes. When in doubt, don’t paste.
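The data-use rule of thumb above is small enough to write down as a check. This is a sketch under my own assumptions: three data classes, a boolean for an approved exception, and unclassified data treated as "don’t paste". Your organization’s real classification scheme takes precedence.

```python
from enum import Enum
from typing import Optional


class DataClass(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    SENSITIVE = "sensitive"


def may_paste(data_class: Optional[DataClass], has_exception: bool = False) -> bool:
    """Rule of thumb: public is OK, internal by exception, sensitive never.

    `None` stands for data nobody has classified yet. When in doubt, don't paste.
    """
    if data_class is DataClass.PUBLIC:
        return True
    if data_class is DataClass.INTERNAL:
        return has_exception
    # Sensitive or unclassified: an exception flag does not change the answer.
    return False


print(may_paste(DataClass.PUBLIC))                         # True
print(may_paste(DataClass.INTERNAL))                       # False
print(may_paste(DataClass.INTERNAL, has_exception=True))   # True
print(may_paste(DataClass.SENSITIVE, has_exception=True))  # False
print(may_paste(None))                                     # False
```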

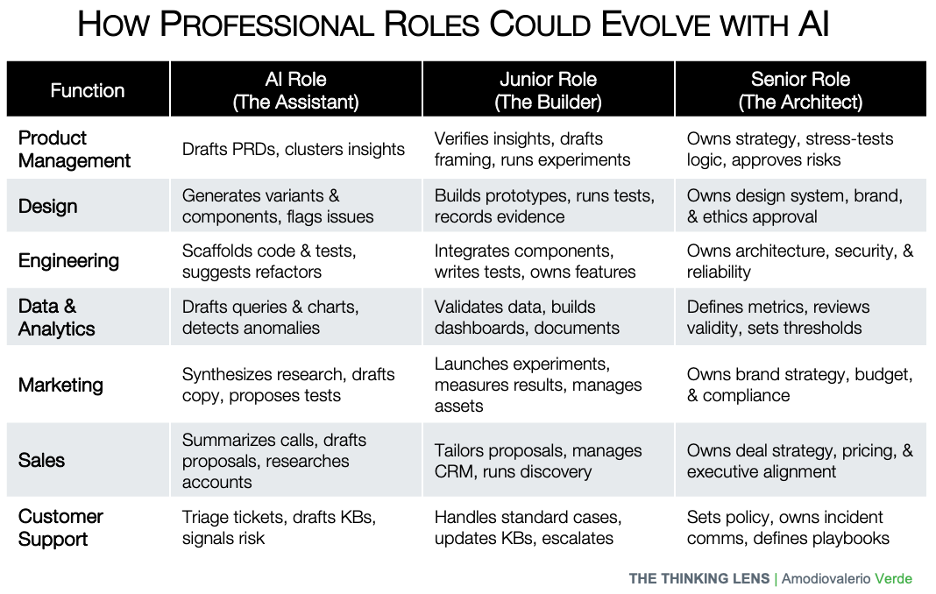

Putting It Into Practice: How roles could evolve (examples)

When these guardrails are in place, the nature of work changes across every function. The focus shifts from low-level execution to high-level reasoning and strategy.

Cross-role practices

- Juniors keep prompt logs and evidence.

- Seniors review the thinking and sign off on high-risk outputs.

- AI handles boilerplate and assists with options and checks.

- No customer or personal data goes to public models.

- Use evals to track quality and drift.

Common Traps and How to Avoid Them (Anti-Patterns)

Even a well-designed system can fail if you're not vigilant. Watch for these common anti-patterns, which are signs that you are slipping from a capability-building model back into a simple output factory:

- The "Seniors-Only + AI" Model: This is the most tempting trap. It looks efficient, but it hollows out your talent pipeline and guarantees a leadership vacuum in the future.

- Prompt-Only Workflows: This happens when juniors are only taught to paste pre-written prompts and are never required to learn the reasoning behind them. They learn to operate the tool, not to think with it. You need to grow thinkers, not operators.

- Invisible "Shadow Work": This occurs when mentorship and reviews are treated as informal, unrewarded tasks. This leads to burnout and signals to the organization that teaching isn't valued.

- Accumulating "Review Debt": This is the habit of fixing a junior's mistakes on the surface but never addressing the flawed thinking that created them. You’re fixing the symptoms, not the cause. This happens more often than you might imagine.

Measuring What Matters: KPIs for a Resilient Team

If you pilot this, track a small set of metrics. Your dashboard should reflect the health and growth of your team's capabilities. Review the numbers monthly and make the trends visible to everyone.

- Time to Independence: The average time it takes for a junior to advance from Level 0 to Level 2 for a specific, defined skill. This is your core metric for learning velocity.

- Review Load Trend: The number of hours a senior spends per month reviewing a junior's work. This number should be highest at the start and trend downward over time as competence and trust increase.

- Defect Escape Rate: The number of issues or errors found in work after it has been released, segmented by the level of the individual who produced it.

- Bench Strength: The ratio of team members at Level 3 or higher compared to the total team size. Your goal is to see this trend up and to the right.

- Impact, Not Velocity: Most importantly, tie the team's work to real business outcomes like adoption, retention, or risk reduction. Shipping faster is not the same as delivering value.
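The first four metrics reduce to simple arithmetic. Here is a minimal sketch, with several assumptions of mine labeled in the comments: time is measured in days, the review-load trend is the average month-over-month change, and the escape rate is normalized per released item. The function names and sample numbers are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def time_to_independence(days_level0_to_level2):
    """Average days from Level 0 to Level 2 for one defined skill."""
    return mean(days_level0_to_level2)


def review_load_trend(hours_per_month):
    """Average month-over-month change in review hours (negative is good).

    Assumption: a simple first-to-last difference, not a fitted trend line.
    """
    if len(hours_per_month) < 2:
        raise ValueError("need at least two months of data")
    return (hours_per_month[-1] - hours_per_month[0]) / (len(hours_per_month) - 1)


def defect_escape_rate(items):
    """Escaped defects per released item, segmented by the author's level.

    `items` is a list of (author_level, escaped_defects) pairs, one per
    released item. Normalizing per item is an assumption of this sketch.
    """
    totals = defaultdict(lambda: [0, 0])  # level -> [defects, item count]
    for level, defects in items:
        totals[level][0] += defects
        totals[level][1] += 1
    return {level: d / n for level, (d, n) in sorted(totals.items())}


def bench_strength(levels):
    """Share of team members at Level 3 or higher."""
    return sum(1 for level in levels if level >= 3) / len(levels)


print(review_load_trend([20, 16, 14, 11]))   # -3.0
print(bench_strength([0, 1, 2, 3, 3, 4]))    # 0.5
print(defect_escape_rate([(1, 2), (1, 0), (2, 1), (3, 0)]))
```

The fifth metric, impact, does not fit in a formula like these. It depends on which business outcome you tie the work to, so it stays a judgment call.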

Final Thought: Make Teaching a First-Class Job

If your organization plans to let seniors use AI and skip hiring juniors, you will likely see faster delivery in the near term. This optimizes for short-term throughput and shifts risk to later phases. The predictable long-term effects are a hollowed-out bench, burned-out senior talent, and a leadership vacuum that becomes visible only when it is costly to fix.

Mentorship is not charity or "extra work." In this new era, it is a core business function. To make this model succeed, you must make teaching a first-class job. That means making it a formal part of a senior’s responsibilities, rewarding it publicly, and tying it to their performance goals. Promote the people who multiply others.

The future of your organization won't be secured by the efficiency of your AI copilots, but by the resilience of the leaders you build today. It's time to preserve and build your bench. Start building it with intention. Use AI as leverage, not as a license to stop investing in people.

Start with one team. Run the PAIR loop. Inspect the thinking. Rotate the work. Measure independence. Repeat. That is how you scale.

If you believe juniors are optional now, I want to hear the case. My bet is simple: apprenticeships scale judgment; AI accelerates it. We need both.

If you enjoyed this article and you want more like this, subscribe to The Thinking Lens newsletter.

PAQs – Potentially Asked Questions

Isn’t this model slower, especially at the beginning?

At first, yes. You are making a deliberate investment in capability, not just chasing short-term output. The initial phase requires seniors to invest time in pairing and reviews. However, this is a front-loaded cost. Speed and leverage return once juniors hit Level 2 autonomy. After that, you get both speed and resilience, which is a far greater long-term advantage.

How do you maintain quality and manage risk with juniors on real work?

This is precisely what the guardrails are for. You install clear quality gates, mandatory review checklists, and auditable decision logs. Human review remains required for high-risk work until a junior has proven their competence and earned Level 3 status. You don't trade safety for speed; you create a system where juniors can learn safely.

Our seniors are already overloaded. How can they find time for more reviews?

This is the most common and most legitimate objection. If your seniors are overloaded, you don’t have a time management problem; you have a traffic problem. Adding more cars to a jammed highway doesn’t help. You need to remove some cars or build a new lane. This is a leadership call: either you protect time for mentorship by reducing scope elsewhere, or you accept the long-term cost of review debt and a hollowed-out team.

What if our senior team members resist teaching?

This often happens when mentorship is treated as unrewarded "shadow work." The solution is to make it a formal, visible, and rewarded part of their role. Track mentorship as a recognized responsibility. Prefer team-level trends over individual scoring. Rotate reviewers to share the load. And ultimately, promote the leaders who have a proven track record of multiplying the talent around them.

Will relying on AI for first drafts make juniors lazy or prevent them from learning the fundamentals?

Only if you let it. The PAIR system is designed to prevent this. By requiring juniors to maintain a prompt-and-rationale log and defend their thinking during inspections, you force them to engage critically with the AI’s output. The goal is to reward reasoning, not just the final artifact. Some "grunt work" is just busywork; other parts are essential practice. The senior's job is to know the difference.

With AI automating 'grunt work,' isn't the traditional apprenticeship model obsolete? Aren't you just trying to preserve an old paradigm?

The nature of foundational work is absolutely changing, and we shouldn't try to preserve tasks that are better handled by machines. However, this model isn't designed to preserve obsolete tasks; it's designed to accelerate the development of timeless skills. My contention is that while the tasks change, the fundamental need for developing human judgment through guided practice on real work does not. Judgment isn't about knowing the right syntax; it's about navigating ambiguity, weighing trade-offs, and taking accountability for an outcome. Those are things an AI cannot do.