LLMs, Longform, and Low-Code: What Worked (and Broke) in Real-World Tests

LLMs aid with tight, scoped tasks: cut redundancy, multipass feedback, summary maps, brainstorming. But they struggle with long inputs, continuity and consistent voice. Useful for scaffolding and agents, not autopilot. Keep humans on structure, logic and style. Use models to spot patterns.

We talk a lot about what LLMs can do. But when you're deep into a real project — like reviewing a 400-page book or building tools around it — the cracks start to show. That’s when the practical limitations (and the underlying reasons) become obvious.

I tested ChatGPT, Claude, Gemini and other tools like Base44, Gamma and others across demanding workflows. I didn’t use them to write the book — but to analyze it, cut bloat, test structure, and brainstorm visuals. Think of them as a tireless (if flawed) developmental editor’s assistant. I also experimented with AI coding platforms to build companion tools for faster delivery.

Some results were genuinely helpful. Others failed quietly — or loudly. Here’s what worked, what broke, and why.

✅ Where LLMs Deliver Value (If Scoped Well)

1. Focused Editing: Redundancy Detection Works

Asking, “What can I cut from this chapter without losing meaning?” often returns clear, actionable suggestions.

Why: LLMs process input within a “context window” — a fixed memory span. When you give them small, focused content (like one chapter), their pattern recognition is sharp. They can detect semantic overlap and redundancy. But if you overload the input, signal turns into noise.

⚠️ LLMs might think your metaphor is “redundant.” Or that your irony breaks the flow. And just like that — they kill what makes your writing you.

2. One Prompt = Shallow. Multi-Pass Prompts = Real Feedback

A single “assess this chapter” prompt returns generic insights. Instead, I run prompts per lens: structure, tone, clarity. The depth improves instantly.

Why: Asking a model to assess multiple dimensions divides its attention. Decomposing the task lets it optimize for one goal at a time. It’s a simple fix with big impact.

⚠️ Try assessing with at least two different LLMs — it helps you compare outputs and apply stronger reasoning.

3. Summary Maps Make Narrative Analysis Feasible

Feeding the full manuscript into an LLM for narrative evaluation? Useless. But when I create a structured map (chapter summaries, tone shifts, themes, transitions), AI can reason more effectively.

Why: Manuscripts are noisy. Summary maps act as abstraction layers. LLMs are strong at pattern matching — especially with structured input. This gives them a “map view” of the story rather than forcing them to crawl line by line.

⚠️ You may need to track versions manually — any major change means your summary maps should be rebuilt.

4. Visual Brainstorming Helps Break Blocks

Ask: “What kind of visual supports this idea?” and you’ll get good categories: timelines, flows, Venn diagrams. It’s a solid starting point.

Why it’s limited: LLMs are trained on text, not visuals. They associate concepts with common formats (e.g. “process” → “flowchart”), but they don’t understand layout, information hierarchy, or design logic. They suggest the category, not a viable instance of it. Translation still requires human judgment.

⚠️ Specialized tools like Whimsical, Napkin, or Gamma can help — and new ones pop up every day. Don’t default to ChatGPT, Claude, or Gemini alone. Try things. Your use case might need something more focused.

❌ Where It Breaks (And Why)

1. Too Much Input? It Starts Guessing

Feed in long, unfiltered content and the model stops analyzing. It starts assuming. Tone shifts. Logic appears out of nowhere. Hallucinations creep in.

Why (from what I’ve learned): Attention mechanisms weaken over long input. Early details get lost. The model fills gaps with statistically plausible text. It’s not doing deep comprehension — it’s doing pattern continuation. Recent models can handle more context, but performance still degrades beyond 30–50k tokens.

2. They Lose the Big Picture

Even when I split the book into nine parts, the model couldn’t maintain continuity. Themes dropped. Character arcs felt inconsistent. Callbacks were missed. You get smart-sounding feedback within a chunk — but each chunk is an island.

Why: LLMs are stateless. They don’t carry memory across prompts. Unless you manually stitch context across interactions — which is tedious and error-prone — the model forgets what it saw before. There’s work happening in persistent memory and agent frameworks, but it’s early.

3. Visual Suggestions Lack Execution Logic

AI will say, “This could be a relationship map.” But how should it be structured? What are the nodes? What matters most?

Why: LLMs lack visual grounding. They don’t understand spatial logic or user cognition. They match textual patterns. So while they can name diagram types, they can’t reason about how to design one meaningfully. The result sounds smart but lacks depth. (but still can be used as a starting point)

4. Different Models, Different Answers

Use the same prompt across ChatGPT, Claude, and Gemini — even with a defined evaluation framework — and you’ll get inconsistent (sometimes contradictory) feedback.

Why:

- Different training data

- Different alignment processes

- Random sampling in generation

- And often, the model just agrees with the tone of your question

They’re optimized to sound helpful — which sometimes means they mirror your assumptions instead of challenging them.

⚠️ Try telling the model it’s wrong. Odds are, it’ll give you a new answer — quickly dropping the old one to keep you happy. (If only that worked with my wife...) <-- this sentence would be considered bloat and removed by an LLM :D

✍️ Writing Style? Still a Human Job

I tested AI as a rewriting partner. Sometimes it helped tighten things. But often:

- The tone became generic

- Metaphors vanished

- The voice no longer sounded like me

Why: Voice isn’t a filter — it’s a system. It’s rhythm, humor, metaphor, tension, word choice. AI can mimic tone briefly, but it doesn’t hold onto identity. Over time, it regresses to the “mean” — the average internet voice. Voice is identity. AI mimics patterns. It doesn’t own them.

🛠️ AI for Tools: Fast Start, Fragile Logic

Alongside the book, I built lightweight tools — evaluators, diagnostics — using AI low-code platforms like Base44. The pitch is: “Just describe it. We’ll build it.”

What worked:

- Fast UI scaffolding

- Simple logic (if X, then Y)

- Rapid prototyping

Where it broke:

- Forgetting variable names between screens

- Breaking logic paths silently

- Misinterpreting flow or conditions

Why (from my research): AI code generation is pattern-based. It’s great at boilerplate, weak at architecture. These tools don’t reason about state, handle edge cases, or manage flow across components. They don’t build mental models of the app — they autocomplete fragments. You still need human developers for anything robust.

🧠 Agentic AI: The Next Chapter (But Not Autopilot)

Agentic AI is getting attention: tools that don’t just respond to prompts, but act across steps, make decisions, and coordinate tools. Think: interns with hustle.

Where it helps:

- Remembering multi-step flows

- Automating tasks (e.g. summarize chapters, generate visuals)

- Connecting APIs and external tools

Where it still fails:

- Understanding narrative coherence

- Designing anything visual

- Debugging logic in apps

- Preserving writing style

Why: Most agents just stitch LLM calls in loops. They don’t model goals or intent. They lack memory and common sense. When things go off track, they either stall or silently fail — just like early low-code builders.

To use a 90s metaphor: if LLMs are Clippy on steroids, Agentic AI is Office with macros. Useful, yes. Reliable? Not yet.

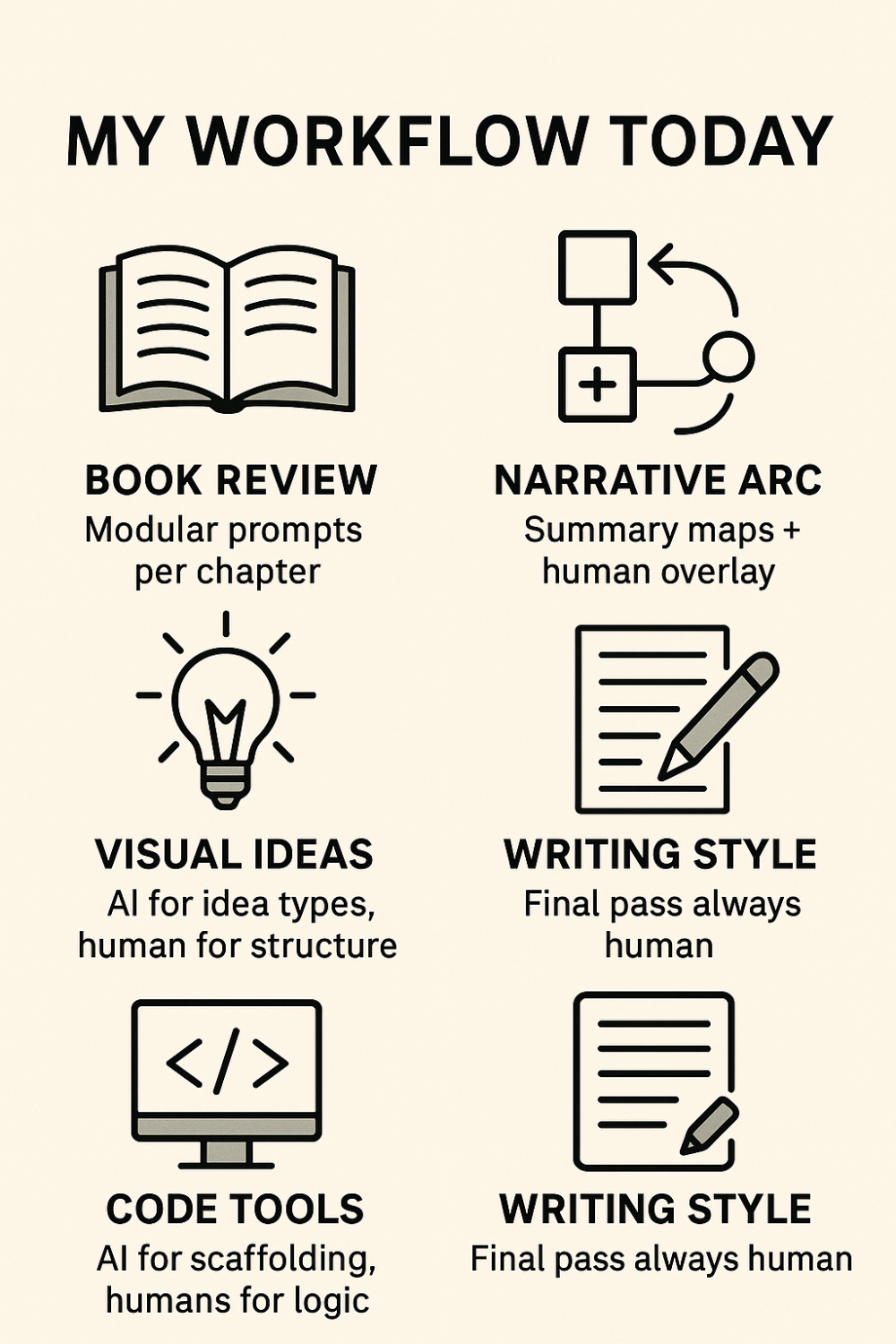

🧭 My Workflow Today

When reviewing a book, I break prompts down per chapter. This keeps the context tight and feedback specific — no sprawling, generic revisions.

To check the narrative arc, I create quick summary maps, then overlay human interpretation. AI helps structure the big picture, but it’s the human layer that makes coherence stick.

For visual ideas, I use AI to explore formats and creative directions — then step in to shape the actual structure. This avoids visual clutter and keeps things purposeful.

With code tools, I lean on AI for fast scaffolding. But logic stays human. That way, I move quickly without compromising clarity or reliability.

When it comes to writing style, the final pass is always human. That’s how I protect tone, voice, and intent — no matter how good the draft sounds.

Across the board, I use multiple models to review. I’m not looking for agreement — I’m looking for patterns. It helps surface bias and sharpens the final result.

💬 What About You?

This reflects my experience — but I’d love to hear yours.

- Have you used LLMs for longform or app development?

- Did they help? Or did you hit the same walls?

- What broke first — style, logic, or context?

- Ever trust an AI output… and find it was confidently wrong?

Let’s move past the demos and talk about the messy, real-world use of these tools.

⚠️ One Last Thought: AI-First or Hype-First?

There’s a rush toward “AI-First.” But here’s the risk:

You move too fast, and you stop thinking. You replace reasoning with statistical completion. You end up with tools that sound smart, but don’t actually help your team make better decisions.

LLMs don’t understand goals. They don’t build models of the world. They predict what’s likely — not what’s right.

The tools are powerful. But strategy, abstraction, and product sense still have to come from us.

💡 Bonus Tip: Use Chat Mode, Not Canvas (Usually)

If you're using ChatGPT for creative or strategic tasks, try using the Chat interface instead of Canvas. In my experience, Chat tends to return richer, more detailed output — while Canvas sometimes feels overly concise or stripped down.

I don’t know exactly why this happens. My guess? Canvas might be optimizing for document structure or brevity by default — which can flatten depth. With Chat, the model seems to prioritize completeness over formatting. If you're doing complex reasoning or longform editing, Chat is often the better space to think things through.