Control, Delegate, or Disappear: Thriving in the Age of AI Agents

Agents aren't UX upgrades. They're decision-makers. Mistaking automation for intelligence is a strategic failure. The shift is from operating tools to governing outcomes. Delegate objectives, embed escalation and governance, or your product becomes invisible in an agent-led economy. Govern it. Now.

Note: This is not a prediction. It’s a scenario leaders should seriously consider.

The IVR Illusion

I still remember the day we installed that IVR system, nearly twenty years ago. At the time, it felt like progress. “Interactive Voice Response”, even the name sounded futuristic. We spent months configuring menus, designing workflows, fine-tuning prompts. A machine handling customer calls? It looked like the future.

Except, it wasn’t.

Let me remind you what customers actually heard:

“Press 1 to make a reservation. Press 2 to retrieve your invoice. Press 3 if you are experiencing technical problems. Press # to speak to someone who might actually help.”

It didn’t take long for the verdict to arrive, from both customers and frontline staff alike:

“This isn’t interactive. It’s frustrating. Bring back human support.”

And they were right. What we built wasn’t intelligence: it was a glorified switchboard pretending to be one. It didn’t solve problems; it routed them. It didn’t answer questions; it delayed them.

Years later, we repeated the mistake with early chatbots. Great marketing, poor delivery. The only thing they reliably automated was customer disappointment. Then came assistants that struggled with accents, “AI-powered” FAQs that answered the wrong questions, and bots that required human intervention to function at all.

Or home voice assistants that forced users to repeat the same request ten times before giving up.

Why does this matter today? Because many companies are about to make the same conceptual error, only on a larger scale. They’re mistaking automation for intelligence. They’re confusing task completion with decision-making.

This isn’t about building faster interfaces.

It’s about who (or what) is making operational decisions inside your systems.

And too many are underestimating how real AI agents, capable of reasoning, planning, and acting, will reshape not just interfaces, but the entire human-machine relationship.

This is not a UX upgrade. It’s a structural shift.

The systems you’re building, buying, and deploying aren’t tools anymore. They’re decision-makers. If you don’t grasp that shift, you’re not just at risk of falling behind. You’re at risk of becoming irrelevant.

Whether AI agents evolve into autonomous decision-makers or remain controlled tools will depend entirely on how companies frame and govern their adoption.

The Conceptual Error: Why Automation Does Not Equal Intelligence

Many technological shifts begin with the same mistake: confusing automation with intelligence.

IVR automated the wrong tasks. Chatbots mimicked conversation without understanding. Generative AI now produces fluent text and images, but that’s not reasoning.

Fluent language output is not depth of understanding. Output is not intelligence.

Let me be explicit: Automation completes tasks. Intelligence reasons about outcomes.

Treating them as equivalent is not a minor misunderstanding. It is a strategic and operational failure.

The Fallacy of Better Interfaces

Here’s the real trap: companies think agents are just better chatbots. They’re not.

Agents aren’t an interface upgrade. They represent a paradigm shift.

Unlike chatbots, agents don’t wait for instructions. They decide how to achieve objectives. The human-machine dynamic shifts from issuing commands to delegating outcomes.

If your thinking is still centered around workflows and dashboards, you’re operating at the wrong level.

Many describe AI agents as orchestrators. That’s accurate but incomplete.

Orchestration describes execution. Agentic AI defines decision-making.

True agents don’t just sequence tasks. They reason, plan, and adapt. They interpret objectives, not scripts. That distinction matters: orchestration without reasoning is just automation.

The real shift is this: you’re no longer building software to execute commands. You’re building systems that make decisions, within governance, control, and escalation frameworks embedded by design.

Your software isn’t a tool anymore. It’s a decision-maker.

But one that must be supervised, constrained, and governed from day one.

The Digital Proxy Clarification

When I say Personal Assistant, I don’t mean a scheduling bot or a glorified to-do list.

I mean a digital proxy: a system that learns your goals, constraints, and preferences, and acts independently to serve them. Your AI twin.

Whether it’s rescheduling your flight, optimizing your calendar, managing your finances, or orchestrating your family’s logistics, this isn’t software you command. It’s a system acting in your place, across contexts.

In short: your assistant isn’t a tool. It’s you, digitally extended.

This is a power shift: from human-controlled processes to agent-controlled execution.

Here’s the structural truth: AI agents redefine who controls the “how.”

- In automation, humans control the process.

- In the agent economy, humans control the objective. But agents decide the process.

This flips the hierarchy. The machine isn’t your tool anymore. It’s your delegate.

But here’s the twist: delegation without controlled escalation isn’t autonomy. It’s abdication.

And that’s the critical risk most organizations are missing.

So what happens next?

To answer that, you need to rethink human-machine interaction not as operating tools, but as governing outcomes.

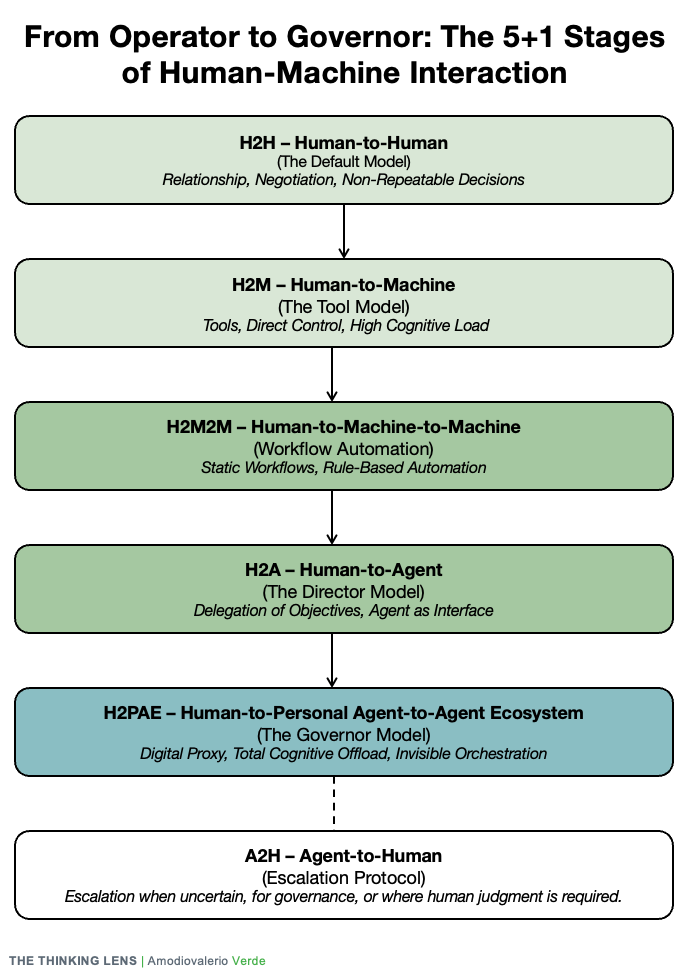

And that journey? It unfolds in five stages plus one we can’t afford to ignore.

Let’s unpack them.

The 5+1 Stages of Human-Machine Interaction

From Operator to Governor: A New Human Role Emerges

For decades, interaction followed two simple models: humans interacted with other humans (H2H), or humans operated machines (H2M).

The rise of AI agents doesn’t introduce a new tool. It introduces a new human role. From Operators to Architects, from Directors to Governors, this shift is no longer hypothetical. It’s operational.

Consider this not as a framework, but as a thinking lens for understanding the change.

Stage 1: H2H = Human-to-Human (The Default Model)

Humans interact directly. Conversations are unstructured, nuanced, and context-rich. Territory only humans can navigate, for now.

- Strategic Role: Relationship building, complex negotiations, non-repeatable decisions.

- Limitations: High cost, zero scalability.

- Insight: AI will augment, not replace, human-to-human interactions. Think transcription, summarization, translation. But your top negotiator? Still human.

Stage 2: H2M = Human-to-Machine (The Tool Model)

Humans as Operators. Machines as passive tools awaiting explicit commands.

- Strengths: Precision at scale, complete human control.

- Weaknesses: High cognitive load; users must learn the tool, not just solve the problem.

- Insight: The Operator model is collapsing. Users no longer want dashboards. They want outcomes.

Stage 3: H2M2M = Human-to-Machine-to-Machine (Workflow Automation)

Humans become Architects. We design static workflows; machines execute.

- Examples: Zapier, IFTTT, marketing automation.

- Strategic Advantage: Automates repetitive tasks, delivers operational efficiency.

- Fragility: Static logic breaks easily, lacks adaptability, and still requires oversight.

- Strategic Risk: Static automation will be outperformed or replaced. If your automation is static, it’s already vulnerable.

Stage 4: H2A = Human-to-Agent (The Director Model)

This is the pivot point. Humans evolve into Directors. You no longer instruct; you delegate.

Agents become the interface.

Like onboarding a junior analyst: they’re capable, imperfect, and increasingly autonomous.

- Strategic Shift: Delegate objectives, not steps. Agents reason, learn, and optimize.

- Risks: Hallucinations, black-box decisions, compliance and security exposure.

- Insight: This stage rewrites product design. Your tool isn’t operated. It’s queried. Delegation replaces instruction. Your interface? It’s no longer a screen. It’s a conversation.

Stage 5: H2PAE = Human-to-Personal Agent-to-Agent Ecosystem (The Governor Model)

Now, humans ascend to Governors.

You no longer manage agents directly. You manage your Personal Agent (PA) – your digital proxy, your operational twin. It understands your goals, constraints, and context.

You express outcomes. Your PA orchestrates the solution.

But reality won’t look like a single clean interface. It will resemble a diplomatic council. Your PA negotiates with a federation of agents from different vendors.

The strategic prize? Not just becoming the user’s agent, but becoming the agent that other agents must defer to.

A successful PA must also understand that humans are not single operational entities. It must respect contextual boundaries: data from your professional life must remain siloed from your personal life unless explicitly authorized. An assistant that optimizes across these boundaries without consent isn’t intelligent. It’s invasive.
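To make the boundary rule concrete, here is a minimal sketch of a siloed store in which a task running in one context can read another context's data only after explicit consent. The names `Context`, `ContextualStore`, `authorize`, and `get` are hypothetical, invented for illustration; a real personal agent would need far richer consent and revocation handling.

```python
from dataclasses import dataclass, field
from enum import Enum


class Context(Enum):
    PROFESSIONAL = "professional"
    PERSONAL = "personal"


@dataclass
class ContextualStore:
    """Keeps each context's data in its own silo (illustrative only)."""
    _silos: dict = field(default_factory=lambda: {c: {} for c in Context})
    # (reader, owner) pairs the human has explicitly authorized.
    _grants: set = field(default_factory=set)

    def put(self, context, key, value):
        self._silos[context][key] = value

    def authorize(self, reader, owner):
        """Record explicit consent for `reader` tasks to see `owner` data."""
        self._grants.add((reader, owner))

    def get(self, acting_in, owner, key):
        """Read `key` from `owner`'s silo for a task running in `acting_in`."""
        if acting_in != owner and (acting_in, owner) not in self._grants:
            raise PermissionError(
                f"{acting_in.value} task may not read {owner.value} data without consent"
            )
        return self._silos[owner][key]
```

The design choice is that crossing a boundary fails by default: the assistant has to be granted access, rather than having to be told to stay out.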

- Example Command: “Optimize next month’s inventory orders within budget, notify suppliers, and prepare compliance reports.”

- Your Role as Governor: Define objectives, set constraints, oversee escalations.

- The PA’s Role: Coordinate multiple agents invisibly.

- Strategic Prize: Delegation of “how” to the PA; optimal cognitive load, not just total offload. Delegate repetitive tasks, retain oversight where judgment matters.

- Strategic Risks: Vendor lock-in, privacy erosion, governance complexity.

- Strategic Imperative: Owning the PA layer is the ultimate prize. If your product is accessed via someone else’s assistant, you’ve lost the customer interface and likely your brand.

Stage +1: A2H = The Agent-to-Human Escalation Protocol

In delegated ecosystems, autonomy isn’t enough. What matters is controlled delegation.

Agents must know when to escalate:

- When confidence is low.

- When ethical ambiguity arises.

- When human judgment is required.

Without an A2H escalation protocol, agents don’t delegate. They gamble.

And in mission-critical environments, gambling isn’t autonomy. It’s operational risk.
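The three triggers above can be sketched as a single check that runs before an agent acts. This is an assumption-laden illustration, not a standard: `ProposedAction`, `a2h_check`, and the 0.8 confidence threshold are hypothetical, and in practice the flags would come from the agent's own self-assessment and from policy rules.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class ProposedAction:
    description: str
    confidence: float                  # agent's own estimate, 0.0 to 1.0
    ethically_ambiguous: bool = False
    needs_human_judgment: bool = False


def a2h_check(action: ProposedAction, min_confidence: float = 0.8):
    """Return (decision, reasons). Any single trigger is enough to escalate."""
    reasons = []
    if action.confidence < min_confidence:
        reasons.append("low confidence")
    if action.ethically_ambiguous:
        reasons.append("ethical ambiguity")
    if action.needs_human_judgment:
        reasons.append("human judgment required")
    decision = Decision.ESCALATE if reasons else Decision.PROCEED
    return decision, reasons
```

Returning the reasons alongside the decision matters: the human receiving the escalation should see why control came back, not just that it did.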

Five stages. One critical safeguard.

Together, they redefine not just product design, but your organizational operating model.

Next, we’ll unpack the strategic implications of this shift.

From Customer-Centric to Assistant-Centric

For years, companies have claimed to be customer-centric. But AI agents change what that actually means.

In an agent-powered future scenario, customers no longer interact directly with your product. Their personal assistant does.

The assistant becomes the primary interface, not your app, not your website, not your UX.

- Customers express goals.

- Their assistant handles execution.

This creates a new reality:

- Your interface doesn’t matter. The assistant interacts, not the human.

- Your workflows don’t matter. The assistant decides how tasks are executed.

- Your brand is changing. It will be defined less by marketing and more by measurable service performance. Assistants choose the best-performing service, not the best-known.

A Simple Example

Lisa says:

“Aura, finalize next week’s offsite. Prioritize venues with top sustainability ratings and use my preferred airline, even if it’s not the cheapest. Block Thursday morning for my daughter’s recital.”

In the background, Aura:

- Confirms venue and catering within policy constraints.

- Coordinates travel and accommodations for attendees.

- Books courier delivery for prototypes.

- Blocks Thursday morning as personal time and reschedules two meetings.

- Orders flowers for the recital, based on past preferences.

- Adjusts workload forecasts and notifies Lisa’s team of reduced availability.

- Summarizes all actions in a single update:

“Offsite finalized. Thursday morning marked as personal. Team informed. Flowers ordered. Anything else?”

Lisa never switches apps. Never checks dashboards. Tasks move forward. Focus stays on decisions that matter.

This is the shift. You are no longer building products for humans. You’re building services for personal assistants.

In practical terms, your product must deliver measurable outcomes, with maximum reliability, security, and efficiency. Otherwise, the assistant will bypass you. No human customer will notice. No one will ask why.

This isn’t invisibility. It’s transparency.

If your product isn’t the best component for the task, the assistant will route around you.

In this context, customer-centricity means solving the customer’s goal, even if the customer never learns your name.

And remember: personal and professional boundaries won’t be respected by default. They must be designed. Otherwise, systems like Aura will optimize across them, treating “you” as a single operational entity.

From Interfaces to Capabilities

In an assistant-driven economy, understanding customer needs doesn’t mean designing new interfaces. It means building capabilities that assistants trust and leverage.

When a personal agent queries your system, it isn’t seeking a screen. It’s looking for service quality:

- Precision: Is your data complete, current, and accurate?

- Capability: Can your system handle edge cases and complex requests?

- Speed: Can you deliver outcomes in milliseconds, not minutes?
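As a rough illustration of how an assistant might weigh these three criteria, the sketch below scores candidate services on measured precision, capability, and speed, and ignores brand entirely. Everything here is an assumption: `ServiceProfile`, the 500 ms latency budget, and the 0.5 / 0.3 / 0.2 weights are invented for the example, and real assistants may rank very differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceProfile:
    name: str
    accuracy: float          # share of responses verified correct, 0.0 to 1.0
    edge_case_pass: float    # share of complex requests handled, 0.0 to 1.0
    p95_latency_ms: float    # 95th-percentile response time


def score(s: ServiceProfile, latency_budget_ms: float = 500.0) -> float:
    """Weighted score; the weights are illustrative, not an industry standard."""
    # Latency at or beyond the budget scores zero; faster responses approach one.
    speed = max(0.0, 1.0 - s.p95_latency_ms / latency_budget_ms)
    return 0.5 * s.accuracy + 0.3 * s.edge_case_pass + 0.2 * speed


def pick(services):
    """Choose the highest-scoring service; brand recognition never enters."""
    return max(services, key=score)
```

Note what is absent from `ServiceProfile`: there is no field for marketing spend, interface polish, or name recognition.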

In this world:

- Your API is your interface.

- Your data is your product.

- Your system reliability defines your brand.

The job of product teams shifts. Buttons don’t matter. Outcomes do. Assistants don’t care about your UX. They care about what your system delivers, how consistently, and how confidently.

If your capability isn’t best-in-class, the assistant will bypass you. Your brand, your interface, and your features become irrelevant.

Strategic Implications: This Isn’t UX

If you think AI agents are just better chatbots, you’re building the wrong product and the wrong company.

This isn’t a UX upgrade. It’s a structural shift. A redistribution of power.

Delegation changes everything, but not for everyone. In some sectors, human oversight will remain mandatory. Industries like healthcare, banking, and defense won’t fully delegate decision-making. Compliance will restrict autonomy.

But for everyone else? The future belongs to services assistants can trust.

Delegation as Product Value

In the old world, your product’s value was measured by how easily a human could operate it.

In the new world, your product’s value is measured by how much cognitive load it removes.

- In H2M, your software was a tool.

- In H2A, your software is either an agent or irrelevant.

Outcome delivery, not tool usability, is now the key performance metric.

Agents as the New UX Layer

This doesn’t mean UX is obsolete. But the front lines of UX are shifting.

Traditional buttons and menus? Secondary. APIs are now your interface.

Yet the critical UX work doesn’t vanish; it evolves. It shifts from the end-user interface to the governance interface.

The new design challenge isn’t: “How do I help the user operate this tool?”

It’s: “How do I design a system that makes this agent easy to trust, direct, and correct?”

That includes:

- Conversational flows for delegating goals.

- Dashboards for reviewing agent performance.

- Clear, unambiguous escalation paths for when human judgment is required.

Trust Engineering Becomes Mandatory

Autonomy isn’t free. Without controlled escalation, delegation becomes dangerous.

This creates a new discipline: Trust Engineering.

Trust Engineering isn’t a feature. It’s foundational. It must be embedded at the system level through:

- Defined confidence thresholds.

- Transparent reasoning where possible.

- Clear escalation triggers and protocols.

- User override and veto mechanisms.

In short: Build for controlled delegation or don’t delegate at all.
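One way to picture these four mechanisms working together is a gate that every agent action passes through. This is a minimal sketch under stated assumptions: `TrustGate`, `AgentStep`, the outcome strings, and the 0.8 threshold are hypothetical, and `execute` and `ask_human` stand in for whatever your system uses to act and to reach a person.

```python
from dataclasses import dataclass, field


@dataclass
class AgentStep:
    action: str
    confidence: float
    rationale: str           # transparent reasoning, where the agent can give it


@dataclass
class TrustGate:
    min_confidence: float = 0.8                  # defined confidence threshold
    vetoed: set = field(default_factory=set)     # actions a human has blocked
    trace: list = field(default_factory=list)    # reviewable record of outcomes

    def veto(self, action: str) -> None:
        """User override: this action is never executed again."""
        self.vetoed.add(action)

    def run(self, step, execute, ask_human) -> str:
        """Execute `step` only if policy and, when needed, a human allow it."""
        if step.action in self.vetoed:
            outcome = "blocked_by_veto"
        elif step.confidence < self.min_confidence and not ask_human(step):
            # Escalation trigger fired and the human said no.
            outcome = "declined_by_human"
        else:
            execute(step)
            outcome = "executed"
        self.trace.append((step.action, step.confidence, step.rationale, outcome))
        return outcome
```

The human is consulted only when confidence falls below the threshold, which keeps oversight where judgment matters instead of turning every action into an approval request.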

The Platform Wars: Owning the Interface Layer

Controlling the assistant layer isn’t a winner-takes-all battle. But it’s a decisive strategic advantage.

Many vendors will survive by integrating into dominant personal agents, sharing user trust through open protocols or differentiated expertise. The competition isn’t for exclusivity — it’s for influence.

Your users may rely on:

- Apple’s Siri 2.0,

- OpenAI’s GPT Agents,

- Amazon Alexa,

- Google’s Gemini ecosystem,

- ...

Whichever it is, if your product is integrated but doesn’t control the interface, you’re plumbing.

Owning the PA layer means owning:

- The brand relationship.

- The transaction flow.

- The monetization leverage.

Lose control of the interface layer, and your product becomes invisible.

This isn’t a UX problem. It’s a platform war.

And most companies haven’t realized they’re already fighting it.

While one battle is to become the customer’s primary personal agent, another is emerging: becoming the undisputed best-in-class specialist agent for a specific domain — whether travel, finance, or logistics.

The future may not belong to a single monolithic governor, but to a federated council of trusted agents.

The Governance Imperative

Let’s be blunt. We’re not building products anymore. We’re building infrastructures.

Agents aren’t a feature. They’re a system.

A system that, if left unchecked, will:

- Erode privacy.

- Centralize control.

- Amplify operational risk.

- Concentrate power.

And yet, many companies are treating agents as just another UX enhancement.

That’s not just a tactical mistake. It’s a governance failure waiting to happen.

The Critical Questions

Who owns the governance layer of your agents? In most organizations, the answer is: no one.

❓ Consider these questions:

- Who audits your agents, and who inside your company is accountable for those audits?

- Who decides when your personal agent should escalate decisions — engineering, legal, or product?

- Who prevents vendor lock-in at the delegation layer — your architecture team or procurement?

- Who owns the orchestration protocols across your products?

- Who defines how much autonomy is too much, and who makes the final call when risks materialize?

If you don’t have clear answers, you’re not governing agents. You’re surrendering to them.

Designing for Strategic Memory

Most organizations aren’t failing because they’re unintelligent. They’re failing because their systems are designed to forget.

Every escalation, every override, every human intervention: that’s strategic memory.

If your agents aren’t learning from these moments, your system isn’t intelligent. It’s automated ignorance.

But memory without understanding is just a more sophisticated form of failure. A system that blindly learns from every correction risks entrenching biases or amplifying errors.

True strategic memory requires a corrective dialogue. When an agent is overridden, it must be designed to ask: why?

Capturing that reasoning transforms an override from a datapoint into a contextual learning moment. That’s the difference between an agent that repeats tasks and one that aligns with human goals.

Governance must include:

- Transparent, structured escalation logs at the system layer, not buried in the UI.

- Retrospective, version-controlled audits of agent decisions.

- Persistent, contextual memory designed into your orchestration layer, not bolted on later.
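A structured escalation log that captures the "why" behind each override might look like the sketch below. It is illustrative only: `OverrideRecord`, `EscalationLog`, and the field names are hypothetical, the log lives in memory rather than durable storage, and tagging each record with an agent version is one simple way to support retrospective audits.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class OverrideRecord:
    agent_version: str     # which agent build made the decision
    proposed: str          # what the agent intended to do
    final: str             # what the human chose instead
    reason: str            # the human's answer to "why?"
    timestamp: str


class EscalationLog:
    """Append-only log kept at the system layer, independent of any UI."""

    def __init__(self):
        self._records = []

    def record_override(self, agent_version, proposed, final, reason):
        if not reason.strip():
            # An override without a reason is a datapoint, not a lesson.
            raise ValueError("an override must carry the human's reasoning")
        rec = OverrideRecord(agent_version, proposed, final, reason.strip(),
                             datetime.now(timezone.utc).isoformat())
        self._records.append(rec)
        return rec

    def by_version(self, agent_version):
        """Support retrospective audits of a single agent version."""
        return [r for r in self._records if r.agent_version == agent_version]

    def to_jsonl(self):
        """Serialize as one JSON object per line for export or review."""
        return "\n".join(json.dumps(asdict(r)) for r in self._records)
```

Rejecting an override that has no reason attached is the point of the design: it forces the corrective dialogue instead of silently learning from an unexplained correction.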

Open Standards or Platform Prison?

Agent ecosystems require interoperability.

Without open protocols, such as Agent Communication Protocols (ACP) or Agent Negotiation Protocols (ANP), you’re heading toward a vendor-controlled future. A world where your assistant can’t speak to other agents without paying a toll.

Regulators, industry consortia, open-source movements — they’re not optional here. Without them, autonomy consolidates rather than democratizes.

But let’s be clear: platform owners have every incentive to build walled gardens.

Therefore, while advocating for open standards is necessary, waiting for them is not a strategy. A parallel approach is essential: become strategically indispensable.

Instead of waiting for open interoperability, focus on being the undisputed best-in-class agent for your domain. Make excluding your service more costly than including it.

Governance Is Not a Legal Problem. It’s a Design Problem.

Embedding governance isn’t compliance theater. It’s product architecture.

If your system doesn’t know:

- When to escalate,

- How to remember interventions, and

- How to respect delegation boundaries,

then you’re not deploying autonomy. You’re deploying liability.

Governance isn’t a safety net. It’s the operating system of your agent infrastructure.

Without pre-defined constraints and escalation protocols, you’re not scaling intelligence. You’re scaling risk.

While the legal frameworks remain unsettled, leadership cannot wait.

The governance imperative requires action now:

- Appoint a cross-functional AI Governance Council to own these questions internally.

- Draft an internal ‘Agent Constitution’ defining your organization’s ethical boundaries and escalation protocols.

- Mandate ‘Auditability by Design’ in all agentic systems, ensuring every decision can be explained and reviewed.

Legal and Governance Caveat: Questions Outnumber Answers

Privacy risks, data ownership, accountability, liability — the governance implications of personal agents are profound and unresolved.

- No universal AI statute spans all jurisdictions. The EU AI Act, U.S. state-level laws, and global regulations diverge rapidly.

- A personal agent might operate in one cloud region while its owner, users, and downstream services span three others. Whose law applies?

- GDPR compliance collides with U.S. privacy fragmentation. Cross-border prompts generate instant legal conflict.

- Liability stacking sounds clear on paper, until a rogue instruction or model drift causes harm. Then, proof, intent, and indemnification collide.

The questions are multiplying faster than answers.

Every deployment should be treated as a live experiment in legal, ethical, and operational governance. And you should plan for rapid revision as the rulebook evolves.

Conclusion: The Future Is Delegated

Here’s the fundamental shift:

In the old world, your product was defined by its interface.

In the new world, your product is defined by the outcomes it delivers through the customer’s assistant.

If your product doesn’t deliver outcomes that agents can trust, your:

- Brand

- User experience

- Feature set

become irrelevant.

Your product will vanish, either silently consumed by personal assistants or bypassed entirely in favor of more efficient alternatives.

You’re no longer designing tools. You’re architecting delegation systems.

And that changes everything.

In this new age:

- Reducing cognitive load is your primary value proposition.

- The customer’s assistant, not your UI, is your integration point.

- Your system’s reliability, not your interface, defines your brand.

You are no longer competing on usability.

You’re competing on:

- Cognitive offload

- Orchestration trust

- Delegation control

But remember: delegation without escalation isn’t autonomy. It’s abdication.

If your agents don’t know when to give control back, your future won’t be autonomous. It will be ungoverned.

AI agents aren’t optional.

The real question is: Will you control the delegation or become invisible inside someone else’s system?

Design for control. Or plan for irrelevance. Establishing this control is the definitive challenge for leadership. The framework of delegation is the starting point, but the path forward demands a rigorous confrontation with the practical 'how,' a clear-eyed assessment of the human and economic costs, and the courage to build the governance that will define this new era.

If your product serves humans today, what will it serve tomorrow: agents or assistants? Let’s discuss.

The opinions expressed here are my own and they represent my personal perspective on long-term industry shifts that will shape the future of business technology.