Beyond the Dashboard | Principle 11: Turn AI into a Judgment Multiplier

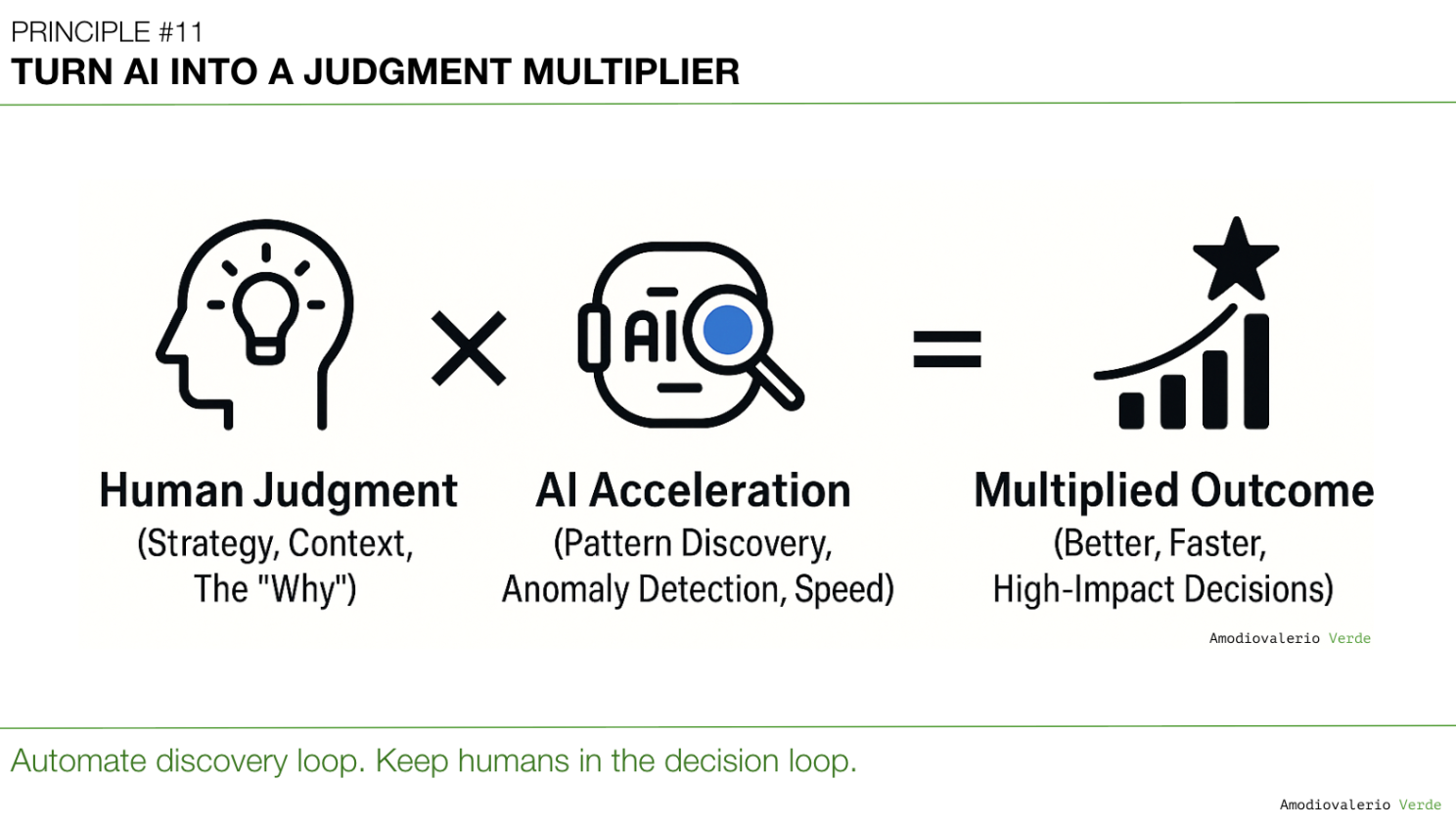

AI is not your strategist; it multiplies your judgment. Automate discovery, keep humans in the decision loop, and treat judgment as the API: clean hypotheses and consequence paths in, clarity out. Use AI to amplify decisions, not outsource them. Judgment is the moat.

TL;DR

- AI is not your strategist. It has no vision, scars, or accountability. It multiplies whatever judgment you feed it.

- If your judgment is clear, AI scales clarity. If your judgment is weak, AI scales confusion at machine speed.

- Your responsibility is not to “adopt AI.” It is to ensure your organization has judgment worth multiplying.

- In regulated and safety-critical workflows, automation is non-negotiable for reliability, compliance and auditability; this piece argues for using AI to accelerate discovery while people retain decision rights on strategic calls.

Why this principle and why now

Across Principles 1-10 we dismantled the illusion that prettier dashboards produce better decisions. We replaced reporting theater with thinking systems. That scaffolding had one purpose: strengthen judgment so decisions are shaped by clarity, not noise. AI is the amplifier that stress-tests whether that scaffolding holds. Feed it confusion and you get confusion at scale. Feed it clarity and you unlock leverage no company has had before.

Dashboards remain the instrumentation layer; Principle 11 is about ensuring that what those instruments surface is framed as hypotheses and decisions, not passive reports.

What this principle commits you to

- Automate the discovery loop with AI.

- Keep humans in the decision loop.

- Treat your organization’s judgment as the API that makes AI useful.

This is the winning playbook. Automate discovery. Own the decision.

The journey to Principle 11

In my role, I'm in constant conversation about the transformative power of AI. But beyond the demos and the hype, a tougher question emerges: how do we embed this power into our organizations without accidentally scaling confusion or automating bias? We're moving from an era of 'adopting AI' to an era of 'using AI wisely.' This has led me to a core belief I'm seeing play out in real-time: the goal isn't to replace human judgment, but to multiply it. Here’s a deep dive into that principle and a playbook for putting it into practice.

As always, the thoughts and frameworks here are my own and don't represent the views of my employer.

The tireless junior analyst: power and limit

What AI is excellent at: parsing logs, clustering feedback, surfacing anomalies, finding correlations, summarizing at scale. What it lacks: a sense of stakes, context, and consequences. It cannot tell which anomaly matters or when to kill a feature. That leap from pattern to meaning is judgment, and it is still your job. Treating AI outputs as instructions is not transformation. It is abdication.

Concrete example

AI flags that users who skip the tour activate at a higher rate. That does not mean “kill the tour.” It might mean the tour is telling instead of showing value. The human task is to interpret and decide: redesign the tour as an interactive path to value.

The strategic price of abdication

If teams stop forming hypotheses and wait for the model to decide, curiosity atrophies, bias is automated, moats evaporate, and accountability dissolves into “the model told us.” At enterprise scale, this becomes catastrophic because AI accelerates mistakes.

Judgment as the new API

Your organization’s judgment is the API. AI is the processor. A clean API multiplies clarity. A messy API multiplies noise.

An API is an interface and contract: you send a well-formed request, you get a predictable response. Treat your judgment the same way. By judgment I mean three things made explicit: a belief or hypothesis, the stakes, and pre-committed consequence paths.

For this API to work, the contract must be built on a foundation of clean, reliable data. Without it, even the clearest judgment is fed noise, and AI will simply find more efficient ways to be wrong.

The Judgment API: a minimal spec

- Endpoint: the decision you must make now. Example: “Reduce onboarding drop-off.”

- Request schema: belief, question, stakes. Example: “We believe value is not shown early. Test correlation between skipping the tour and activation; if variance is above 20 percent, we prototype a new path.”

- Processor: AI accelerates discovery only. It clusters, flags, summarizes. It does not decide.

- Response handling: humans interpret, choose, and commit to an action path.

- Error handling: missing hypothesis or undefined stakes. Outcome: beautifully formatted noise.
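One way to make the spec concrete is to write the request as a small data structure that refuses to count as clean without a hypothesis, stakes, and consequence paths. This is a minimal sketch, not a reference implementation: `JudgmentRequest`, `validate`, the field names, the stakes wording, and the second consequence path are all hypothetical and chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class JudgmentRequest:
    """Hypothetical 'request' to AI-assisted discovery, mirroring the spec above."""
    endpoint: str   # the decision you must make now
    belief: str     # the hypothesis, stated before querying
    question: str   # what discovery should test
    stakes: str     # why the answer matters
    consequence_paths: dict[str, str] = field(default_factory=dict)  # outcome -> action


def validate(request: JudgmentRequest) -> list[str]:
    """Return contract violations; an empty list means the request is clean."""
    errors = []
    if not request.belief.strip():
        errors.append("missing hypothesis")
    if not request.stakes.strip():
        errors.append("undefined stakes")
    if len(request.consequence_paths) < 2:
        errors.append("fewer than two consequence paths")
    return errors


clean = JudgmentRequest(
    endpoint="Reduce onboarding drop-off",
    belief="Value is not shown early",
    question="Does skipping the tour correlate with activation?",
    stakes="Onboarding drop-off is the decision on the table now",  # assumed wording
    consequence_paths={
        "variance above 20 percent": "prototype a new path",
        "variance at or below 20 percent": "keep the tour, test messaging",  # assumed
    },
)
messy = JudgmentRequest(endpoint="", belief="", question="Why are users churning?", stakes="")

print(validate(clean))  # []
print(validate(messy))  # ['missing hypothesis', 'undefined stakes', 'fewer than two consequence paths']
```

The validator deliberately stops at the contract. It checks that judgment was supplied; it does not try to judge whether the hypothesis is a good one, which stays with the team.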

Clean vs messy in practice

Clean requests look like:

- “We believe churn spikes because users fail to see value in the first session.”

- “Test whether activation drops correlate with skipped onboarding.”

- “If variance exceeds our threshold, we test an alternate path.”

Messy requests look like:

- “Why are users churning?”

- “Show me insights from the data.”

- “What does the AI recommend?”

Clean multiplies judgment. Messy multiplies noise.

Automate discovery. Own the decision.

Split the work into two loops and make the ownership explicit.

Discovery loop: AI excels

- Surface anomalies in driver metrics.

- Cluster qualitative feedback at scale.

- Identify correlations between behaviors and outcomes.

- Simulate scenarios to shape better questions.

Decision loop: humans are accountable

- Frame the question and form the hypothesis.

- Set stakes and pre-commit decision paths.

- Interpret signals in strategic context.

- Make the call and own consequences.

The temptation of autopilot

Large organizations are especially drawn to “AI autopilot.” It sounds efficient. It looks modern. It feels safe in board decks. Automated prioritization, predictive resource allocation, AI-generated roadmaps. Here is the problem. Models are trained on the past and need human framing to reason about the future. Strategy is about the future. If you put your organization on autopilot, you are not navigating. You are drifting with confidence toward irrelevance.

While some may argue for full automation to achieve maximum speed, this approach mistakes velocity for vector, leading to brittle strategies that optimize for the past right up until the moment they become irrelevant.

Aviation is a helpful analogy. Autopilot reduces fatigue and handles routine operations. When turbulence hits or an engine fails, autopilot disengages and a human takes over. Judgment in moments of consequence cannot be automated. Companies that confuse autopilot with leadership repeat the same error. They optimize past patterns and miss discontinuities, threats, and opportunities. When the turbulence comes, their judgment muscles are weak.

This is why the final principle is not “adopt AI.” The principle is “decide how to use AI.” Automate discovery. Keep humans in the decision loop. Your role is to build a system where AI accelerates signal, while people decide. Anything else is abdication dressed up as transformation.

Automation vs amplification

Draw a sharp line.

Automation saves time. Amplification creates advantage. If you only automate, you get efficiency but no edge. If you mistake automation for amplification, you overtrust the machine and outsource judgment. Both paths are dangerous. One leaves advantage on the table. The other gives it away. Your job is to set the boundary between the two.

Automation is the efficiency layer.

Use AI for routine, repeatable, operational work. Examples: transcribing sales calls, summarizing support tickets, flagging anomalies, and automating reporting pipelines. This work matters because it frees up time. It does not produce a moat. Once a vendor ships a tool, everyone can adopt it. Treat automation as table stakes.

Amplification is the judgment layer.

Use AI to sharpen human thinking, accelerate discovery, and pressure-test assumptions. Examples: simulate counterfactuals, run hypothesis loops faster, stress-test assumptions in real time with scenario analysis. Amplification changes the quality and speed of decisions. It takes a strong judgment system and scales it. This is where advantage emerges.

Without clear boundaries, teams either underuse AI or overtrust it. Underuse leads to faster reports and the same decisions. Overtrust leads to passivity and fragile strategy. Set the boundary, then enforce it in process, language, and review.

This distinction is critical in the age of Generative AI. Using GenAI to summarize meeting notes is automation. Using it as a 'sparring partner' to challenge your strategy and simulate counter-arguments is amplification.

The 3As of judgment multiplication

Here is the leadership playbook for the AI era. Three simple, non-negotiable disciplines.

Ask first.

No hypothesis, no query. Your teams must state a belief before they ask AI anything. Example: “We believe churn is caused by activation friction.” Now AI can cluster activation drop-offs, compare cohorts, and flag anomalies. Without this, queries become fishing expeditions and noise floods the system. Make “ask first” a hard gate in reviews.

Anchor in consequences.

Every AI query must tie to a decision path. Frame outputs as “If A then X. If B then Y.” Example: “If users who skip onboarding convert higher, we redesign the tour. If not, we test messaging instead.” This prevents decorative insights and forces conversion of output into action. Write the consequence pairs into tickets and briefs.
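A consequence pair can be as simple as a lookup table that is written before the query runs. The sketch below assumes the onboarding example above; the outcome labels, function names, and conversion rates are made up for illustration.

```python
# Written down BEFORE the query runs: outcome label -> committed action.
# The two actions come from the example above; the labels are hypothetical.
CONSEQUENCE_PAIRS = {
    "skippers_convert_higher": "redesign the tour",
    "skippers_do_not_convert_higher": "test messaging instead",
}


def classify_outcome(skip_conversion: float, tour_conversion: float) -> str:
    """Turn the discovery output into one of the pre-committed outcome labels."""
    if skip_conversion > tour_conversion:
        return "skippers_convert_higher"
    return "skippers_do_not_convert_higher"


def committed_action(skip_conversion: float, tour_conversion: float) -> str:
    """Look up the action the team agreed to for the observed outcome."""
    return CONSEQUENCE_PAIRS[classify_outcome(skip_conversion, tour_conversion)]


# Made-up conversion rates, purely to show both branches.
print(committed_action(skip_conversion=0.31, tour_conversion=0.24))  # redesign the tour
print(committed_action(skip_conversion=0.20, tour_conversion=0.24))  # test messaging instead
```

The lookup only surfaces the path the team already committed to. A person still reviews the signal in context and owns the call.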

Audit the system.

Do not only inspect outputs. Inspect the loop itself. Ask: Are teams treating AI as a sparring partner or as a strategist? Is it multiplying clarity or multiplying bias? Has accountability shifted from people to algorithms? If accountability is drifting toward the machine, pull it back. Audit the judgment system, not just the metrics.

Together, the 3As keep AI in its rightful place. Amplifier, not autopilot. They convert “AI adoption” from a tooling story into a leadership system.

Make the boundary visible in operating rhythm

Translate the boundary into a cadence.

- Team reviews. Start with the hypothesis and consequence pairs. Accept only clean “requests” to AI. Reject “show me insights” prompts. Require evidence that outputs were treated as input, not instruction.

- Product council. Separate the automation layer from the amplification layer. Celebrate time saved, then spend most of the meeting on decisions improved. Ask which assumptions were stress-tested, which scenarios were simulated, and what changed as a result.

- Quarterly audit. Sample 10 analyses that used AI. Score them against the 3As. Look for bias multiplication and accountability drift. Publish the findings. Build a culture where clean judgment is visible and rewarded.
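The quarterly audit can start as a very small script. The following is a sketch under stated assumptions: the `Analysis` record, its three boolean fields, and the one-point-per-A scoring are hypothetical, and reading the third A as "a named person stayed accountable" is only one possible interpretation.

```python
import random
from dataclasses import dataclass


@dataclass
class Analysis:
    """Hypothetical record of one AI-assisted analysis."""
    name: str
    stated_hypothesis: bool   # Ask first
    consequence_pairs: bool   # Anchor in consequences
    accountable_owner: bool   # Audit: accountability stayed with a person


def score(analysis: Analysis) -> int:
    """Count how many of the 3As the analysis satisfied (0 to 3)."""
    return sum([
        analysis.stated_hypothesis,
        analysis.consequence_pairs,
        analysis.accountable_owner,
    ])


def quarterly_audit(analyses: list[Analysis], sample_size: int = 10, seed: int = 0) -> dict[str, int]:
    """Randomly sample analyses and return each sampled name with its 3As score."""
    rng = random.Random(seed)  # fixed seed so the sample can be reproduced
    sample = rng.sample(analyses, min(sample_size, len(analyses)))
    return {a.name: score(a) for a in sample}


# Made-up data: 12 analyses, some missing a hypothesis or an owner.
analyses = [
    Analysis(f"analysis-{i}", stated_hypothesis=(i % 3 != 0),
             consequence_pairs=True, accountable_owner=(i % 2 == 0))
    for i in range(12)
]
report = quarterly_audit(analyses)
weak = [name for name, points in report.items() if points < 3]
print(len(report), "sampled;", len(weak), "scored below 3")
```

A checklist score like this is a conversation starter for the audit, not a verdict. Bias multiplication and accountability drift still need a human reading of the sampled work.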

What goes wrong when you skip the 3As

If you skip “ask first,” the organization learns to query the model instead of forming a view. Curiosity weakens. Discovery collapses into obedience.

If you skip “anchor in consequences,” you generate impressive decks that do not move decisions.

If you skip “audit,” bias and accountability quietly migrate into the model. At scale, these misses are not theoretical. They are expensive.

What success looks like

You will know the system is working when hypotheses appear before charts, when the same metric is defined the same way everywhere, and when AI outputs are brought to the table as signals to interpret rather than orders to follow. You will also see fewer slides and more decisions.

Three moves to start this month

Do not wait for a 12-month rollout. Start now with three simple moves.

1) Kill one vanity dashboard panel, not just a metric.

Find a chart or metric that is still reported but that nobody uses. Delete it. By cutting noise, you sharpen the judgment signal. Example: the weekly logins chart that never changes a decision. Announce the kill in the team channel and explain the why. This sets a new tone.

2) Lock one definition and show it inline on the dashboard.

Pick a KPI that sparks endless debate. Activation, churn, or retention are common offenders. Write down the formula, the source, and the owner. Make it unambiguous and publish it where everyone can see it. Example: “Activation equals user completes three core actions within seven days, measured in product logs, owned by Growth Analytics.” This single act removes hours of wasted argument.
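A locked definition is easier to enforce when the formula also exists as code next to its source and owner. The sketch below encodes the example definition; `MetricDefinition` and `is_activated` are hypothetical names, and two details are assumptions the example does not state: the seven-day window starts at signup, and repeated core actions count toward the three.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)  # frozen: a locked definition should not be mutated in place
class MetricDefinition:
    name: str
    formula: str
    source: str
    owner: str


ACTIVATION = MetricDefinition(
    name="Activation",
    formula="user completes three core actions within seven days",
    source="product logs",
    owner="Growth Analytics",
)


def is_activated(signup: datetime, core_action_times: list[datetime]) -> bool:
    """True if at least three core actions fall within seven days of signup.

    Assumption: the window is measured from signup, and repeated actions count.
    """
    window_end = signup + timedelta(days=7)
    in_window = [t for t in core_action_times if signup <= t <= window_end]
    return len(in_window) >= 3


signup = datetime(2024, 1, 1)  # made-up date for illustration
print(is_activated(signup, [signup + timedelta(days=d) for d in (1, 2, 3)]))  # True
print(is_activated(signup, [signup + timedelta(days=d) for d in (1, 2, 9)]))  # False
```

Writing the formula down this way tends to expose the ambiguities that fuel the debate, such as when the clock starts, which is exactly what the owner needs to settle.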

3) Run one adoption deep dive and publish the decision on the dashboard’s outcome layer.

Choose a feature shipped in the last six months. Track its discovery, activation, usage, and value. Use AI to cluster feedback and identify drop-offs. Decide whether to redesign, deprecate, or double down. Example: a collaboration feature launched to applause but hidden in onboarding. The decision is to redesign discovery. Publish the decision and the learning.

These moves reset expectations. They tell the organization that AI exists to accelerate judgment, not replace it. They also convert dashboards from history reports into instruments for future decisions.

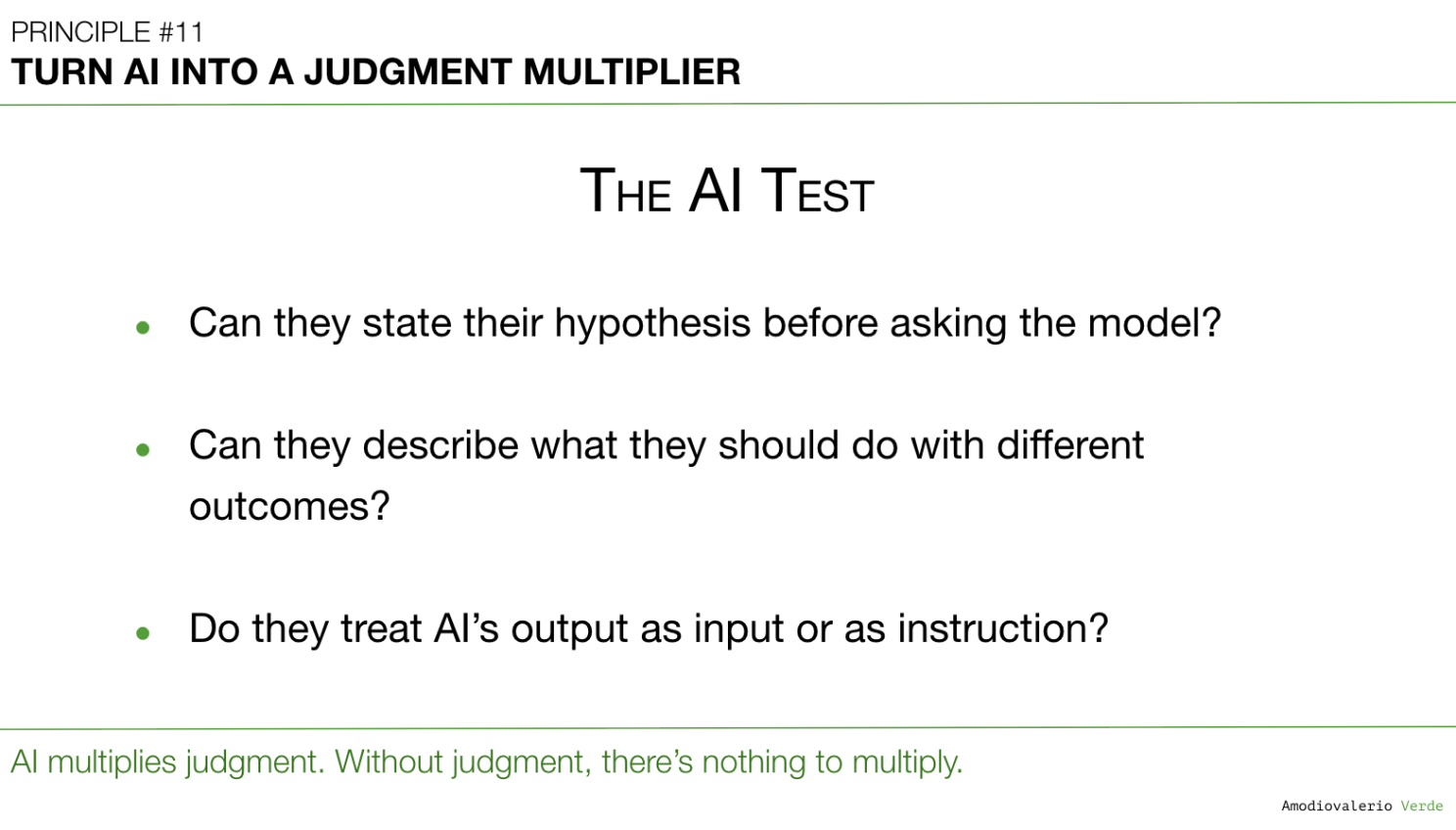

The final test for your teams

When a team presents an AI-generated insight, ask three questions. If they cannot answer clearly, they are not ready to treat AI as a multiplier.

- What was your hypothesis before you queried the model?

- What would you do under outcome A versus outcome B?

- Are you treating the output as input or as instruction?

If they treat the output as instruction, AI is just filling the silence where judgment should be. Insist on hypotheses, consequence paths, and accountable decisions.

Enterprise guardrails

Enterprises pay a higher price for abdication because scale multiplies mistakes. A wrong bet at a startup wastes weeks. The same bet at a 20,000-person company wastes markets. Use AI as an amplifier and keep executives accountable for decisions, not dashboards. This is not optional governance. It is core strategy.

Closing the Loop: The End of the Series

We started this journey by acknowledging an uncomfortable truth: our beautiful dashboards were often making us dumber, creating an illusion of clarity while judgment quietly withered. We were tracking everything and deciding nothing.

Across eleven principles, we’ve built a new system, not for reporting, but for reasoning.

We began by Avoiding the Data Delusion, recognizing that statistical significance is often strategically irrelevant.

We shifted our posture to Adopt a Data-Informed Approach, deciding on the meal before opening the fridge.

We learned to Choose What to Measure, treating metrics like a cockpit, not a buffet.

We started using Frameworks as Filters, Not Blueprints, as tools to sharpen thinking, not replace it.

We shifted our focus to Adoption, Not Just Delivery, understanding that shipping is a cost and adoption is the asset.

We learned to Know Our Tool Stack’s Boundaries, managing a portfolio of partial truths instead of chasing a single mythical one.

We designed Layered Dashboards to Scale Thinking, giving executives a telescope, teams levers, and analysts a microscope.

We learned to Manage Multi-Product Portfolios Separately, rejecting Franken-metrics that hide the truth.

We committed to Reconciling Metric Definitions, knowing that teams arguing about numbers are really arguing about definitions.

We moved to Build Thinking Systems, Not Reporting Systems, designing structures that help us decide what to do next, not just report on what happened.

And finally, we’ve learned to Turn AI into a Judgment Multiplier, using it to augment our most valuable and uniquely human skill.

That’s the entire shift: from reporting to reasoning. From passive tracking to active thinking. And from dashboards that just look good, to systems that actually help you win.

Dashboards don’t make decisions. You do. AI won’t replace judgment; it will expose it and multiply it. And in this next era, the teams who win will be the ones who don’t just track progress… they decide where to go.

PAQs – Potentially Asked Questions

How do you actually train "judgment" in product managers so there's something for AI to multiply?

Judgment isn't a mystical quality; it's a muscle built through structured practice. You train it by creating systems that force rigorous thinking. First, implement the "Thinking Loop": for every initiative, require teams to explicitly state the question, their belief, and the stakes. Second, create a "Decision Log" where teams document not just what they decided, but why, including the data considered and the assumptions made. Reviewing these logs quarterly teaches pattern recognition about what makes a good or bad decision. Finally, celebrate learning from failed hypotheses as much as successes from correct ones. A culture that punishes being wrong creates a culture that avoids forming a hypothesis in the first place, which is the death of judgment.

What happens if the AI's recommendation consistently outperforms human judgment? At what point do we trust the machine?

The goal isn't to beat the machine; it's to partner with it. If an AI model is consistently better at a specific, bounded task (like optimizing ad spend or suggesting button colors), you absolutely should automate that task. That frees up human judgment for higher-order problems the AI can't solve, such as: "Should we be in this market at all?" or "What is the core, un-met emotional need of our target user?" Trust the AI for optimization within a defined system. Trust humans to question and redesign the system itself. The most dangerous trap is letting AI's tactical excellence lull you into outsourcing strategic direction.

This all sounds great for Product teams. How do you get Sales, Marketing, and other functions to adopt this "judgment-led" mindset?

The principles are universal because every department uses data to make decisions. The key is to translate the concepts into their language. For Sales, instead of "data-informed," talk about "using metrics to qualify leads better, not just faster." For Marketing, it’s about "using analytics to understand customer intent, not just track clicks." The "Metric Dictionary" from Principle 9 is the most powerful tool for driving cross-functional alignment. When Sales, Marketing, and Product agree on the definition of a "Qualified Lead," you've already won half the battle. The change starts when a leader has the courage to stop a meeting and say, "These numbers don't match. Let's pause this decision until we agree on what we're measuring."

What are the biggest risks for a company that fails to make this shift and continues to treat AI as a decision-making oracle?

The risks are systemic and existential. First, you get strategic drift. The company will become incredibly efficient at optimizing for local maxima, completely missing disruptive shifts in the market because "the data didn't tell us to pivot." Second, you have talent atrophy. Your best strategic thinkers will leave because their judgment is undervalued, and you'll be left with operators who are great at following dashboards but incapable of setting a new course. Finally, and most dangerously, you create brittle systems. An organization that has outsourced its judgment to AI models is incredibly vulnerable. When a true crisis hits or market conditions change in a way the model wasn't trained on, the organization will freeze, unable to reason from first principles.

Isn't there a risk that by emphasizing human judgment, we re-introduce the very biases that being "data-driven" was supposed to eliminate?

Yes, and this is the essential tension. A purely "data-driven" approach can lead to strategic blindness, while a purely "intuition-driven" approach is susceptible to bias. The data-informed model sits in the middle and manages this tension explicitly. The "Thinking Loop" is the mechanism for this: you start with your belief (which may contain bias), but then you are forced to state how data could prove you wrong. You make your intuition falsifiable. AI can be a powerful partner here. You can ask it, "Based on this data, what are the counter-arguments to my hypothesis?" This uses the machine's objectivity to stress-test our subjective judgment in a structured way.

What is the single most important skill for a leader to cultivate in their teams for this new era?

The ability to ask powerful questions. In the old world, value came from having the answers. In the new world, where answers are cheap and machine-generated, value comes from knowing which question to ask. A leader's job is no longer to be the chief decision-maker, but the chief question-framer. They must coach their teams to move from "show me the data" to "what is the most important question we need to answer, and how can data help us get there?" Every other principle in this series flows from that one skill.

The article's thesis seems too conservative and risks being outmaneuvered. Why not fully trust AI?

Fully trusting AI mistakes speed for true advantage. A competitor on AI autopilot is simply optimizing past patterns, making their strategy brittle and blind to market shifts their models weren't trained on. Autopilot doesn't eliminate bias; it automates it and dissolves accountability into "the model told us." The goal is to use AI as a sparring partner to make human judgment falsifiable, not to replace it. We trust AI for optimization within a system, but trust humans to question and redesign the system itself.

After 11 principles, what's the one-sentence summary of the entire "Beyond the Dashboard" philosophy?

Stop using data to report on the past and start using it as a tool to reason about the future, because judgment is, and will remain, the last unfair advantage.

Each principle in this series builds upon the last to form a coherent system for better decision-making. Here is the full list of principles we are exploring:

Intro: Beyond the Dashboard Series

Principle 1: Avoid the Data Delusion

Principle 2: Adopt a Data-Informed Approach

Principle 3: Choose What to Measure

Principle 4: Use Frameworks as Filters, Not Blueprints

Principle 5: Focus on Adoption, Not Just Delivery

Principle 6: Know Your Tool Stack’s Boundaries

Principle 7: Build Layered Dashboards to Scale Thinking

Principle 8: Manage Multi-Product Portfolios Separately

Principle 9: Reconcile Metric Definitions Before Analysis

Principle 10: Build Thinking Systems, Not Reporting Systems

Principle 11: Turn AI into a Judgment Multiplier